Chapter 1

SEO basics

Before we dive into specific techniques and aspects of SEO, let’s cover the basic definitions, vocabulary and frequently asked questions. Are you ready? Let’s start!


What is SEO?

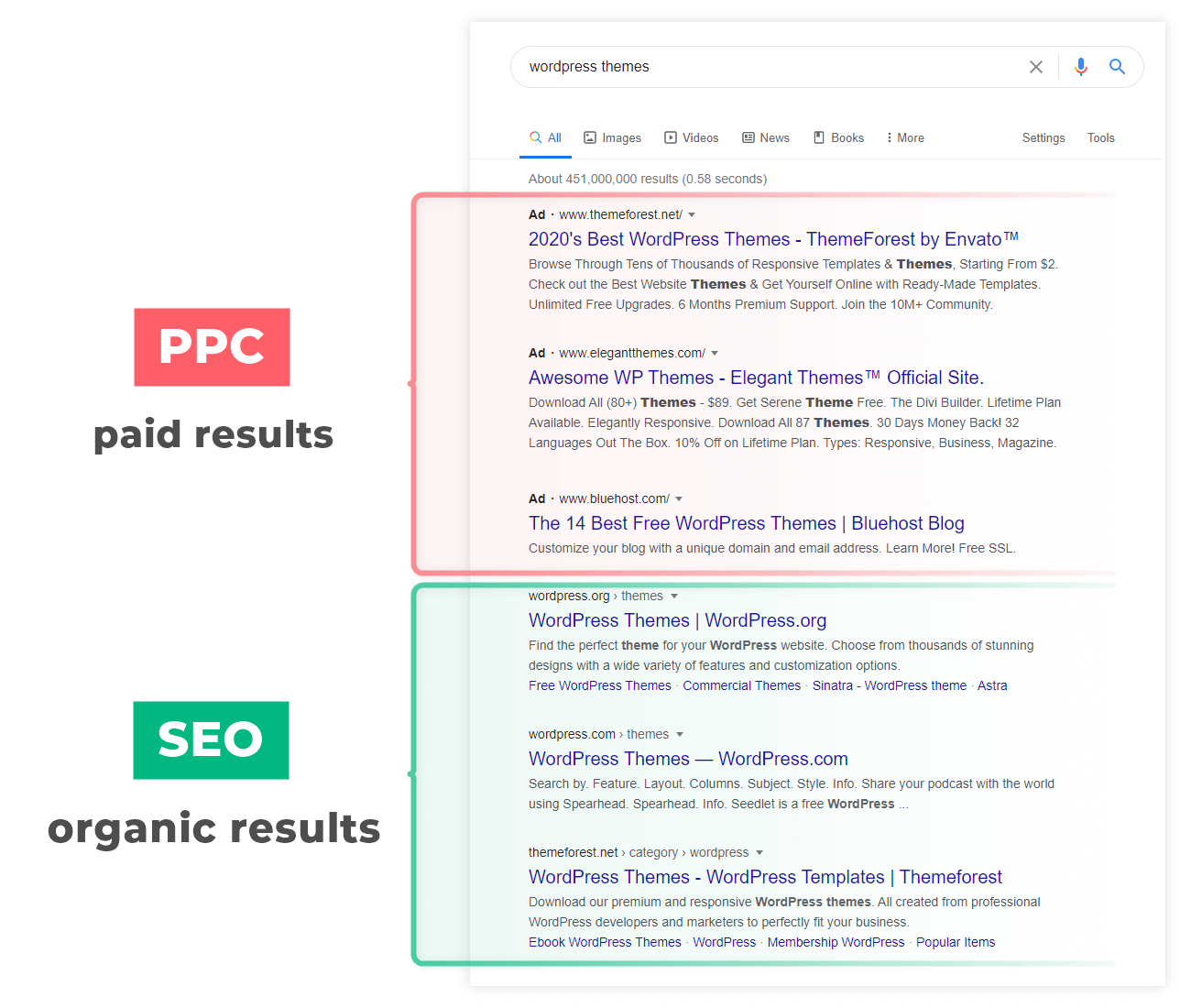

Search engine optimization (SEO) is a process of optimizing your website with the goal of improving your rankings in the search results and getting more organic (non-paid) traffic.

The history of SEO dates back to the 1990s, when the first search engines emerged. Nowadays, it is an essential marketing strategy and an ever-growing industry.

Search engine optimization focuses only on organic search results and does not include PPC (pay-per-click) advertising. Both SEO and PPC are part of Search Engine Marketing (SEM).

People use search engines when they are looking for something.

And you want to provide the answer to that something. Whether you sell a product or service, write a blog, or do anything else, search engine optimization is a must for every website owner.

To put it simply:

SEO is all the actions you do to make Google consider your website a quality source and rank it higher for your desired search queries.

Note: Although SEO stands for “search engine optimization”, with the current dominance of Google, we could simply use the term “Google optimization”.

That’s why all the tips and techniques in this guide are mainly about Google SEO, although many things are universal and apply to the optimization for any other search engine.

SEO in a nutshell

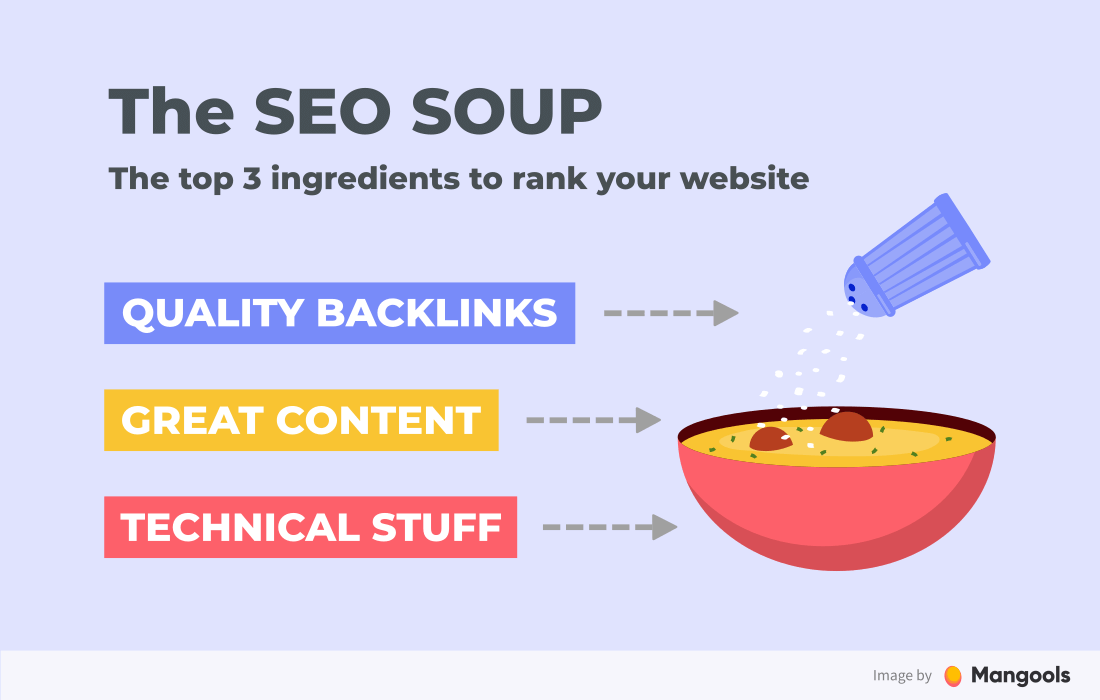

You don’t need to know ALL the factors and the exact algorithms Google uses to rank your website. But you need to cover the key components of SEO to be successful.

An easy way to understand the 3 most important factors is to imagine a bowl of soup – the SEO soup.

There are three key aspects of SEO:

- Technical stuff – The bowl represents all the technical aspects you need to cover (often referred to as technical or on-page SEO). Without a proper bowl, there would be nothing to hold the soup.

- Great content – The soup represents the content of your website – the most important part. Low-quality content = no rankings, it is that simple.

- Quality backlinks – The seasoning represents the backlinks that increase the authority of your website. You can have great content and a perfectly optimized website but ultimately, you need to gain authority by getting quality backlinks – the last ingredient to make your SEO soup perfect.

In the following chapters, we’ll take a look at all of these aspects from the practical point of view.

Useful vocabulary

As soon as you start digging into SEO, you’ll come across some common terms that try to categorize its various aspects or approaches, namely:

- On-page SEO & off-page SEO

- Black hat SEO & white hat SEO

Although they are not that important from the practical point of view, it is good to know their meaning.

On-page SEO & off-page SEO

The terms on-page and off-page SEO categorize SEO activities based on whether you perform them on the website or outside of it.

On-page SEO is everything you can do on the website – from content optimization to technical aspects. Typical on-page tasks include:

- Keyword research

- Content optimization

- Title tag optimization

- Page performance optimization

- Internal linking

The goal is to provide both perfect content and UX while showing search engines what the page is about.

Note: The terms on-page SEO and technical SEO are sometimes used interchangeably and sometimes used to distinguish the content-related optimization (e.g. title tags) and technically-oriented optimization (e.g. page speed).

Off-page SEO is mostly about getting quality backlinks to show search engines that your website has authority and value. Link building may involve techniques like:

- Guest blogging

- Email outreach

- Broken link building

Off-page SEO is also closely connected to other areas of online marketing, such as social media marketing and branding, which have an indirect impact on building the trust and authority of your website.

Remember that a successful SEO strategy consists of both on-page and off-page SEO activities.

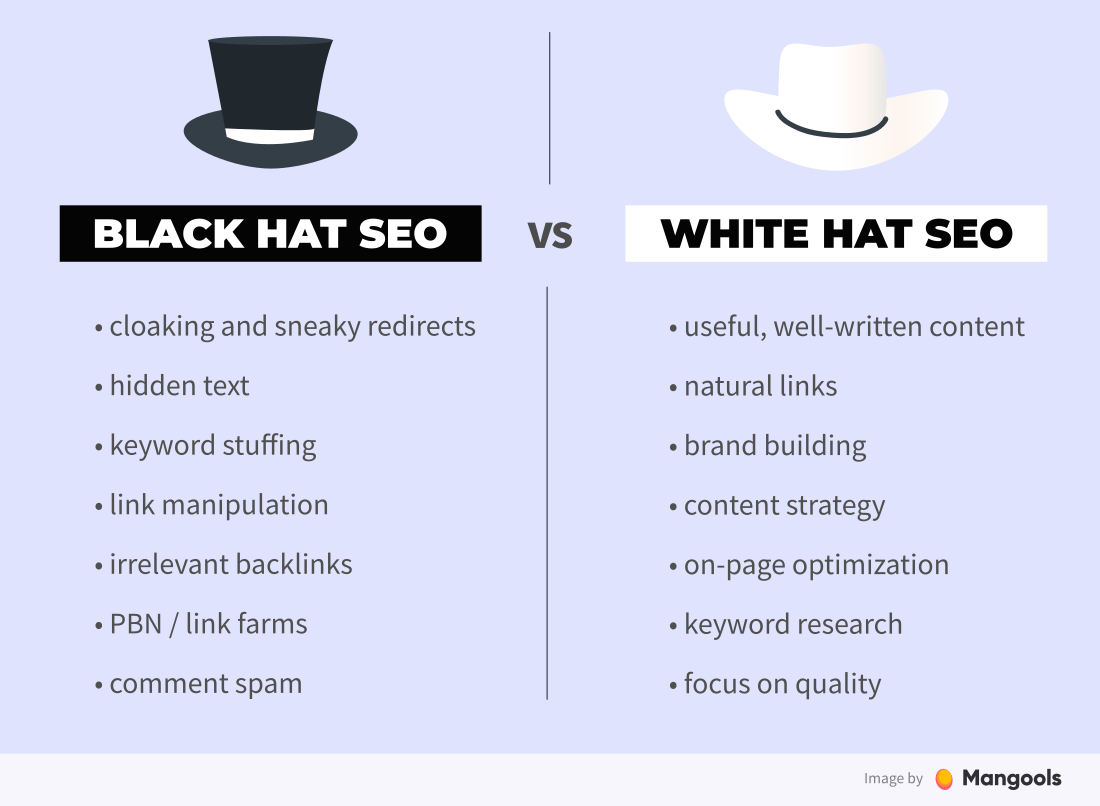

White hat SEO vs. black hat SEO

The terms originate in Western movies, where black hats were worn by the bad guys and white hats by the good guys.

In SEO, the terms describe two groups of SEOs – those who adhere to the rules set out in Google’s Webmaster Guidelines (now called Google Search Essentials) and those who don’t.

Black hat SEO is a set of unethical (and usually spammy) practices to improve the rankings of a website.

These techniques can get you to the top of the search results in a short time; however, search engines will most probably penalize and ban the website sooner or later.

White hat SEO, on the other hand, refers to all the regular SEO techniques that stick to the guidelines and rules. It is a long-term strategy in which good rankings are a by-product of good optimization, quality content, and a user-oriented approach.

While SEO experts agree that “white hat” is the way to go, there are different opinions on the acceptability of various link building techniques (including link buying).

Frequently asked questions

Can I do SEO on my own?

SEO is not easy. But it’s no rocket science either.

There are things you can implement right away and there are concepts that will take much more time and effort. So yes, you can do SEO on your own.

The only question is whether you are willing to invest time in learning all the aspects of SEO, or whether you’d rather hire a professional and invest your time in something else.

How can I learn SEO?

There are a couple of things you should do to learn SEO:

- Read reliable resources

- Get hands-on experience

- Don’t be afraid of experiments

- Have a lot of patience (SEO is a marathon, not a sprint)

Implementing the things from this guide is a great way to start 🙂

How long does it take to learn SEO?

To answer this question, we’ll use the answer SEO experts give to almost any SEO question: it depends.

While understanding the basics won’t take you longer than a couple of weeks, actually mastering this discipline depends largely on practice, which takes months, even years.

Last but not least, SEO is evolving all the time. You should keep learning and stay on top of the latest updates, experiments and findings.

Do I need SEO tools?

If you’re serious about SEO, you shouldn’t neglect the useful data and insights provided by various SEO tools. They give you a great competitive advantage and save a lot of your time.

Here are some essential SEO tools every website owner should use:

- Google Search Console

- a traffic analysis tool (e.g. Google Analytics)

- a keyword research tool (e.g. KWFinder)

- a backlink analysis tool (e.g. LinkMiner)

- a rank tracker (e.g. SERPWatcher)

You can also utilize various small free SEO tools directly on our website (yes, they are really free 😉)

Is SEO dead?

When people use the phrase “SEO is dead”, they usually mean that “the spammy attempts to cheat the Google algorithm that were used 10 years ago are dead”.

Other than that, search engine optimization is an essential marketing strategy and an ever-growing industry.

Chapter 2

Search engines

In the 2nd chapter of this SEO guide, you will learn what search engines are, how they work and what the most important SEO ranking factors in Google are.

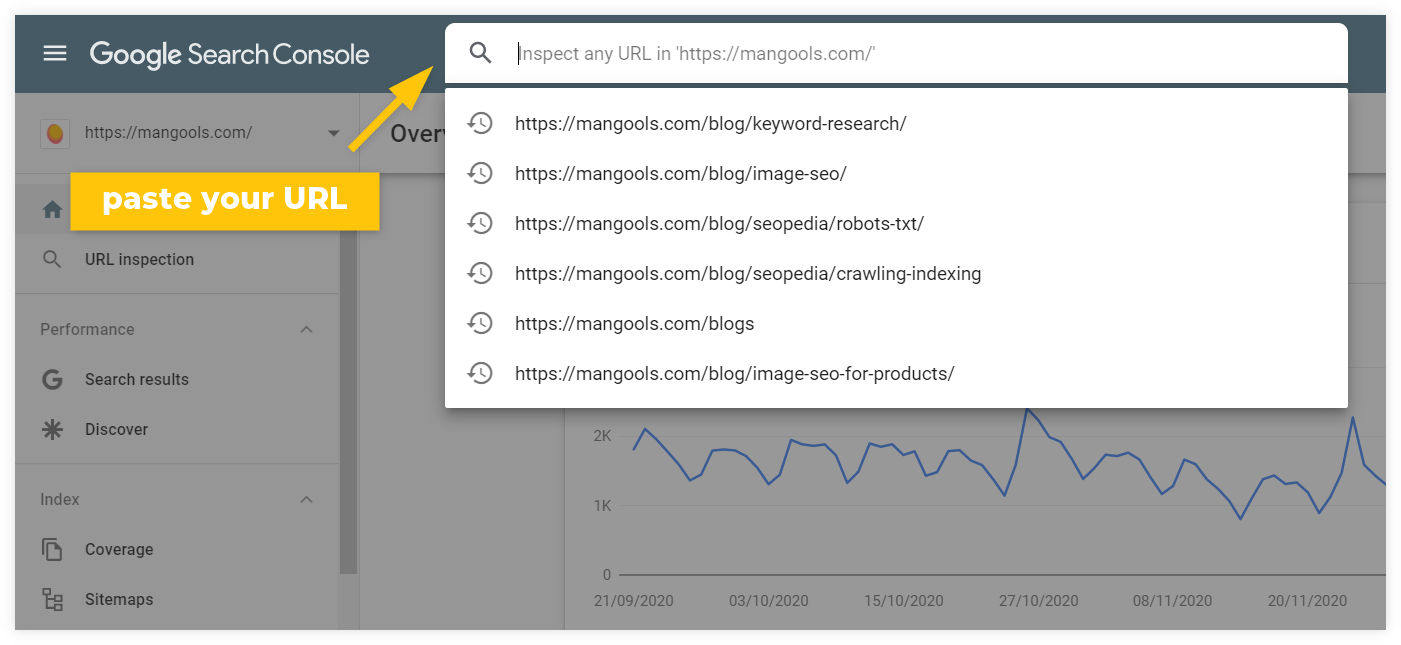

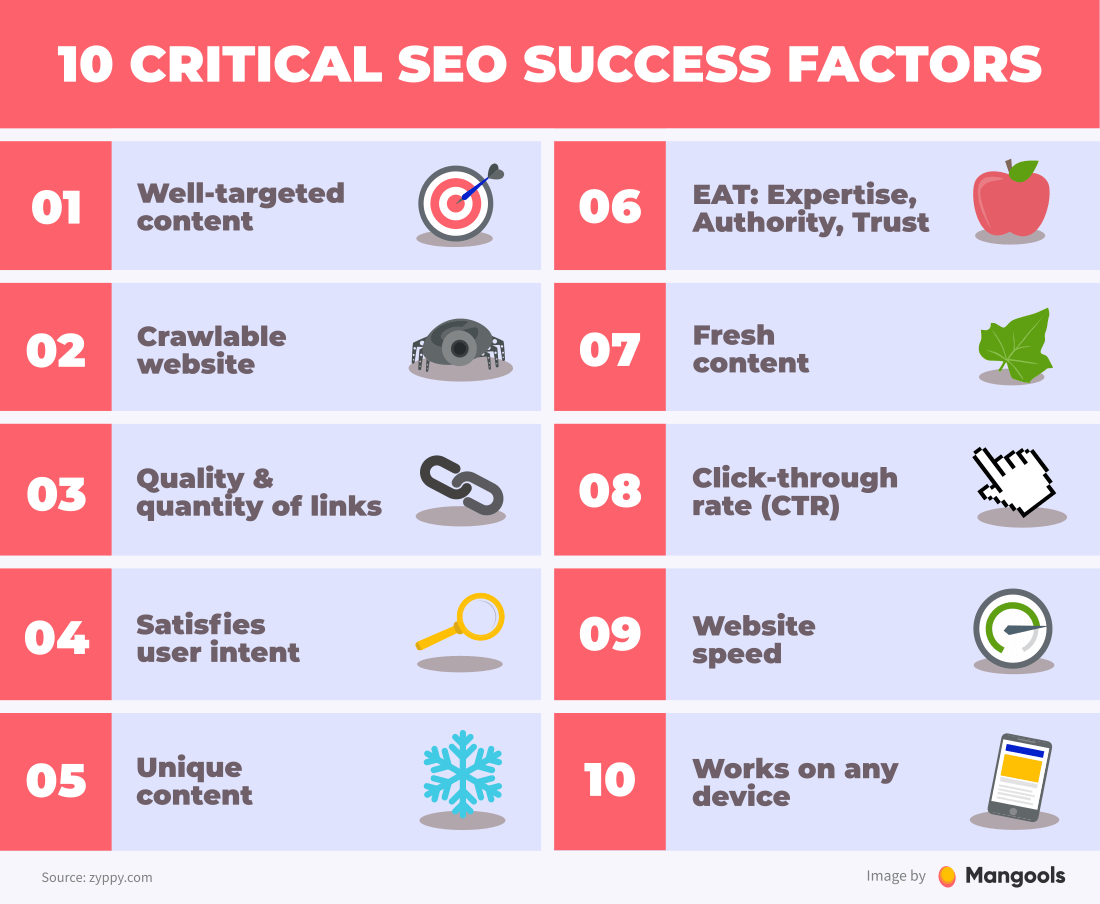

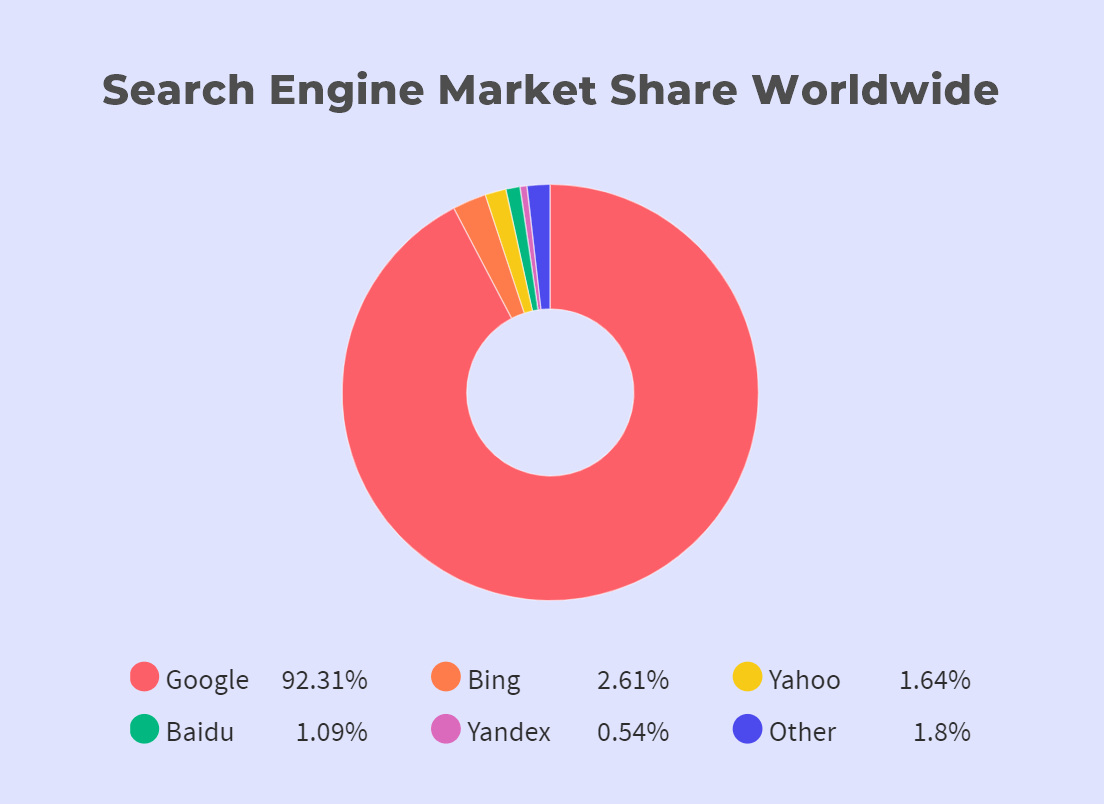

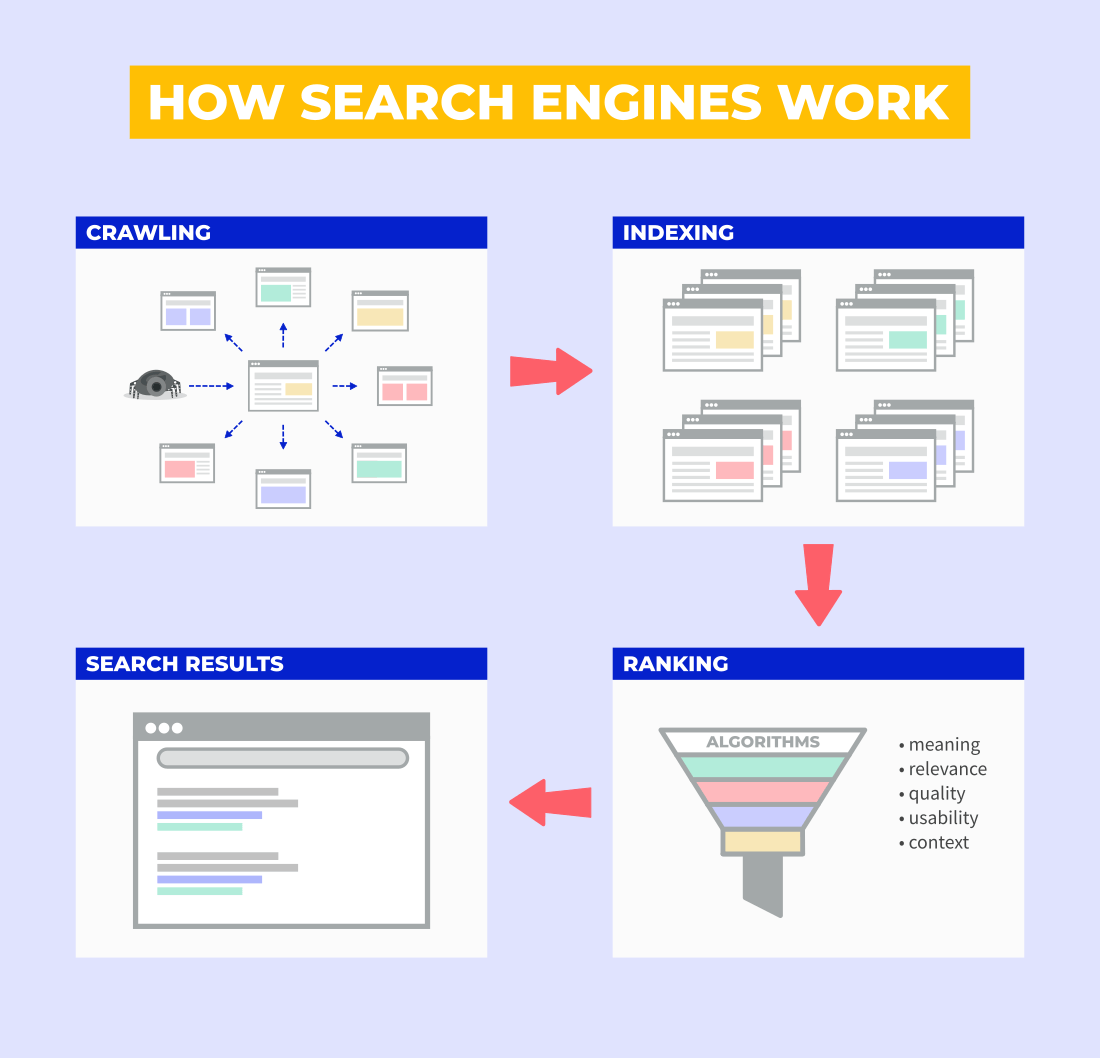

What are search engines?

A search engine is an online tool that helps people find information on the internet. A typical example? Google. And the truth is, Google is probably also the only example you need to know. Just look at the chart of the worldwide search engine market share (data by Statcounter):

So when we talk about search engines in this guide, we mostly mean Google. Other search engines work on similar principles, and as long as your website is optimized for Google, you should be all set for the others too.

Tip: Find out more about the most popular search engines in our SEOpedia article on this topic.

How search engines work

The process in which search engines work consists of these main steps:

…and finally, showing the search results to the user.

The process looks something like this:

Crawling

Crawling is the process in which search engines continuously scan webpages across the internet. They use small programs (called crawlers or bots) to follow hyperlinks and discover new pages (as well as updates to the pages they discovered before).

Martin Splitt, Google Webmaster Trends Analyst, describes the crawling process quite simply:

“We start somewhere with some URLs, and then basically follow links from thereon. So we are basically crawling our way through the internet (one) page by page, more or less.”

Indexing

Once the website is crawled, the information is indexed. The search engines try to analyze and understand the pages, categorize them, and store them in the index.

The search engine index is basically a gigantic library of all the crawled websites with a single purpose – to understand them and have them available to be used as a search result.

Tip: If you want to find out whether your page was crawled and indexed, simply go to your Google Search Console (more on the tool in the last chapter) and use the URL Inspection Tool:

You’ll see when the page was last crawled as well as warnings about any potential crawling and indexing issues Google may have with your page.
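To make crawling and indexing a bit more concrete, here is a toy sketch in Python. It is not how Google works internally: the “web” is a hypothetical in-memory dictionary of example.com pages, the crawler does a simple breadth-first walk that follows links from a seed URL, and the index is a basic inverted index that maps each word to the pages containing it.

```python
from collections import defaultdict, deque
import re

# Hypothetical in-memory "web": each URL maps to its page text and outgoing links.
TOY_WEB = {
    "https://example.com/": {
        "text": "Coffee guide home",
        "links": ["https://example.com/beans", "https://example.com/espresso"],
    },
    "https://example.com/beans": {
        "text": "How to choose coffee beans",
        "links": ["https://example.com/"],
    },
    "https://example.com/espresso": {
        "text": "Espresso brewing basics",
        "links": ["https://example.com/beans", "https://example.com/grinders"],
    },
    "https://example.com/grinders": {
        "text": "Coffee grinders for espresso",
        "links": [],
    },
}


def crawl(seed_urls, web):
    """Breadth-first crawl: start from seed URLs and follow links to discover new pages."""
    seen = set(seed_urls)
    queue = deque(seed_urls)
    crawled = []
    while queue:
        url = queue.popleft()
        page = web.get(url)
        if page is None:  # broken link: nothing to fetch
            continue
        crawled.append(url)
        for link in page["links"]:
            if link not in seen:  # only queue pages we have not discovered yet
                seen.add(link)
                queue.append(link)
    return crawled


def build_index(urls, web):
    """Inverted index: maps each lowercase word to the set of URLs that contain it."""
    index = defaultdict(set)
    for url in urls:
        for word in re.findall(r"[a-z0-9]+", web[url]["text"].lower()):
            index[word].add(url)
    return index


def lookup(index, query):
    """Return the URLs that contain every word of the query (no ranking applied)."""
    words = re.findall(r"[a-z0-9]+", query.lower())
    if not words:
        return set()
    results = set(index.get(words[0], set()))  # copy, so the index is not modified
    for word in words[1:]:
        results &= index.get(word, set())
    return results


crawled = crawl(["https://example.com/"], TOY_WEB)
index = build_index(crawled, TOY_WEB)
print(crawled)
print(sorted(lookup(index, "coffee espresso")))  # ['https://example.com/grinders']
```

Real crawlers do far more than this (for example, fetching pages over HTTP, respecting robots.txt and revisiting known pages to pick up updates), but the basic idea of following links and storing what was found in an index is the same.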
Read more in our detailed post on Crawling & Indexing.

Picking the results

Once the internet user submits a search query, the search engine digs into the index and pulls out the best results. The list of the results is known as a SERP (Search Engine Results Page).

In the following paragraphs, we’ll take a closer look at Google’s Search Algorithm.

Google algorithm

Google’s Search Algorithm is an umbrella term for all the individual algorithms, machine learning systems and AI technologies Google uses to rank websites. To provide the best results, they consider various factors, namely:

As with any other complex system, the Google algorithm needs to be updated and tweaked regularly.

Besides minor algorithm updates that happen on a daily basis, Google usually rolls out a couple of core algorithm updates per year. They are officially announced by Google and create a lot of buzz in the SEO community.

“Today we released the November 2024 core update. We'll add it to our ranking release history page in the near future and update when the rollout is complete. For more on core updates: https://t.co/43pVoYH8k7” (Google Search Central, @googlesearchc, November 11, 2024)

Going through a list of the most important algorithm updates (e.g. Panda, Penguin, Hummingbird, BERT…) can be a great way to get a quick overview of how Google Search and SEO have evolved over the years.

To learn more, read our detailed post on the Google algorithm.

Search Quality Raters

Besides the algorithms, Google also uses human data input. Thousands of external contractors working for Google, called Search Quality Raters, follow strict guidelines (available to the public), evaluate actual search results and rate the quality of the ranked pages.

Typical examples of pages that undergo this kind of strict evaluation are the so-called YMYL (Your Money, Your Life) pages – pages dealing with important topics that can impact someone’s happiness, health, safety or financial wellbeing.
Quality Raters don’t influence the rankings directly, but their data is used to improve the search algorithm.

Ranking factors

Of course, search engines keep the exact calculations of their algorithms secret. Nonetheless, many ranking factors are well-known.

Ranking factors are a much-discussed topic in the world of SEO. Many of them have been officially confirmed by Google, but many remain in the realm of speculation and theory.

From the practical point of view, it’s important to focus on factors that have a proven impact while also trying to keep a “good score” across all areas.

Of course, not everything people think is a ranking factor is actually used by search engines (if something correlates with higher rankings, it is not necessarily something Google uses in its algorithm). On the other hand, some confirmed ranking factors have only a very small impact on the rankings.

Cyrus Shepard from Zyppy made a nice list of Google success factors (the ones that correlate most with higher rankings). Here are the 10 critical ones:

Note: Quality of content is undeniably the most important SEO factor (notice that 5 out of 10 critical factors are related to content). To learn more about content optimization for SEO, jump to the 4th chapter.

Other important factors that may have a positive impact on your rankings:

There are many search engines, but Google has made by far the most advances in Information Retrieval, Natural Language Understanding, and Natural Language Processing. In the last 25 years, search engines have moved from pure text-based evaluation to the machine learning age.

Today, Google iterates on user intent every month and is able to detect small nuances in the true desires of searchers: content quality, product offering, design, user experience. There are really no limits.

As a result, SEO has changed from optimizing fixed criteria to working toward optimal user experience. Smart SEOs understand that they have to go beyond backlinks and content. They have to understand the needs of searchers in the context of a keyword.


Chapter 3

Keyword research

Keyword research is one of the basic SEO tasks. In this chapter, you will learn how to find your niche and how to find profitable keywords you can rank for.


Keyword research should be the very first step on your SEO journey. It is especially important in two common scenarios:

- Getting to know your niche – when starting a new website, keyword research can provide a great overview of what sub-topics are interesting for people in your niche or industry

- Finding new content ideas – keyword research can help you find the most profitable keyword opportunities and plan your content strategy

Where to find keywords?

There are various ways to find keywords.

Your first task is to come up with the seed keywords – phrases you’ll use as the stepping stone to finding more keyword ideas. For example, if you run a coffee blog, simple phrases such as “coffee beans”, “coffee machines” or “espresso” will work great.

The classic ways to look for keywords:

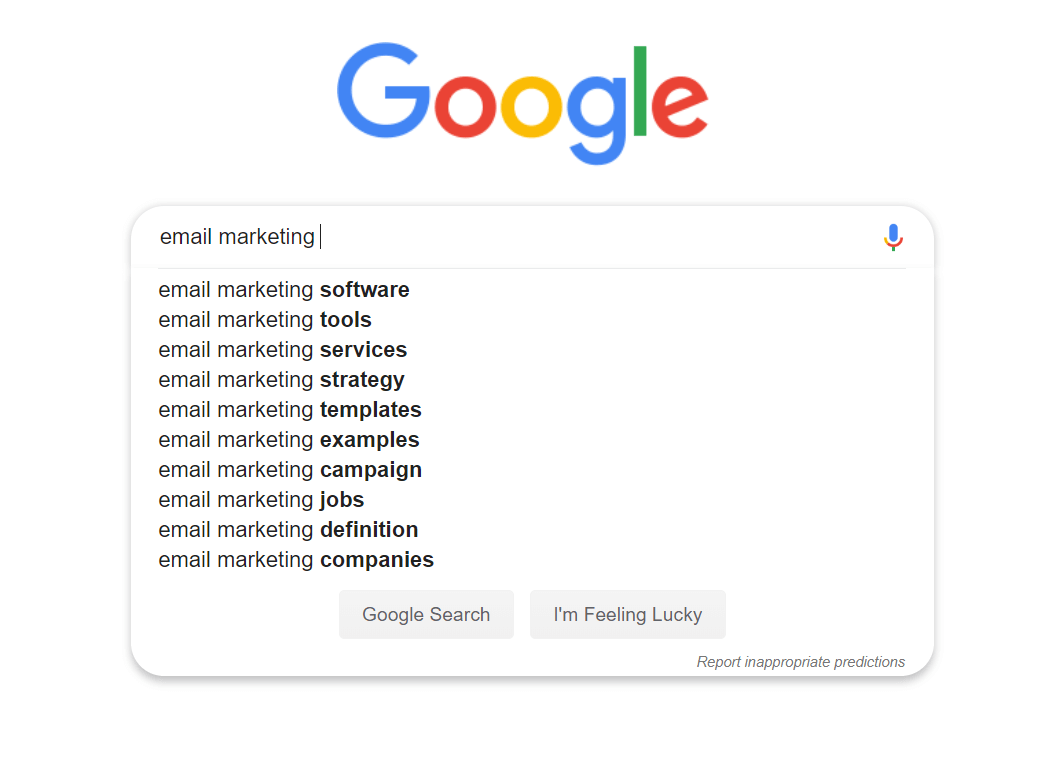

Google suggestions

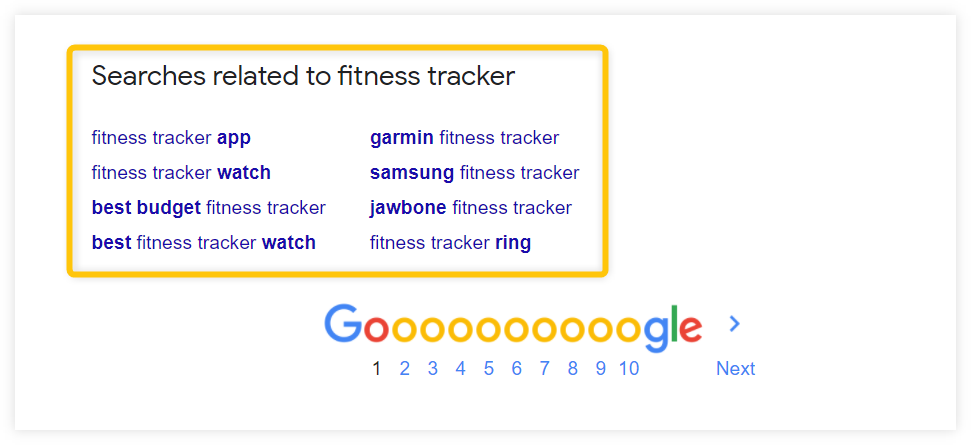

Google offers many keyword suggestions directly in the SERP. Features such as Google Autocomplete, People Also Ask or Related Searches can be a great source of keyword ideas.

With the autocomplete feature, you just need to write your seed keyword into the Google search and the suggestions will appear automatically.

You can combine your seed keyword with different letters from the alphabet to find more autocomplete ideas (e.g. email marketing a, email marketing b,…)
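If you want to work through the alphabet systematically, a few lines of Python can generate the list of queries to type into the search box one by one. This is only a small helper sketch: it builds the query strings and does not fetch any suggestions from Google.

```python
import string


def alphabet_queries(seed_keyword):
    """Seed keyword followed by each letter a-z, to type into the search box one by one."""
    return [f"{seed_keyword} {letter}" for letter in string.ascii_lowercase]


queries = alphabet_queries("email marketing")
print(queries[:3])  # ['email marketing a', 'email marketing b', 'email marketing c']
print(len(queries))  # 26
```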

Here’s another example of keyword ideas that can be found on the Google results page:

The suggestions are based on real search queries used by people all over the world.

Note: Besides Google, there are many other platforms that can help you find new keyword ideas. Focus on the ones people in your niche use to ask questions, communicate and share ideas. Some examples: Reddit, Quora, YouTube, forums, Facebook groups…

Keyword tools

There are many free keyword tools that can give you hundreds of keyword ideas based on a single seed keyword. The problem is: they are very limited when it comes to other features.

So if you make money with your website in any way, a quality paid keyword tool is a great investment that will pay off sooner or later.

Besides the keyword suggestions, professional tools offer other useful SEO metrics and insights to evaluate the keywords and pick the best ones. So they can save you a lot of time and give you a competitive advantage.

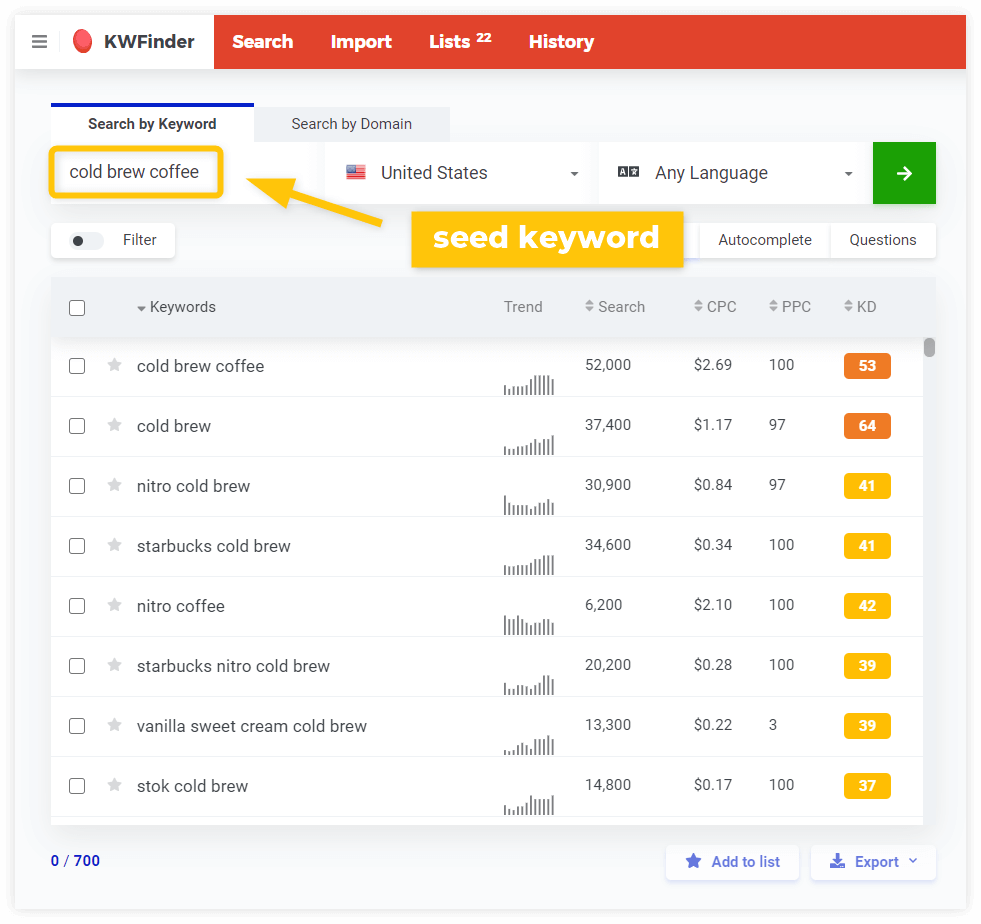

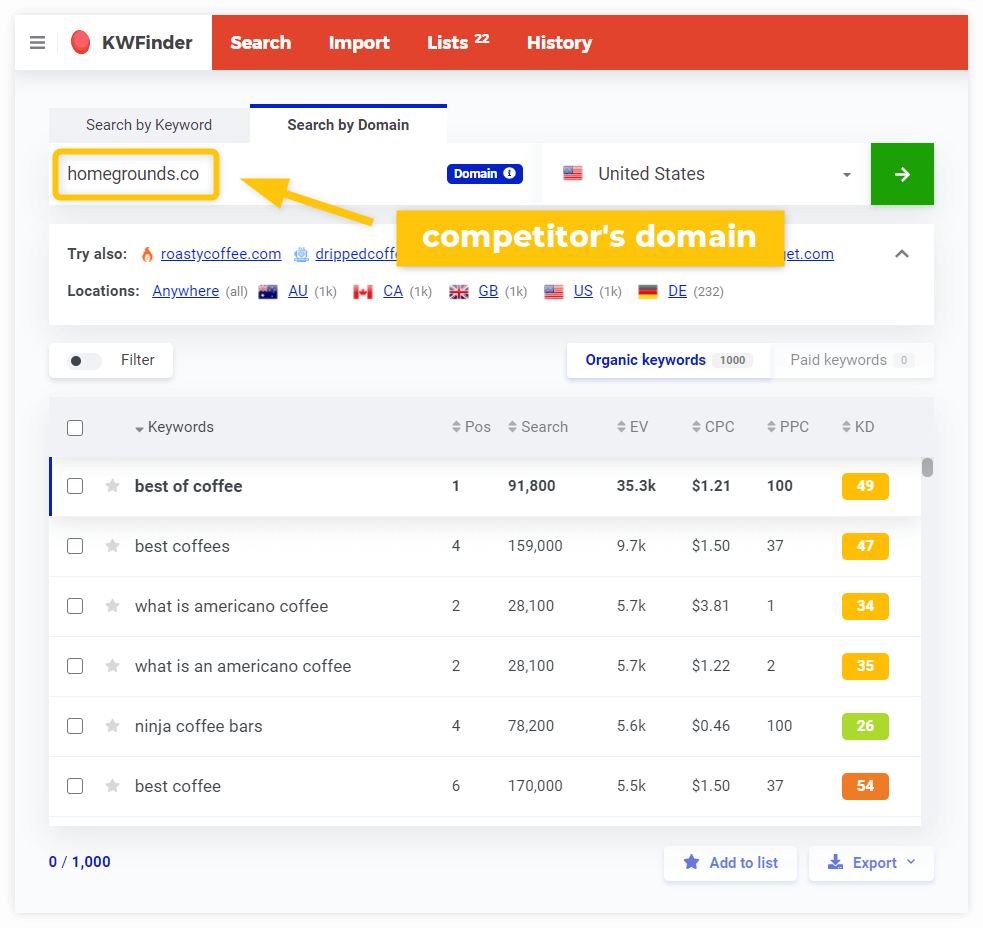

There are 3 ways to start keyword research with a keyword tool:

- seed keyword

- competitor’s domain/URL research

- keyword gap analysis

Here’s what a list of keyword suggestions looks like in KWFinder:

You can also look for the keywords your competitors rank for by simply typing their domain or URL:

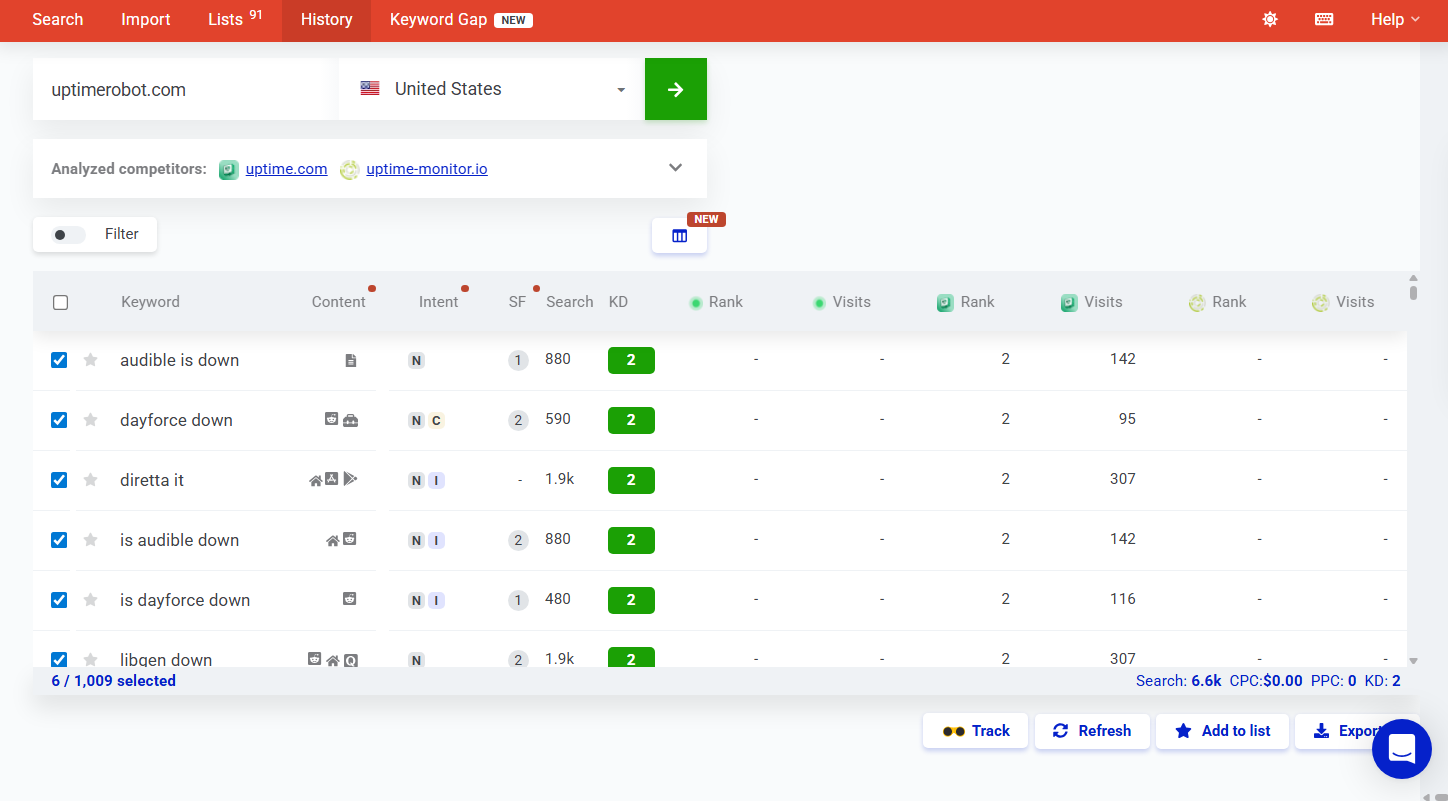

In addition, you can use a keyword gap analysis tool to identify the keywords your competitors rank for but your website does not.

Besides keyword suggestions, a professional keyword tool also calculates the difficulty of ranking for the keywords and helps you analyze the SERP.

Keyword metrics

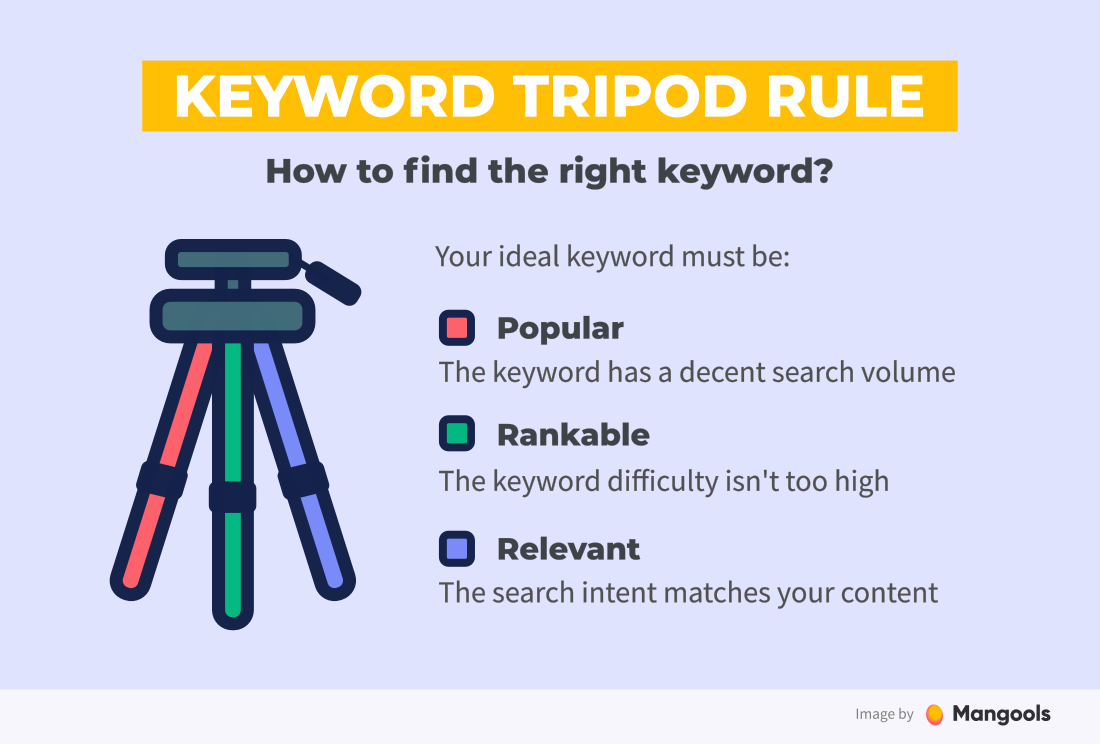

Your goal is to find relevant keywords with high search volumes and low keyword difficulty – an ideal combination of the three most important factors of keyword research.

We call this principle The Keyword Tripod Rule and the three factors represent the three legs.

As soon as you take away one of the legs, the tripod will collapse.
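The Keyword Tripod Rule can be expressed as a simple filter over a keyword list exported from your keyword tool. This is a minimal sketch: the `Keyword` fields, the thresholds (`min_volume=100`, `max_difficulty=30`) and the coffee-blog numbers are all hypothetical, and the relevance flag stands for your own judgment after checking the SERP.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    search_volume: int  # average monthly searches, as reported by your keyword tool
    difficulty: int     # 0-100 keyword difficulty score from the same tool
    relevant: bool      # your own judgment after checking the SERP and search intent


def tripod_filter(keywords, min_volume=100, max_difficulty=30):
    """Keep only keywords that stand on all three legs, best opportunities first."""
    shortlist = [
        kw for kw in keywords
        if kw.relevant and kw.search_volume >= min_volume and kw.difficulty <= max_difficulty
    ]
    # Prefer higher search volume; break ties with lower difficulty.
    return sorted(shortlist, key=lambda kw: (-kw.search_volume, kw.difficulty))


candidates = [
    Keyword("coffee beans", 90_000, 72, True),             # too difficult for a new site
    Keyword("how to store coffee beans", 2_400, 24, True),
    Keyword("espresso vs coffee", 1_900, 18, True),
    Keyword("coffee bean stock price", 1_300, 20, False),  # not relevant to a coffee blog
    Keyword("espresso tamper tips", 50, 10, True),         # too little search volume
]
print([kw.phrase for kw in tripod_filter(candidates)])
# ['how to store coffee beans', 'espresso vs coffee']
```

The right thresholds depend on your niche and your site's authority, so treat them as knobs to tune rather than fixed values.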

Search volume

In the past, content creators did keyword research only to find the keywords with high search volumes.

They stuffed them into content to trick the search engine algorithms and ensure high rankings in organic search. Since then, keyword research has become a lot more complex.

Long-tail keywords vs. search volumes

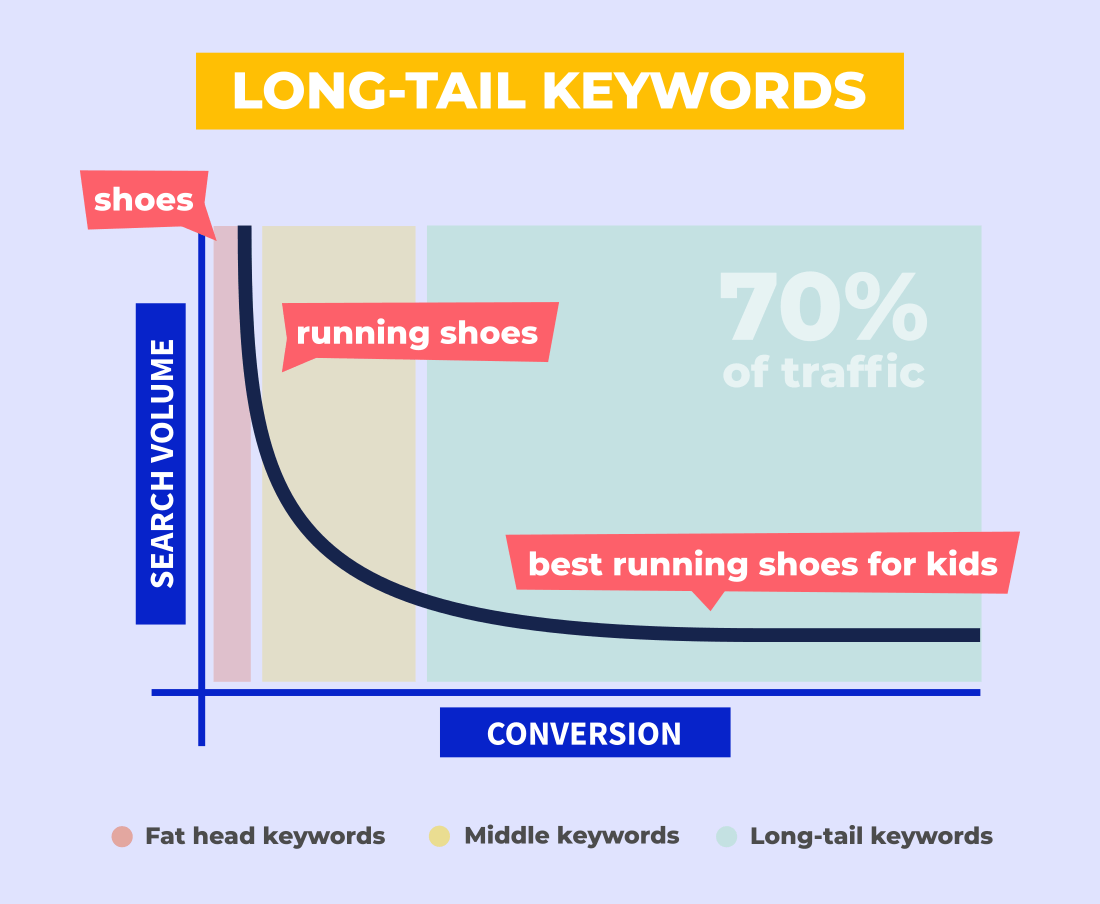

Many keyword research guides recommend focusing on the so-called long-tail keywords – keywords that are more specific and usually consist of more words.

The reason is simple:

Long-tail keywords tend to have lower difficulty and higher conversion rates. It’s because the query is more specific, so there’s a higher chance the user is further down the buyer’s journey.

Not to mention that there are hundreds of them – one commonly cited estimate is that about 70% of all search traffic comes from long-tail keywords.

Of course, the downside is a lower search volume. So you need to consider all the aspects and find the balance between the effort and the potential benefits.

Besides, ranking only for high-volume keywords is not always possible.

The truth is that as a new website, you simply won’t be able to rank for big keywords. That doesn’t mean you shouldn’t try once you establish yourself in the market and gain some authority.

It’s all about evaluating your actual chances. The metric called keyword difficulty can help you with that.

Keyword difficulty

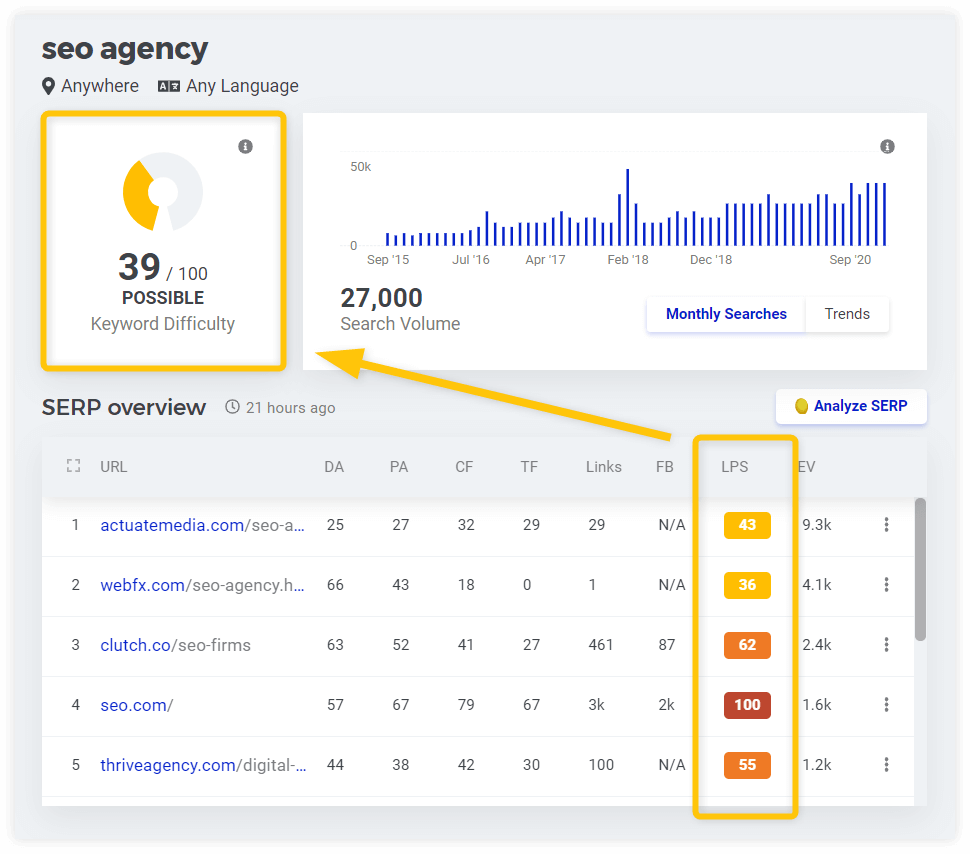

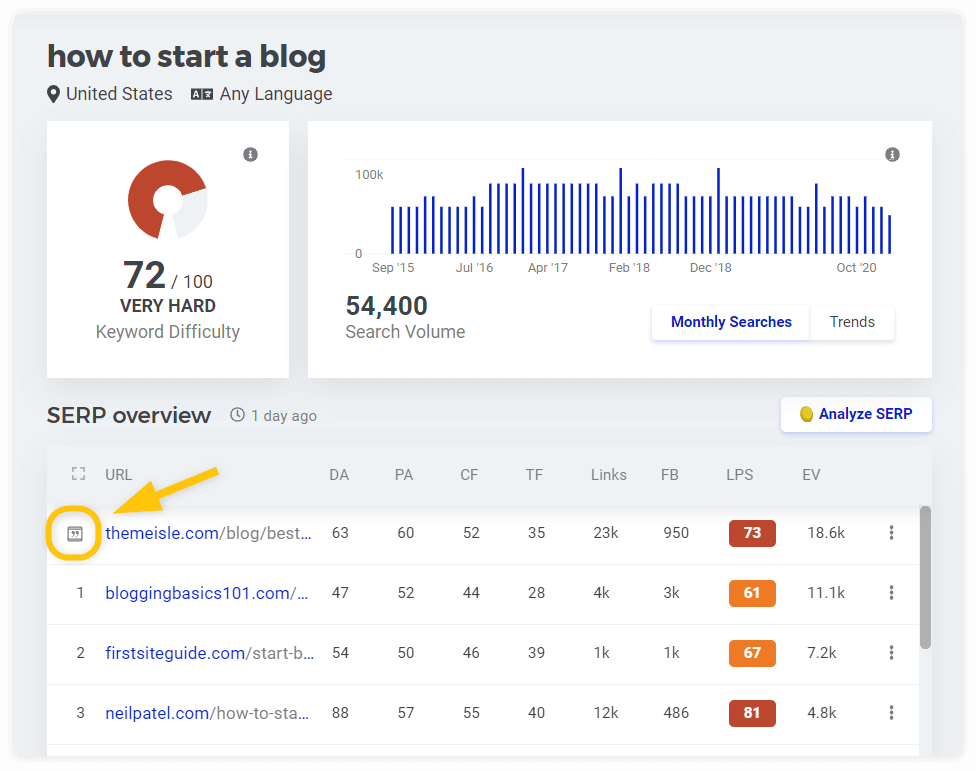

Once you find keywords you want to rank for, you’ll need to find out how hard it will be by evaluating the competition. It is usually expressed with the metric called keyword difficulty.

In most tools, the keyword difficulty value is indicated on a scale from 0 to 100. The higher the score is, the harder it is to rank on the 1st SERP for the keyword.

It is based on the authority of pages ranking for the given keyword.

By keeping an eye on keyword difficulty:

- You’ll get a great overview of the “big” keywords and the “big” players in your niche

- You’ll be able to identify keywords that you have an actual chance to rank for

- You’ll be able to save a lot of time by focusing on keywords that can bring you results even if you don’t have much authority yet

Note: The keyword difficulty values may vary across tools – you can see a score of 30 in one tool and 50 in another for exactly the same keyword.

That’s because the calculations are based on slightly different metrics and algorithms. The important thing is to compare the results within one tool.
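To see why two tools can disagree, here is a sketch of two hypothetical difficulty formulas applied to the same SERP. Neither is the formula of any real tool: tool A simply averages the authority scores (0 to 100) of the ten ranking pages, while tool B gives higher positions more weight. The authority numbers are made up for illustration.

```python
from statistics import mean

# Hypothetical authority scores (0-100) of the 10 pages on the first SERP, in ranking order.
top10_authority = [78, 65, 60, 52, 47, 40, 38, 35, 30, 25]


def kd_tool_a(scores):
    """Hypothetical tool A: the plain average of the top-10 authority scores."""
    return round(mean(scores))


def kd_tool_b(scores):
    """Hypothetical tool B: a weighted average that counts higher positions more
    (weight 10 for position 1, down to weight 1 for position 10)."""
    weights = range(len(scores), 0, -1)
    return round(sum(w * s for w, s in zip(weights, scores)) / sum(weights))


print(kd_tool_a(top10_authority))  # 47
print(kd_tool_b(top10_authority))  # 55
```

Same keyword, same search results, two different scores. That is why it makes sense to compare difficulty values only within a single tool.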

Keyword relevance

Last but not least, your keyword must be relevant. The easiest way to check this is to do a proper SERP analysis to find out:

- Whether you are able to compete with websites in the 1st SERP (see the previous part about keyword difficulty)

- What is the search intent behind the keywords you want to optimize for

By looking at the SERP, you can identify the search intent behind the query and whether it matches your content.

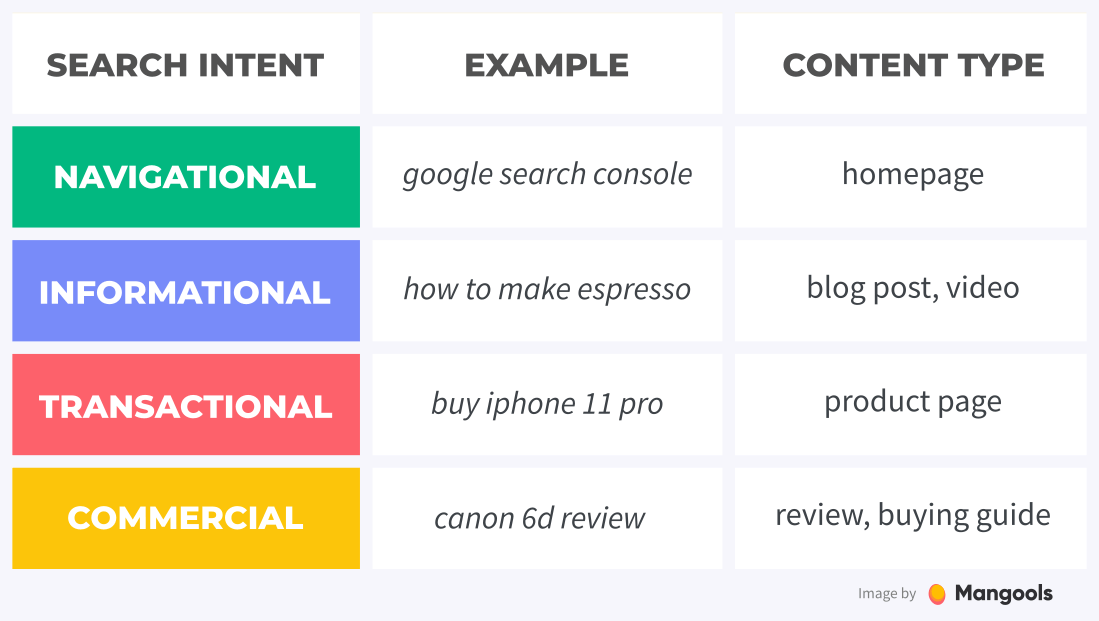

There are 4 different search intent types:

- Navigational – search for a specific website/brand

- Informational – search for general information

- Transactional – the user wants to buy something online

- Commercial – the user does the research before purchase

Look at this table to see some examples:

Let’s say you run an e-commerce store with fitness equipment. You want to optimize your squat racks product page and you find the keyword “best squat rack”. It has a solid search volume and the difficulty is not too high.

However, if you look at the search results, you’ll notice that all the pages ranking for “best squat rack” are reviews and buying guides, not product pages. In other words, Google considers it a commercial, not a transactional keyword.

A quick look at the SERP will tell you that.

Always keep this in mind so you won’t end up optimizing for the wrong keywords.
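The “look at the SERP” check can be written down as a small majority vote. This is a minimal sketch under clear assumptions: you note the type of each first-page result by hand, the mapping from page type to intent in `PAGE_TYPE_TO_INTENT` is our own simplification, and the “best squat rack” results below are hypothetical.

```python
from collections import Counter

# Assumed mapping: the kind of page that ranks -> the intent it usually serves.
PAGE_TYPE_TO_INTENT = {
    "homepage": "navigational",
    "blog_post": "informational",
    "how_to_guide": "informational",
    "review": "commercial",
    "buying_guide": "commercial",
    "comparison": "commercial",
    "product_page": "transactional",
    "category_page": "transactional",
}


def dominant_intent(serp_page_types):
    """Return (intent, share) for the most common intent among the ranking pages."""
    if not serp_page_types:
        raise ValueError("record at least one ranking page")
    intents = Counter(PAGE_TYPE_TO_INTENT.get(t, "unknown") for t in serp_page_types)
    intent, count = intents.most_common(1)[0]
    return intent, count / len(serp_page_types)


def fits_serp(your_page_type, serp_page_types, min_share=0.5):
    """True if your page type serves the intent that dominates the SERP."""
    intent, share = dominant_intent(serp_page_types)
    return share >= min_share and PAGE_TYPE_TO_INTENT.get(your_page_type) == intent


# Hypothetical first-page results noted by hand for "best squat rack".
best_squat_rack = ["buying_guide", "review", "buying_guide", "review", "comparison",
                   "buying_guide", "product_page", "review", "buying_guide", "review"]
print(dominant_intent(best_squat_rack))            # ('commercial', 0.9)
print(fits_serp("product_page", best_squat_rack))  # False
```

In this example, a product page would be swimming against the SERP, while a buying guide would fit the dominant commercial intent.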

One of the biggest mistakes people make with keyword research is that they only look at the numbers they see in the keyword tool.

They rarely consider intent when in reality, it is even more important than search volume.

Why?

First, Google knows what kind of content people want to see for each query, and if you check the top results, you will often see similar pages.

So if your page doesn’t fall into the categories Google wants to show, your chances of ranking are extremely low, even with strong link metrics.

Second, even if you do get visitors from Google, tiny changes in the query mean very different things to people: some may be interested in buying your stuff, while others really couldn’t care less.

For example:

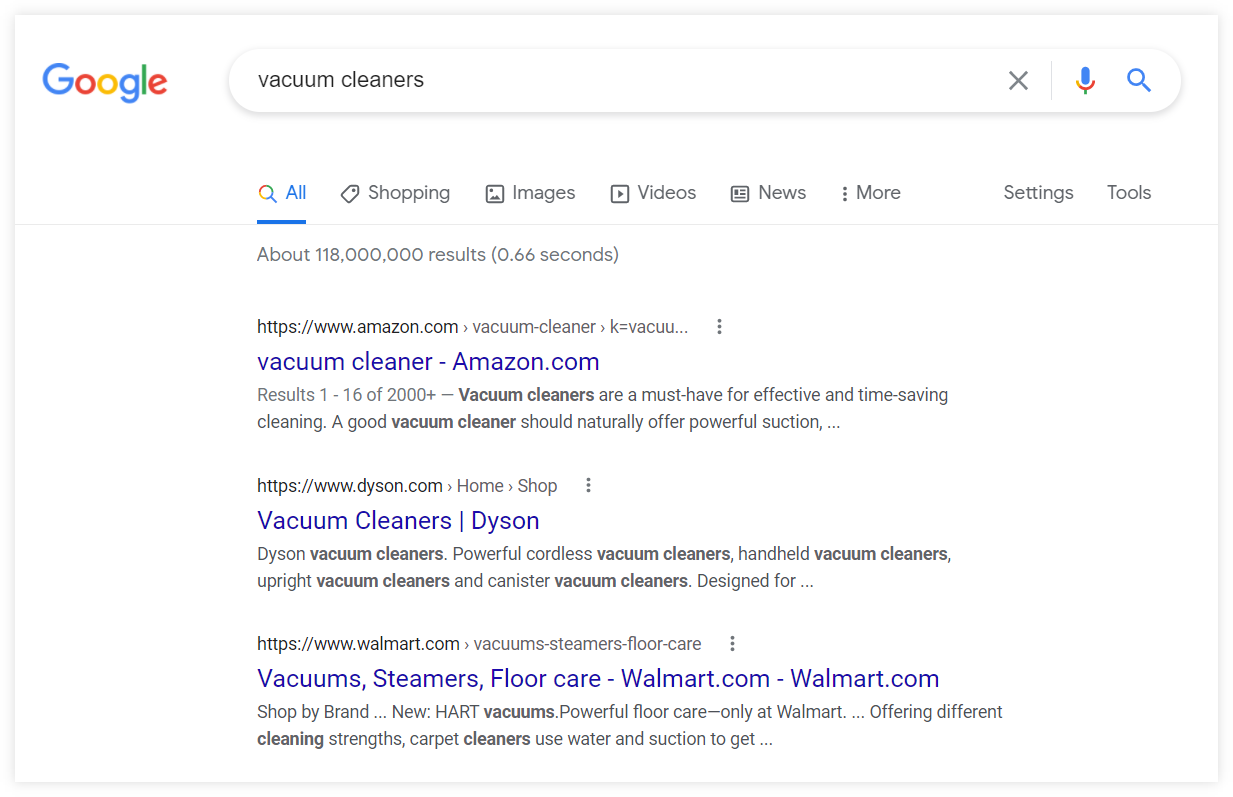

If you check the keyword “vacuum cleaners”, you’ll see that a lot of people are searching for it (223,000 average monthly search volume).

But people are more likely to look for brand results and e-commerce pages and this is why Google returns Amazon, Dyson and Walmart results.

And you’re not very likely to sell them anything.

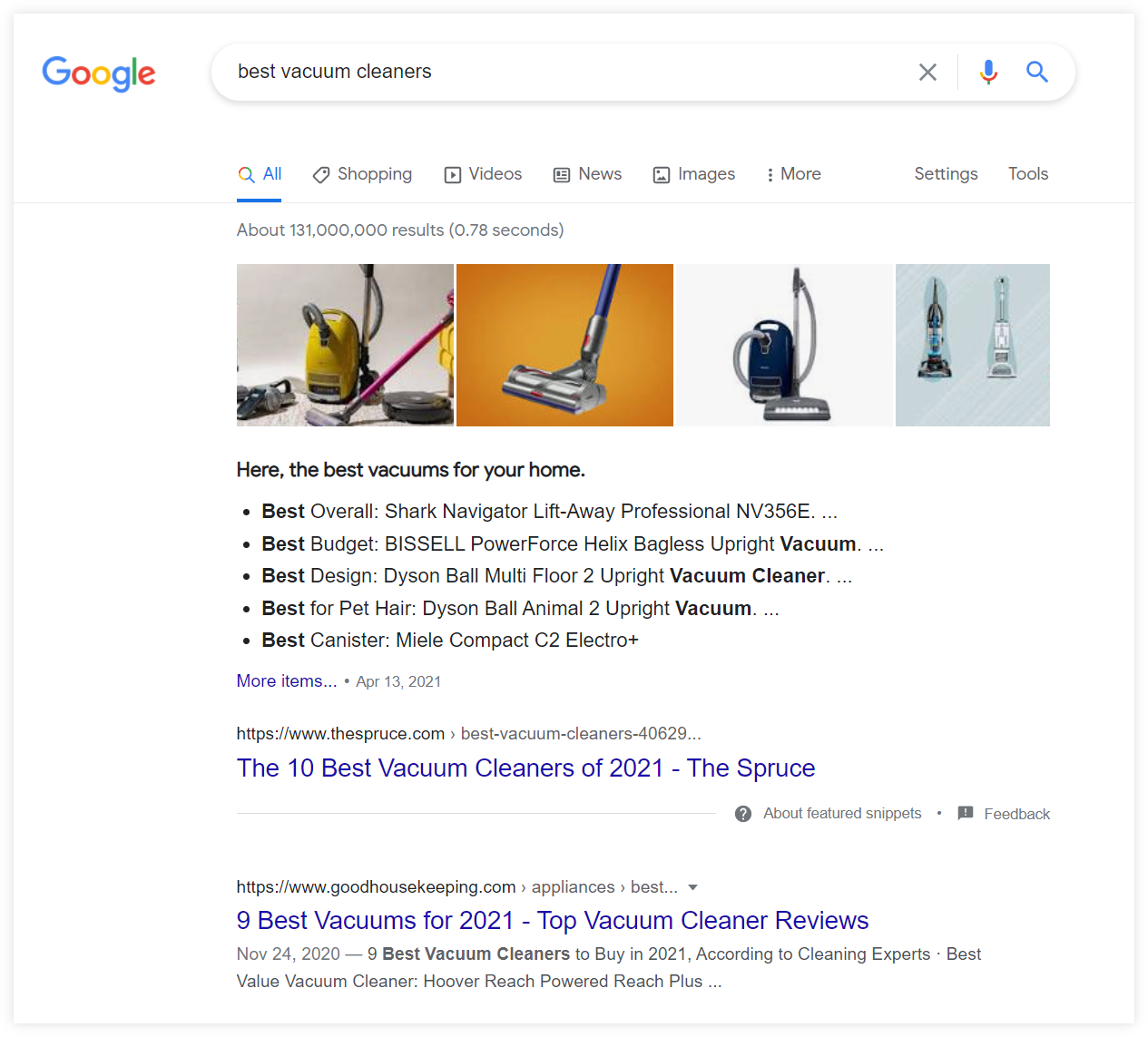

The query “best vacuum cleaners”, on the other hand, has a much lower search volume (13,000 average monthly search volume). But it is much more commercial in nature and will likely generate more revenue. That is also why Google shows buying guides instead of Walmart product pages.

So don’t just focus on search volume; intent is the most important metric.

Tip: If you want to dive deeper into keyword research, you should read our ultimate guide to keyword research for SEO. There is a short quiz at the end to test your keyword research knowledge 😉

Chapter 4

Content optimization

A lot of marketers used to think that content & SEO are separate players. They couldn’t be more wrong. Let’s find out how you can benefit from their synergy.


They say that content is king.

As cliché as it may sound, there’s a lot of truth to it. SEO and content are interconnected.

(In other words: there’s no point in doing SEO if your content is garbage).

That’s why we decided to share some practical tips on creating a successful content strategy in this SEO guide.

Topic identification & organization

Your content strategy should be based on a proper understanding of your niche and the needs of your audience.

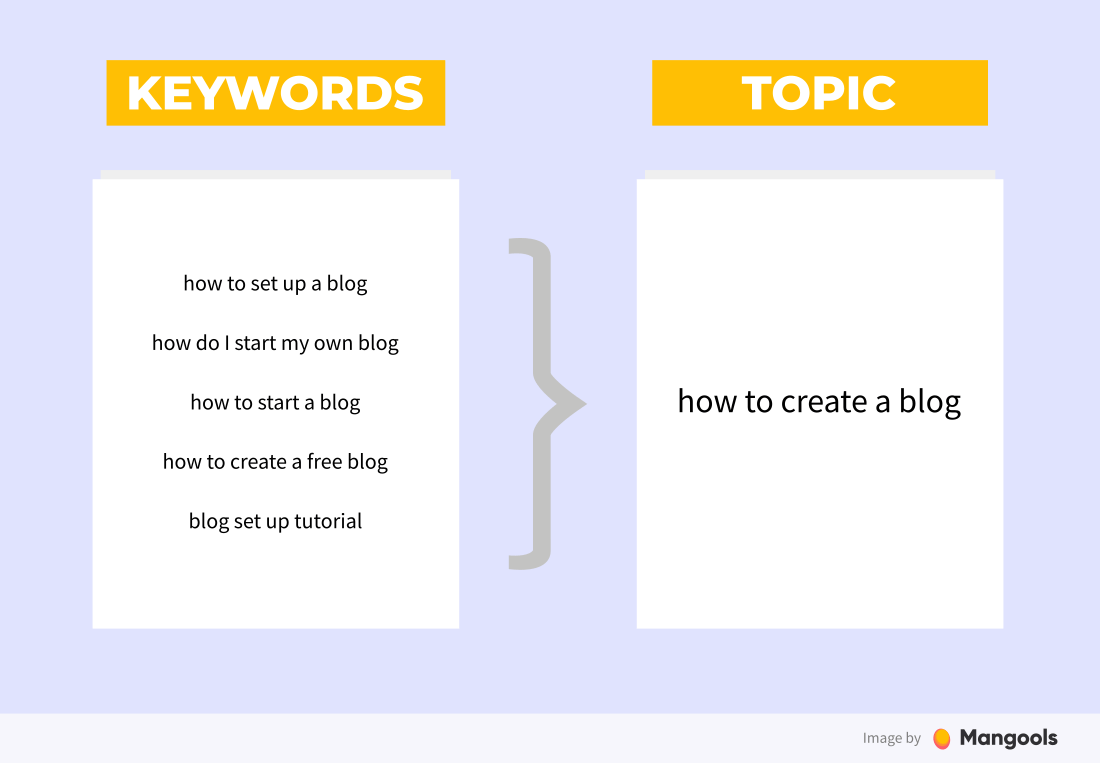

We’ve covered the first step in this process – finding the right keywords – in the previous chapter. The second step is identifying the topics.

Now, many times the keyword is also a standalone topic. But it’s not always like that.

Look at the following example:

Let’s say you run an online marketing blog and you find several keywords like:

- how to start a blog

- how to create a blog

- how do you start a blog

- how to start your own blog

You may notice that although the words differ, they are all about the same thing – creating a blog.

It would make no sense to create a separate post for each of them. Instead, we group them into one topic – how to create a blog – and cover it in a comprehensive guide that could potentially rank for each of these keywords.

(If you do a quick SERP analysis you’ll notice that the search results are almost identical for all of them).
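This SERP comparison can also be done in bulk: keywords whose top-10 results share many URLs usually belong to the same topic. The sketch below is a simple greedy grouping under stated assumptions: you already have the top-10 URLs for each keyword (placeholder URLs `u1`, `u2`, … here), the threshold of 4 shared URLs is arbitrary, and “how to monetize a blog” is a hypothetical keyword added to show a separate topic.

```python
def shared_urls(urls_a, urls_b):
    """How many URLs two keywords have in common in their top results."""
    return len(set(urls_a) & set(urls_b))


def group_by_serp_overlap(serps, min_shared=4):
    """Greedy grouping: a keyword joins the first group whose first keyword shares
    at least `min_shared` top-10 URLs with it; otherwise it starts a new group."""
    groups = []
    for keyword, urls in serps.items():
        for group in groups:
            if shared_urls(serps[group[0]], urls) >= min_shared:
                group.append(keyword)
                break
        else:  # no existing group matched
            groups.append([keyword])
    return groups


# Hypothetical top-10 results (placeholder URLs) for each keyword.
serps = {
    "how to start a blog": ["u1", "u2", "u3", "u4", "u5", "u6", "u7", "u8", "u9", "u10"],
    "how to create a blog": ["u1", "u2", "u3", "u5", "u4", "u6", "u8", "u7", "u11", "u12"],
    "how do you start a blog": ["u2", "u1", "u3", "u4", "u6", "u5", "u9", "u13", "u14", "u15"],
    "how to start your own blog": ["u1", "u3", "u2", "u4", "u5", "u7", "u16", "u17", "u18", "u19"],
    "how to monetize a blog": ["m1", "m2", "m3", "m4", "m5", "m6", "m7", "m8", "u1", "u2"],
}
for group in group_by_serp_overlap(serps):
    print(group)
```

The four “start a blog” variants end up in one group (one topic, one page), while the monetization keyword starts a group of its own.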

Once you’ve established the topic, you can go back to the level of keywords and select one that will represent your topic the best – the focus keyword (also called the target keyword).

The basic principle of content strategy for SEO is simple:

1 page = 1 topic = 1 focus keyword

To select the focus keyword, you should follow the keyword research principles we covered extensively in the previous chapter – consider its search volume, difficulty and relevance.

How to organize the topics?

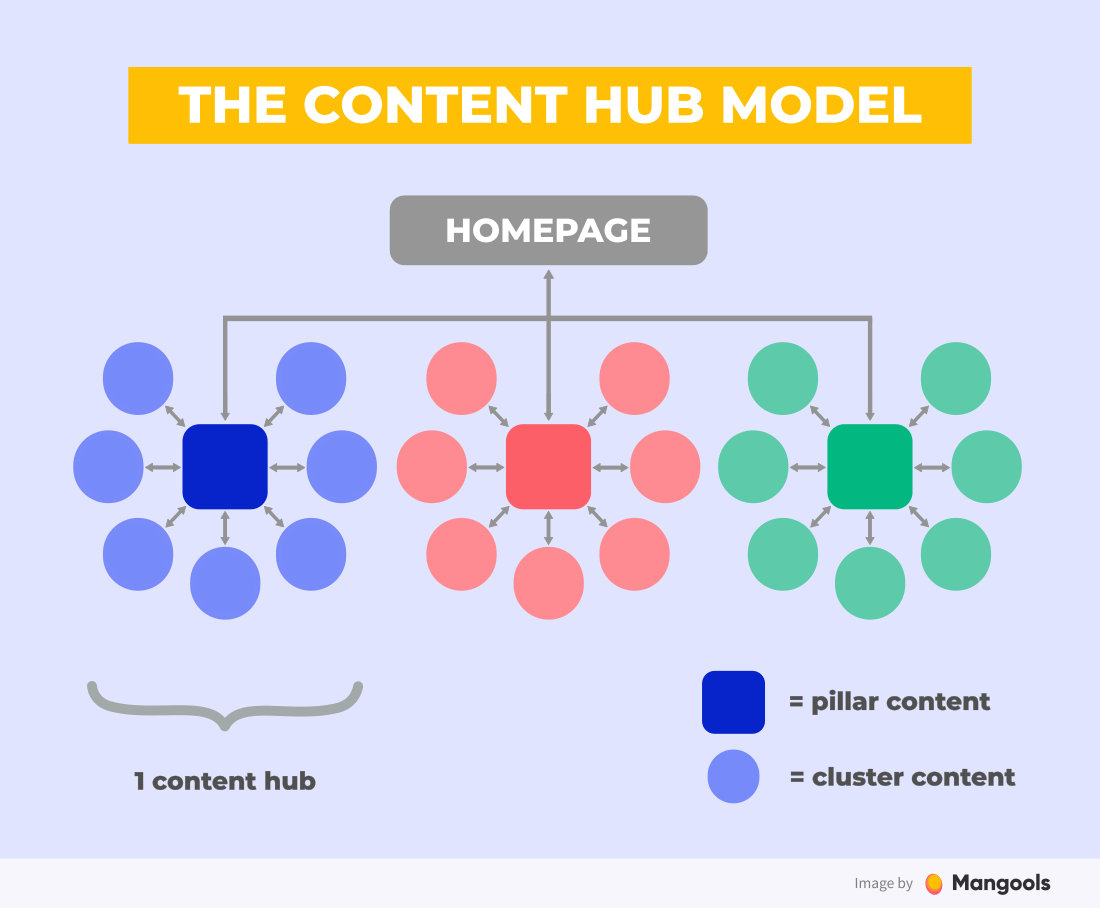

A great way to plan and organize your topics is to use the so-called content hubs.

A content hub is a collection of pages that are all related to a certain topic.

The pages are interlinked and provide a general overview of the topic as well as deeper insights into the sub-topics.

There are two types of content to achieve that:

- Pillar content – a pillar page that provides a general overview of the broad topic, usually targeting a broad keyword (e.g. jogging)

- Cluster content – the supporting pages that focus on subtopics within the theme in detail (e.g. jogging benefits, jogging shoes, jogging mistakes)

This strategy has multiple benefits:

- You provide more value for your readers by covering each topic in detail – they don’t have to visit other websites to learn all about a certain topic

- You are forced to plan and structure your content and cover all the important keywords from your niche systematically

- You increase your authority in certain topics by interlinking pages that are closely topically related
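One common way to interlink a content hub is to link the pillar page to every cluster page and every cluster page back to the pillar, optionally linking closely related cluster pages to each other as well. The sketch below plans those links; the `ContentHub` class and the jogging URLs are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ContentHub:
    pillar: str                                         # URL of the pillar page (broad topic)
    clusters: list[str] = field(default_factory=list)  # URLs of the cluster pages (subtopics)

    def internal_links(self, link_siblings=False):
        """Return (from_url, to_url) pairs for the hub's internal links."""
        links = []
        for cluster in self.clusters:
            links.append((self.pillar, cluster))  # pillar -> each subtopic
            links.append((cluster, self.pillar))  # each subtopic -> back to the pillar
        if link_siblings:
            # Optionally connect the cluster pages with each other.
            links += [(a, b) for a in self.clusters for b in self.clusters if a != b]
        return links


jogging = ContentHub("/jogging", ["/jogging-benefits", "/jogging-shoes", "/jogging-mistakes"])
for source, target in jogging.internal_links():
    print(f"{source} -> {target}")
```

Whether to link every cluster page to every other one is a judgment call; in practice, link siblings only where the topics are genuinely related.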

Target various intent types

When selecting the topics for your content, remember that there are various types of search intent (see the previous chapter) – informational, navigational, transactional and commercial.

You don’t have to “sell” in each post. By focusing on various search intent types (including the informational one) you’ll be able to target various stages of the buyer’s journey.

As a side benefit:

- You’ll establish yourself as an authority in the field.

- You’ll build trust, brand awareness and potentially increase the overall share of search for your website.

- You’ll reach new users (that may convert later).

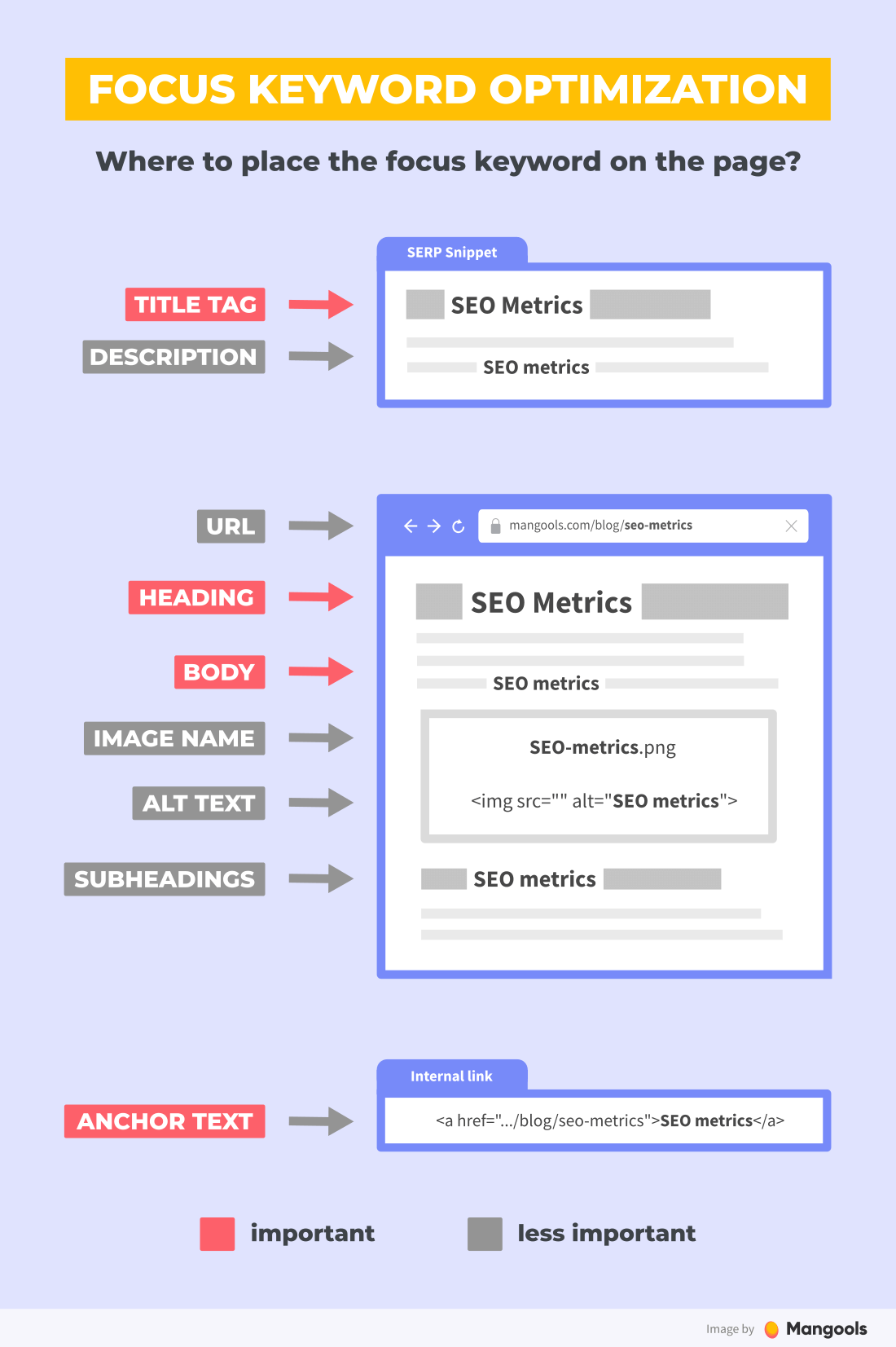

Focus keyword optimization

Once you have a focus keyword, you should use it to optimize your page for the given topic.

Here’s a list of all the possible elements where you can use the focus keyword:

- Title tag and meta description

- URL

- Heading and subheadings

- Body text

- Image metadata

- Anchor text of the internal links
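To check quickly where your focus keyword already appears, you can parse the page's HTML with Python's built-in `html.parser`. This is a minimal sketch that covers only some of the elements above (title, meta description, H1, H2/H3 subheadings, image alt texts and the URL). The sample page and URL are hypothetical, and a `False` is not automatically a problem: the goal is to spot obvious gaps, not to tick every box.

```python
from html.parser import HTMLParser


class OnPageElements(HTMLParser):
    """Collects text from a few on-page elements where a focus keyword can appear."""

    TRACKED = {"title": "title", "h1": "h1", "h2": "subheadings", "h3": "subheadings"}

    def __init__(self):
        super().__init__()
        self.elements = {name: "" for name in ("title", "meta description", "h1", "subheadings", "alt texts")}
        self._current = None  # element whose text is currently being collected

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in self.TRACKED:
            self._current = self.TRACKED[tag]
            self.elements[self._current] += " "  # separate repeated elements, e.g. several <h2>s
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.elements["meta description"] += " " + (attrs.get("content") or "")
        elif tag == "img":
            self.elements["alt texts"] += " " + (attrs.get("alt") or "")

    def handle_endtag(self, tag):
        if tag in self.TRACKED:
            self._current = None

    def handle_data(self, data):
        if self._current is not None:
            self.elements[self._current] += data


def focus_keyword_report(html, url, focus_keyword):
    """Return {element: True/False} showing where the focus keyword appears (case-insensitive)."""
    parser = OnPageElements()
    parser.feed(html)
    parser.close()
    keyword = focus_keyword.lower()
    report = {name: keyword in text.lower() for name, text in parser.elements.items()}
    report["url"] = keyword.replace(" ", "-") in url.lower()  # URL words are usually hyphenated
    return report


page = """<html><head><title>SEO Strategy: 7 Steps That Work</title>
<meta name="description" content="A practical 7-step SEO strategy for beginners.">
</head><body><h1>7-step SEO strategy</h1><h2>Step 1: Research keywords</h2>
<img src="funnel.png" alt="SEO strategy funnel"></body></html>"""

print(focus_keyword_report(page, "https://example.com/seo-strategy/", "SEO strategy"))
```

Here the keyword shows up everywhere except the subheading, which is perfectly fine: subheadings should use it only where it fits naturally.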

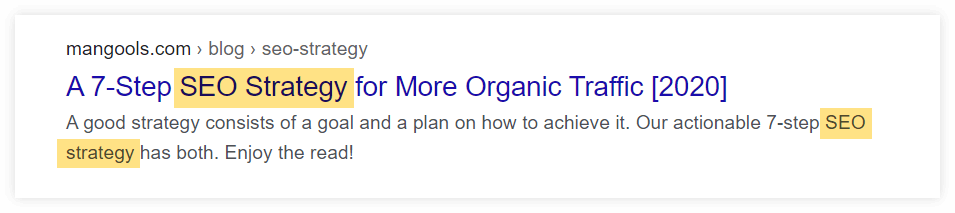

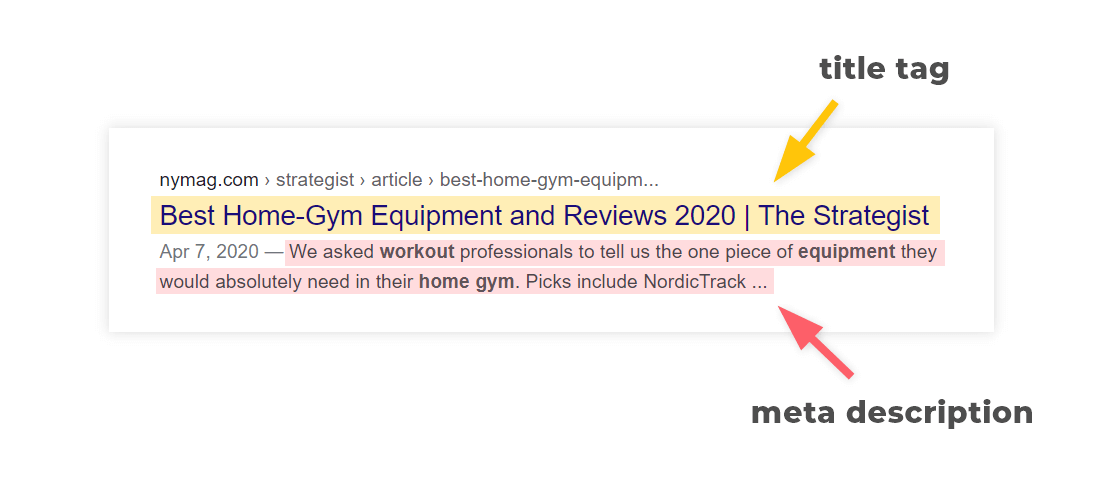

Title tag and meta description

Putting your focus keyword in the title tag (and, to a lesser extent, in the meta description) is very important. Ideally, place it as close to the beginning as possible.

If you write about a certain topic, it’s only natural that the target keyword appears in the on-page elements that summarize the content of the page.

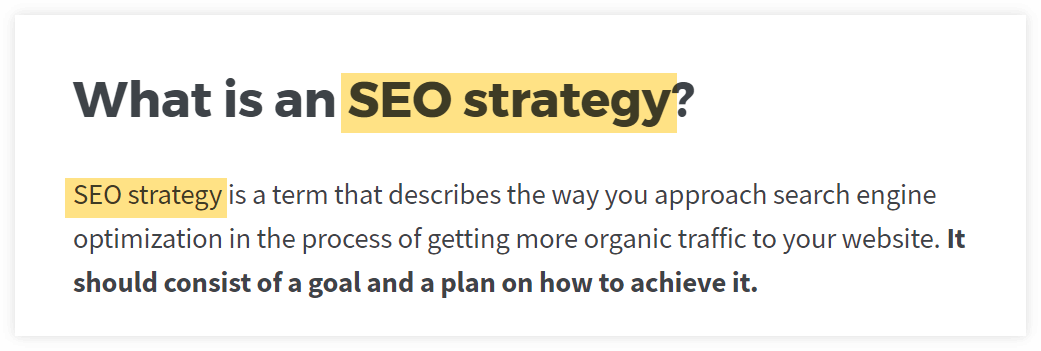

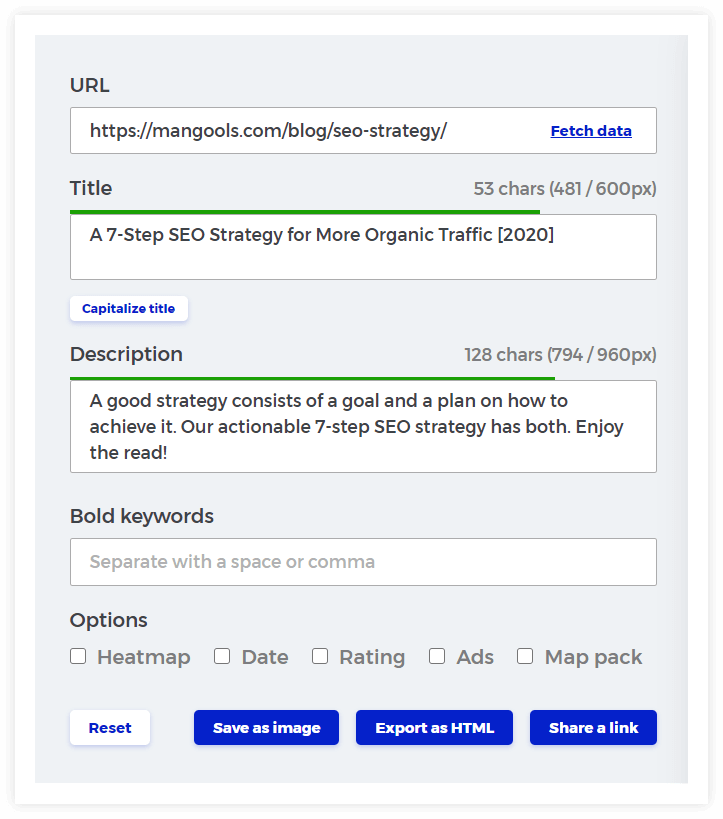

Here’s an example of a SERP snippet for our post on “7-step SEO strategy” with the focus keyword SEO strategy.

You’ll find more about title tag and meta description optimization in the next chapter.

URL

Your URLs should be short and easy to read. It’s not the most important SEO factor, but it can help.

One of the benefits: if your URL contains the focus keyword and someone links to your page using the so-called “naked URL” (the bare URL as the anchor text), the backlink will include your focus keyword.
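If you build URLs from your focus keyword, a small helper can turn it into a short, readable slug. This is a minimal sketch using only Python's standard library; the `slugify` name and the example phrases are our own, and many CMSs already do something similar for you.

```python
import re
import unicodedata


def slugify(text):
    """Turn a focus keyword or title into a short, readable URL slug."""
    # Decompose accented letters (é -> e + accent) and drop the non-ASCII accent marks.
    ascii_text = unicodedata.normalize("NFKD", text).encode("ascii", "ignore").decode("ascii")
    # Keep runs of lowercase letters and digits, joined with hyphens.
    return "-".join(re.findall(r"[a-z0-9]+", ascii_text.lower()))


print(slugify("7-Step SEO Strategy"))           # 7-step-seo-strategy
print(slugify("Café Crème: Espresso Basics!"))  # cafe-creme-espresso-basics
```

For example, a page targeting “SEO strategy” could live at a URL like `https://example.com/` + `slugify("SEO strategy")`, i.e. `https://example.com/seo-strategy` (the domain is a placeholder).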

Headings and body text

It is best practice to use your focus keyword in the H1 heading of the page. If appropriate, it may be used in some of the subheadings.

Finally, it should appear in the body text a couple of times.

Always remember that there’s no such thing as the ideal number of keyword appearances on a page (a.k.a. keyword density).

You can actually do more harm than good by trying to use the keyword a certain number of times in your text.

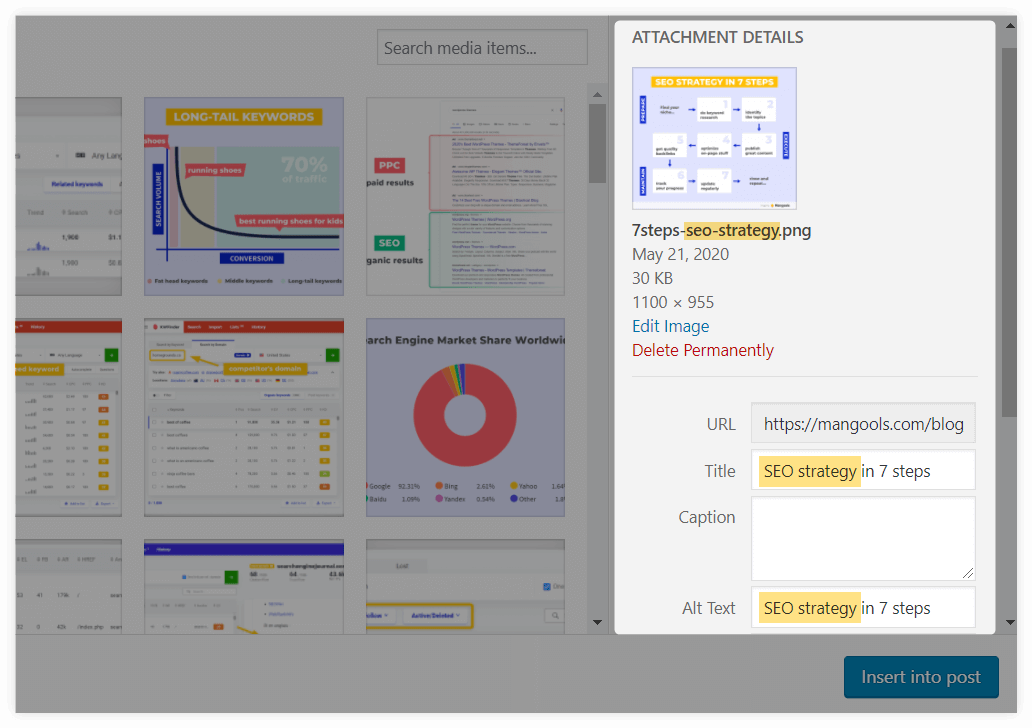

Image metadata

You can insert your focus keyword into various image metadata, namely:

- image filename

- image title

- image caption

- image alt text

The alt text is the most important from the SEO point of view – it describes the image for visually impaired visitors as well as crawlers (that can’t “see” your image either).

That does not mean you have to put your focus keyword into all these places.

If your focus keyword is “outdoor sports” and you feature an image of a man climbing a mountain, you don’t have to use the alt text “a man performing an outdoor sport”.
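Since the alt text matters most, it is worth finding images that have none. The sketch below uses Python's built-in `html.parser` to list the `src` of every `<img>` whose `alt` attribute is missing or empty; the sample HTML is hypothetical. Keep in mind that purely decorative images may intentionally use an empty `alt=""`, so treat the output as a list to review rather than a list of errors.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Collects the src of every <img> whose alt attribute is missing or empty."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attrs = dict(attrs)
        if not (attrs.get("alt") or "").strip():
            self.missing_alt.append(attrs.get("src") or "(no src)")


def images_without_alt(html):
    audit = AltTextAudit()
    audit.feed(html)
    audit.close()
    return audit.missing_alt


html = (
    '<img src="climber.jpg" alt="Man climbing a mountain">'
    '<img src="hero.jpg">'
    '<img src="divider.png" alt="">'
)
print(images_without_alt(html))  # ['hero.jpg', 'divider.png']
```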

Anchor text of the internal links

Last but not least, you should use the focus keyword in the anchor text of your internal links.

Anchor text is the visible part of the link. If it contains the focus keyword, you let Google know what the page you’re linking to is about.

Here’s an example: SEO strategy 🙂

Note: The same applies to external links. However, you can’t always influence what anchor text will be used on external pages linking to your site.

In fact, it may be quite dangerous to manipulate the anchor texts of your backlinks (it is a big “no-no” for Google). More on that in the link building chapter.

Now, here’s the most important part about the optimization of your page for the focus keyword: Don’t force it.

If your focus keyword is “best content marketing strategy for small businesses”, it would be crazy to use it in all the on-page elements mentioned above just to tick them all off the list.

Use common sense and write naturally.

What about LSI keywords?

Many SEO “gurus” recommend using the so-called LSI keywords.

What they mean is that you should find synonyms and related keywords and “sprinkle them” across the page to make sure Google knows what it’s about.

The truth is, LSI keywords are just a dangerous SEO myth. Here’s why:

- the term makes no sense and has nothing to do with the original latent semantic indexing algorithm

- the “technique” puts way too much emphasis on the idea of using certain words artificially, which poses a big risk to the integrity of your text (and usually leads to keyword stuffing)

John Mueller, the Webmaster Trends Analyst at Google, summed up the LSI keywords phenomenon in the following way:

“There’s no such thing as LSI keywords – anyone who’s telling you otherwise is mistaken, sorry.”

What to do instead?

Think about “how to make the post as relevant as possible”, not “how to stuff the post with keywords so that Google thinks it’s relevant”.

It’s actually quite simple: Analyze your competitors, study the topic and cover it in a comprehensive way. If you do that, Google will figure out the topic of your page.

Quality of content

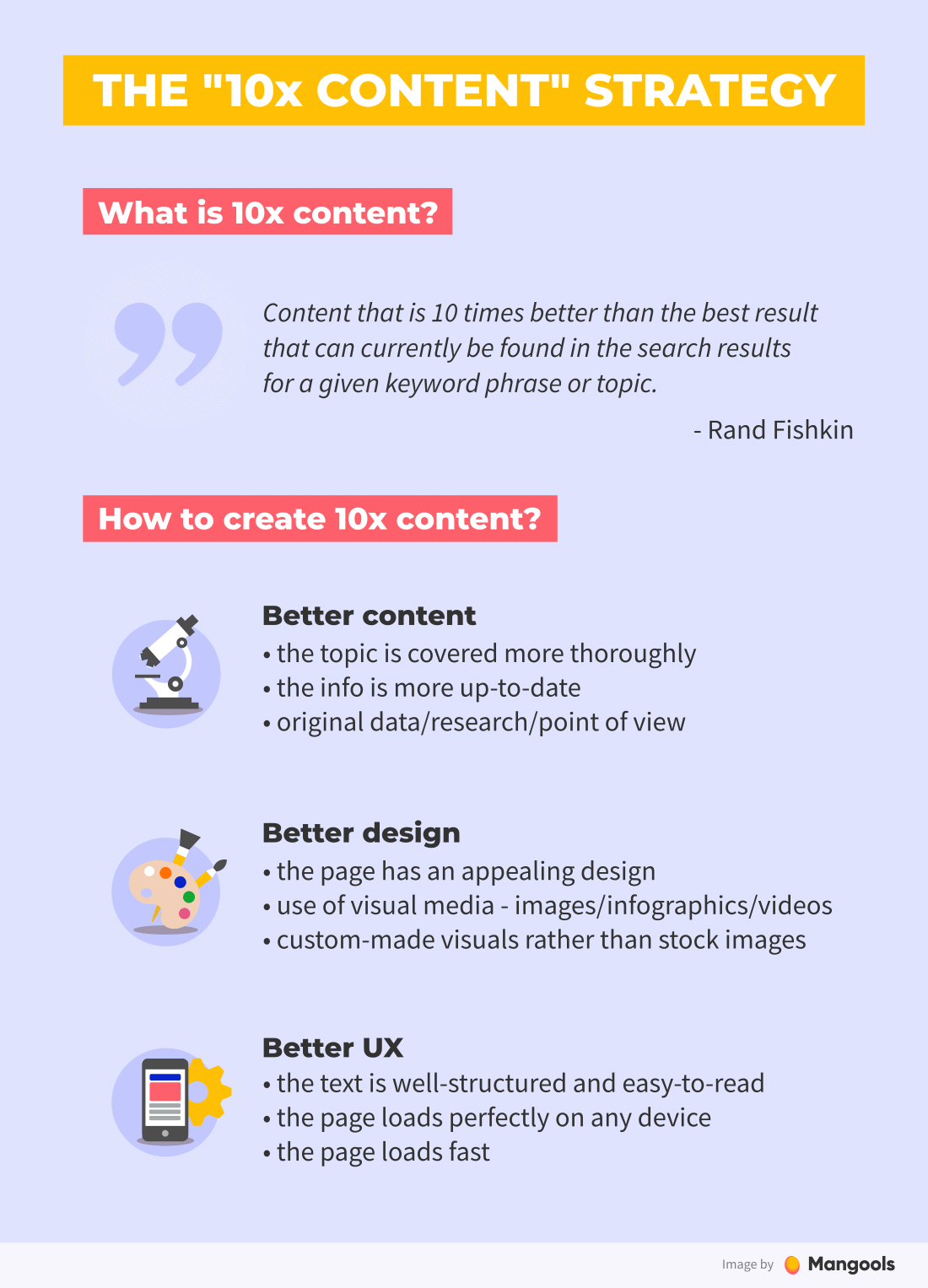

Nowadays, ranking for almost any keyword is much harder than it was in the past – most niches are oversaturated.

But there’s one strategy that works perfectly – creating content that is 10x better than your competitors’ content, also known as the “10x content” strategy.

Here’s what it means in practice:

Better quality

- Cover the topic more thoroughly than your competitors (you can literally look at the top-ranking pages and try to figure out what can be done better)

- Provide more up-to-date information and data

- Provide more expertise (feature insights from experts in your niche) and trustworthiness (cite trusted sources)

- Be original, use unique data, provide new angles, do experiments

- Link to other relevant resources of high quality

Is “linking out” to other websites good for SEO?

Many people are afraid of linking to other websites as they don’t want to “send their visitors away”. The truth is, linking to other quality resources can be good for you from an SEO point of view.

Linking out to relevant content helps to strengthen the topical signals of your pages. It can help Google to better understand the context of your site and it brings added value for your visitors.

Better design

- Use a unique layout for your most important pieces of content

- Add visually stunning media (illustrations, infographics, charts, gifs, screenshots, videos)

- Use your own illustrations and avoid stock photos

Better UX

- Make sure the text is readable (font type and size) and free of grammar errors

- Avoid walls of text – write short, easy-to-digest paragraphs

- Use navigational elements (e.g. table of contents) for longer pages

- Use quotes, info boxes, bulleted lists, bolded sentences

- Optimize the technical aspects (more on that in the next chapter)

Content length

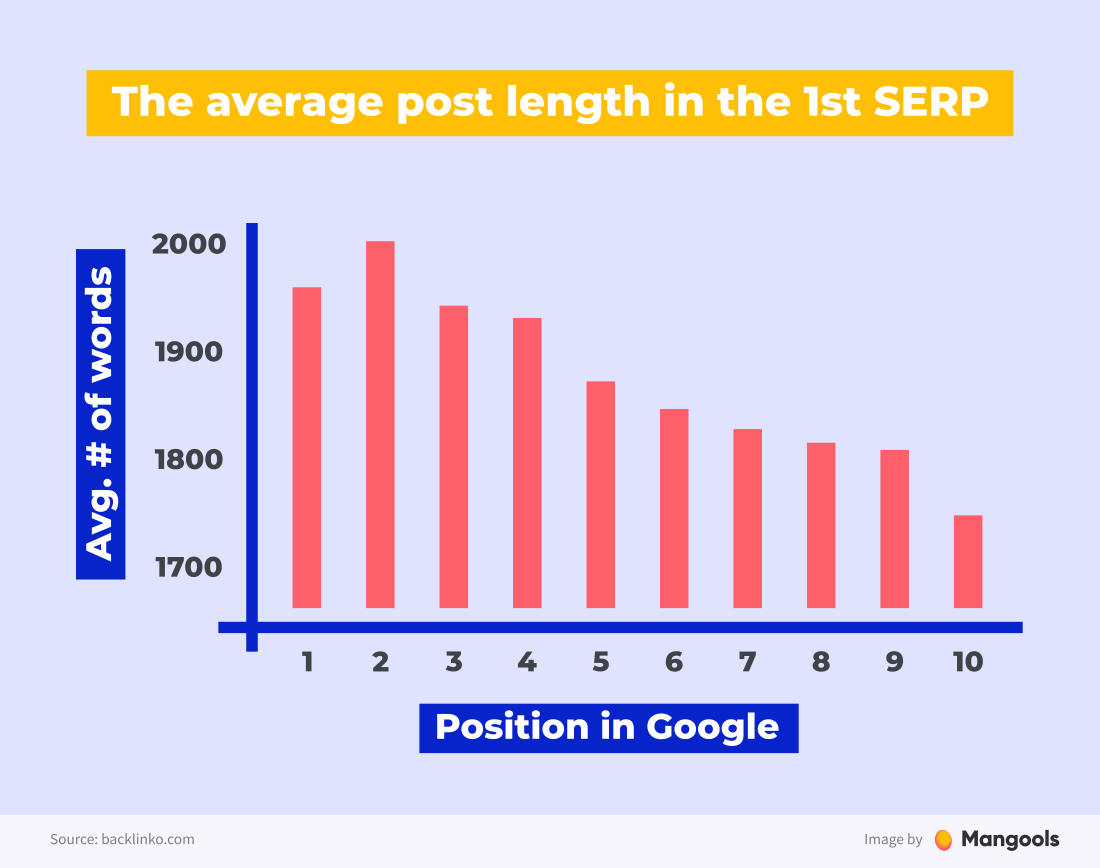

Many people think that content length is one of the ranking factors. A well-known study by Backlinko found that posts with about 2,000 words tended to rank better in Google.

While longer content does tend to rank better, it is not the number of words itself that brings high rankings. It’s the fact that long posts usually cover the topic more comprehensively.

Today, I never write a blog post under 2,000 words if my goal is to rank for a focus keyword.

In fact, to prove this to myself, I launched a brand-new site, Content Hacker, as a “content length” experiment. Could I rank for at least a few dozen keywords with only 11 blog posts, if those posts were 5,000-word “mega-blogs”?

In less than three months, we ranked for 2,500 keyword positions. Most of my 11 posts already rank in Google for their focus keyword. How crazy is that?!

The thing is, as an author, you can’t “bluff” your way through 2,000-5,000 words on your topic. And to earn those rankings – to nail Google’s “Expertise – Authoritativeness – Trustworthiness” qualifier – you must be a subject matter expert.

Content length and comprehensiveness basically check that “expert” box. Again, there’s no shortcut. You must be an expert on the topic you’re writing about, and you must prove it when you write content.

So, how should you approach content length?

- Look at the average word count of the pages that rank for your focus keyword to get a rough idea of how long your content should be (e.g. if every post on the first SERP has 2,000+ words, you most probably won’t rank with an 800-word article).

- Cover the topic comprehensively, addressing everything a potential reader might want to know.

- Always keep in mind that a high number of words alone won’t improve your rankings. Focus on the quality of content, not just quantity.
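To get the benchmark from the first point, you can count the words on the top-ranking pages. Below is a minimal sketch. It assumes you have already extracted the main text of each competing page; fetching and cleaning the HTML is not shown. The median is included because a single very long page can skew the average.

```python
from statistics import mean, median


def word_count_benchmark(competitor_texts: list[str]) -> dict[str, float]:
    """Summarize the word counts of the pages ranking for your focus keyword."""
    counts = [len(text.split()) for text in competitor_texts]
    return {"mean": mean(counts), "median": median(counts), "min": min(counts)}
```

Treat the result as a rough target range, not a quota. The point above still applies: word count alone won’t move your rankings.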

Note: Correlation does not always mean causation in SEO. If something (like longer posts) correlates with higher rankings, it does not necessarily mean that it is a direct ranking factor.

Content updates

Content decay is a real thing.

No matter how successful a piece of content is, there’s a big chance the traffic will gradually decrease unless you keep it fresh and updated.

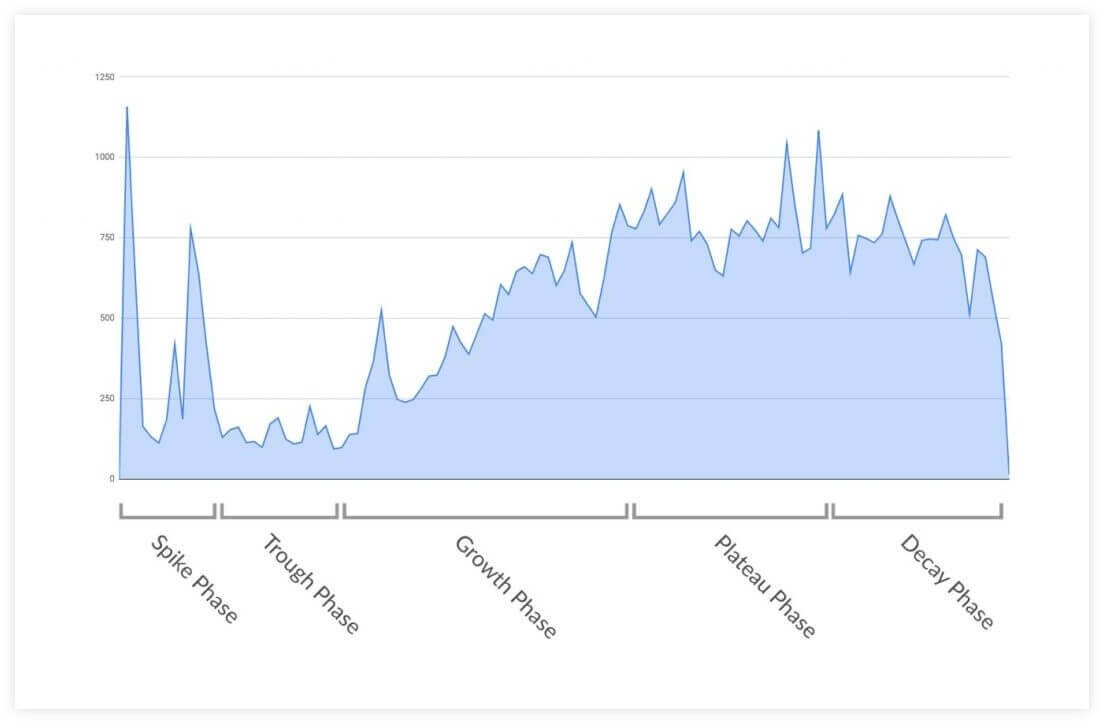

Andrew Tate noticed that many successful blog posts share the same traffic curve and described this phenomenon as the five phases of the content lifecycle.

So, how to make sure your post is not forgotten over time?

The answer is: regular updates.

Regular content updates are an important (yet often overlooked) SEO technique.

One of the reasons content updates may have a positive impact on your rankings is that Google notices how often a page is updated and tends to favor frequently updated pages for some queries.

In other words, not every topic requires fresh content, but many do.

Even if yours doesn’t, an update is a relatively easy way to improve the quality of your content, which is never a bad thing.

Tip: There’s a handy free tool by Animalz that can be connected to your Google Analytics account and identify pages that may need an update based on the declining traffic.
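If you prefer to check this yourself, the underlying idea is simple: compare each page’s traffic across two periods and flag the pages that dropped. This is a hypothetical sketch, not a description of how the Animalz tool works. It assumes you have already exported sessions per URL for both periods (e.g. from Google Analytics). The 20% threshold is an arbitrary example value.

```python
def declining_pages(traffic: dict[str, tuple[int, int]], min_drop: float = 0.2) -> list[str]:
    """Return URLs whose traffic fell by at least `min_drop` (0.2 = 20%).

    `traffic` maps each URL to (sessions in the earlier period, sessions in the recent period).
    """
    flagged = []
    for url, (previous, current) in traffic.items():
        if previous == 0:
            continue  # no baseline to compare against
        drop = (previous - current) / previous
        if drop >= min_drop:
            flagged.append(url)
    return flagged
```

For example, a page that went from 1,000 to 700 sessions (a 30% drop) would be flagged, while one that went from 500 to 480 would not.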

Updating vs. republishing

While smaller changes to your pages don’t require any special steps, after a major overhaul it is probably worth republishing the post. That way, it shows at the top of your blog feed and readers know it went through a big update.

Here are some cases when you may consider republishing your post:

- The update affects more than 50% of the content

- You’ve added a significant amount of new content

- You’ve merged 2 or more posts into one
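If you keep both the old and new versions of a post, a rough way to apply the “more than 50%” rule of thumb is to compare them word by word. The sketch below uses Python’s standard `difflib.SequenceMatcher`. The function names and the word-level comparison are our own assumptions, and the resulting score only approximates how much content actually changed.

```python
from difflib import SequenceMatcher


def changed_fraction(old_text: str, new_text: str) -> float:
    """Estimate the share of the post that changed (0.0 = identical, 1.0 = fully rewritten)."""
    old_words = old_text.split()
    new_words = new_text.split()
    # ratio() returns 2*M/T, where M is the number of matching words
    # and T is the total number of words in both versions.
    similarity = SequenceMatcher(None, old_words, new_words, autojunk=False).ratio()
    return 1.0 - similarity


def should_republish(old_text: str, new_text: str, threshold: float = 0.5) -> bool:
    """Apply the 'update affects more than 50% of the content' rule of thumb."""
    return changed_fraction(old_text, new_text) > threshold
```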

Republishing is also a great opportunity to promote your post again on social media and in your newsletter, or to start a new link building campaign.

Chapter 5

On-page & technical SEO

In the previous chapter, we covered on-page SEO techniques related to content. Now, we’ll take a look at the more technical aspects. Let’s dive in.


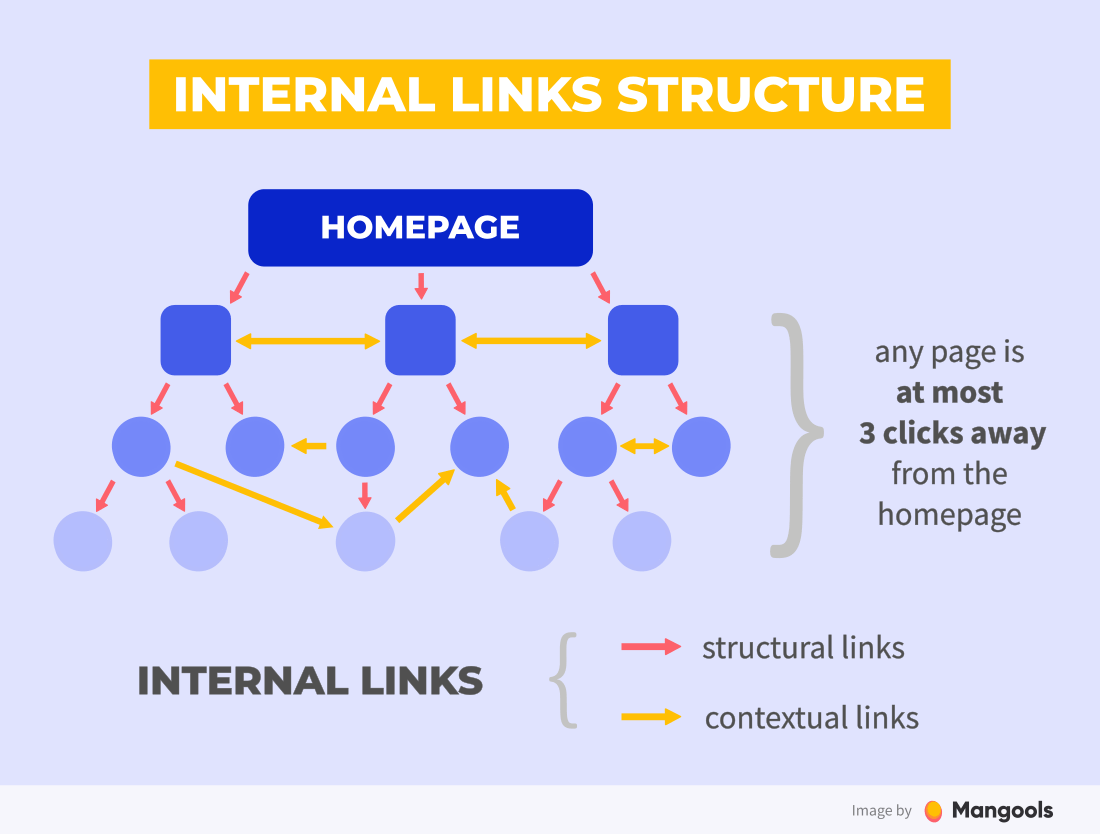

Internal linking

Internal linking is an often overlooked SEO practice.

Yes, external backlinks are essential in SEO (more on that in the next chapter), but having a proper structure of internal links is equally important.

Here’s why:

- Internal links improve the crawlability of your website. If your pages are well interlinked, search engine crawlers can find and index all your pages more easily.

- Internal links improve the UX and engagement. If you have clear navigation, your visitors will find what they need more easily. With relevant contextual links, they’ll spend more time with your content instead of leaving the website to find their answers elsewhere.

- Internal links can improve your rankings. Yes, internal links pass link equity too. If a page has a lot of relevant internal links with descriptive anchor texts, Google will understand the linked page better, consider it important within your page structure and give it more prominence.

The golden rule of good internal linking is this: Any page should be at most 3 clicks away from your homepage.
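You can check this rule by treating your site as a graph of internal links and running a breadth-first search from the homepage. The depth at which a page is first reached is the minimum number of clicks needed to get there. Below is a minimal sketch. It assumes you already have the list of internal links on each page (e.g. from a crawler export), and the URLs are hypothetical.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage; depth = minimum number of clicks."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is always the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links: dict[str, list[str]], home: str = "/", max_clicks: int = 3) -> list[str]:
    """List pages more than `max_clicks` away from the homepage, including unreachable ones."""
    depths = click_depths(links, home)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    # Pages missing from `depths` can't be reached from the homepage at all (orphans).
    return sorted(p for p in all_pages if depths.get(p, max_clicks + 1) > max_clicks)


site = {
    "/": ["/blog"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/blog/post-1/part-2"],
    "/blog/post-1/part-2": ["/deep"],
    "/orphan": ["/"],
}
print(too_deep(site))  # ['/deep', '/orphan']
```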

The biggest advantage of internal linking? Unlike external links, internal links are fully in your hands.

So, how do you build a well-interlinked website?

Use clear navigational elements

The key to having a well-interlinked website is to have properly structured navigational elements.

People are used to navigating through websites in a certain way and you should make this process as easy and clear as possible for them.

- Menu – a main navigational element that should be clear and descriptive

- Breadcrumbs – very useful if you have a deeper structure of nested pages

- Categories – categorize your content into logical categories so that people can find similar content easily

Further reading: Website Navigation: 7 Best Practices

Link from the body of the page

Besides the structural internal links, it is a good practice to also link to other relevant pages from within the page body.

These links include contextual in-text links or “further reading” boxes that link to other pages from your website that might be interesting for your visitor.

Just follow these two simple practices:

- Every time you’re about to publish a new post, think about your other content the reader might find useful and link to it contextually.

- Once the new post or page is published, add a couple of internal links from other topically-relevant pages.

Over the past few months, I’ve reviewed over 1,000 websites. One of the most common “mistakes” I see is that people don’t make their headlines clickable, especially when it comes to blogs.

So they’ll have a headline, a post snippet and a ‘Click here’ or ‘Read more’ button stacked vertically. As you can imagine, ‘Read more’ is the only link they have in place.

Not only does this make for a poor user experience (people are naturally inclined to click on images and headlines to read something), but you also don’t want the internal links to every single one of your articles to just say ‘read more’.

It’s basic advice, sure, but it’s amazing how many sites I come across that still do this.

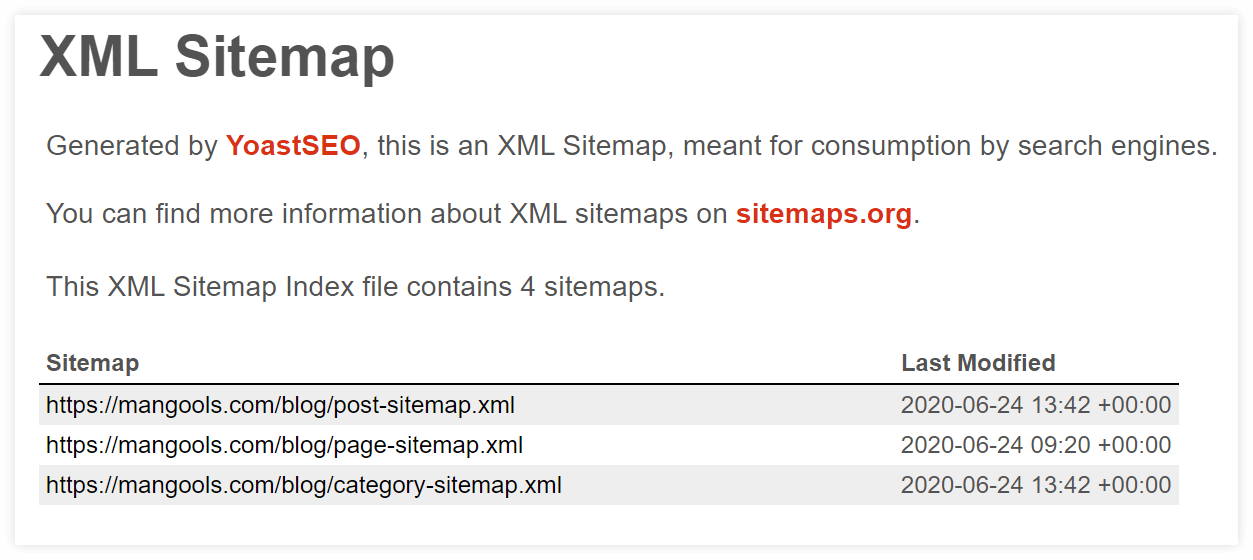

Sitemaps

A sitemap is a structured list of all pages on a website available to be crawled by search engines. Having one is yet another way to let crawlers find all your pages.

What kinds of sites will benefit from a sitemap (according to Google):

- Large websites with hundreds or thousands of pages

- New sites with few or no backlinks

- Websites that don’t have many internal links (e.g. they contain pages with no internal links)

- Websites with many media files (e.g. image gallery)

Do you always need a sitemap?

No, you don’t. Especially if you have a small website with a couple of well-interlinked pages.

On the other hand, having a sitemap can never hurt you. What’s more, it can contain useful additional information, like the lastmod tag (the date the page was last updated), which tells crawlers whether the page needs to be re-crawled.
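For illustration, here’s a minimal sketch of what a sitemap with lastmod dates contains, generated with Python’s standard `xml.etree.ElementTree`. The namespace is the one defined by the sitemaps.org protocol. The URLs and dates are made up. A plugin like Yoast SEO generates this file for you, so you would only need something like this on a custom-built site.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: dict[str, date]) -> str:
    """Build an XML sitemap with one <url> entry (loc + lastmod) per page."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, last_modified in pages.items():
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # special characters are escaped automatically
        ET.SubElement(url, "lastmod").text = last_modified.isoformat()  # YYYY-MM-DD
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap({
    "https://example.com/": date(2024, 1, 15),
    "https://example.com/blog/": date(2024, 3, 2),
}))
```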

Find out more in our SEOpedia post on XML sitemaps.

If you’re not sure how to create a sitemap and your website runs on WordPress (which it most probably does), we recommend creating the sitemap with the Yoast SEO plugin.

It will look something like this:

To let Google know about your sitemap, you can submit it to Google Search Console.

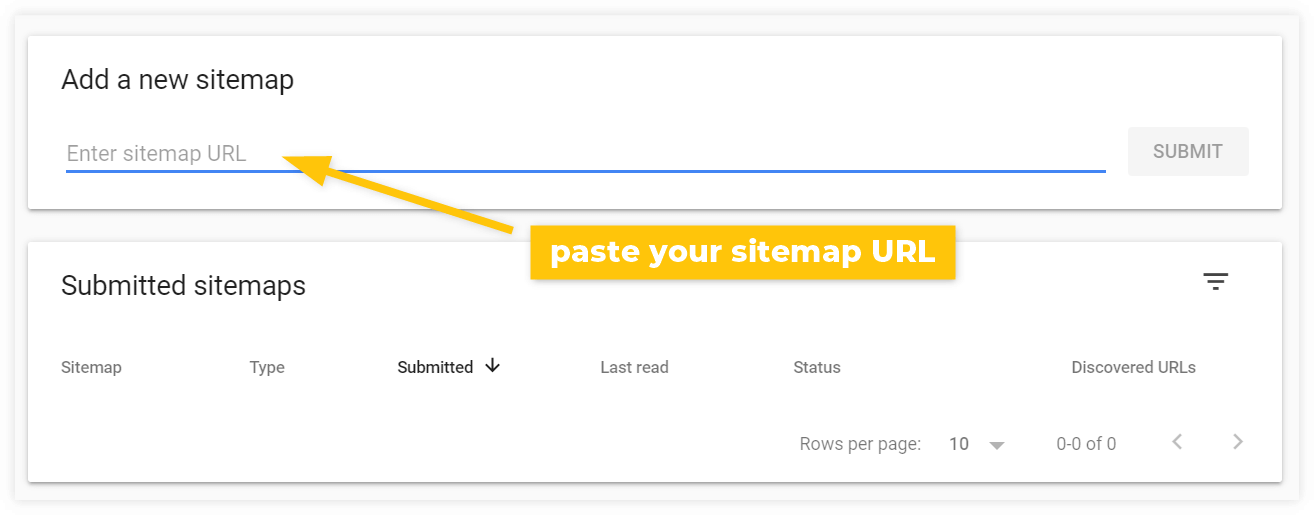

Then go to Google Search Console > Sitemaps and paste the sitemap URL under Add a new sitemap:

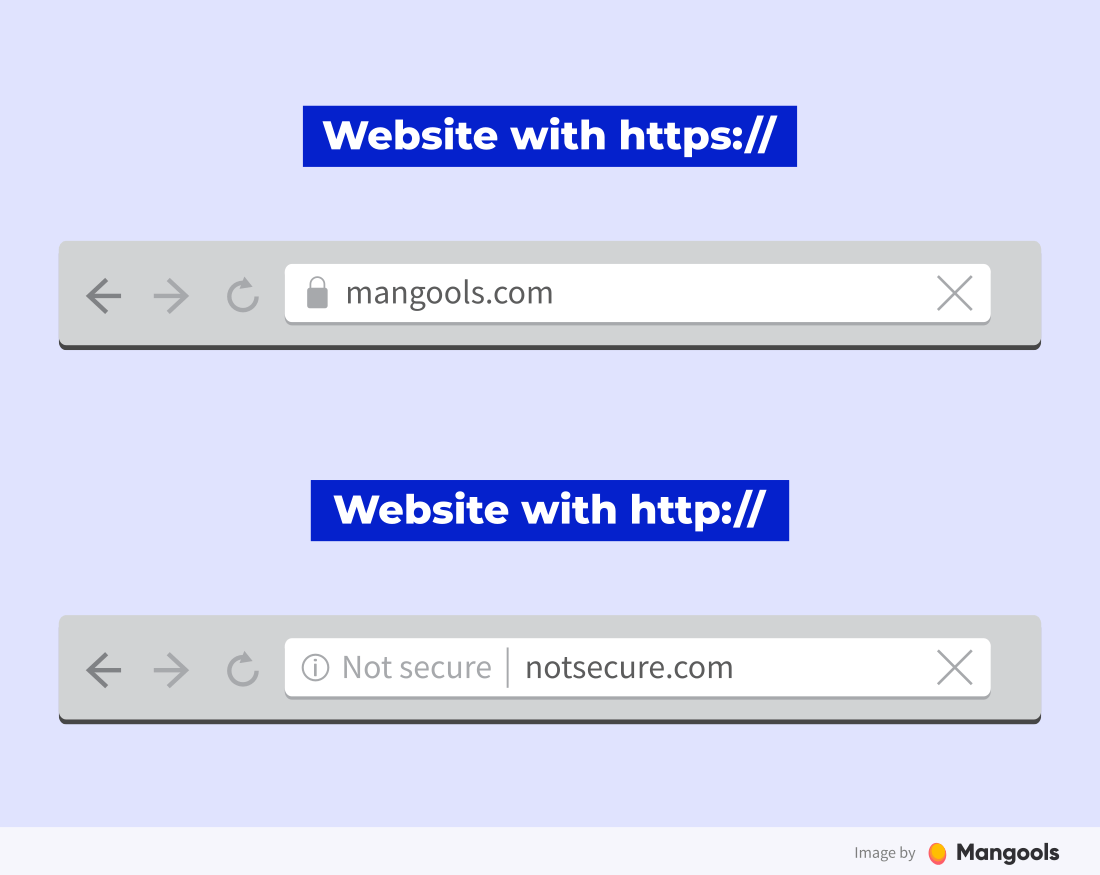

HTTPS

This one goes without saying. There’s really no excuse for not using an SSL certificate these days, especially since there are free options available (like Let’s Encrypt).

The security of your website’s visitors should be a priority for you.

Not only for the obvious reasons, but also because HTTPS became a minor ranking signal in 2014. In other words, your website may perform worse in Google if you don’t use HTTPS.

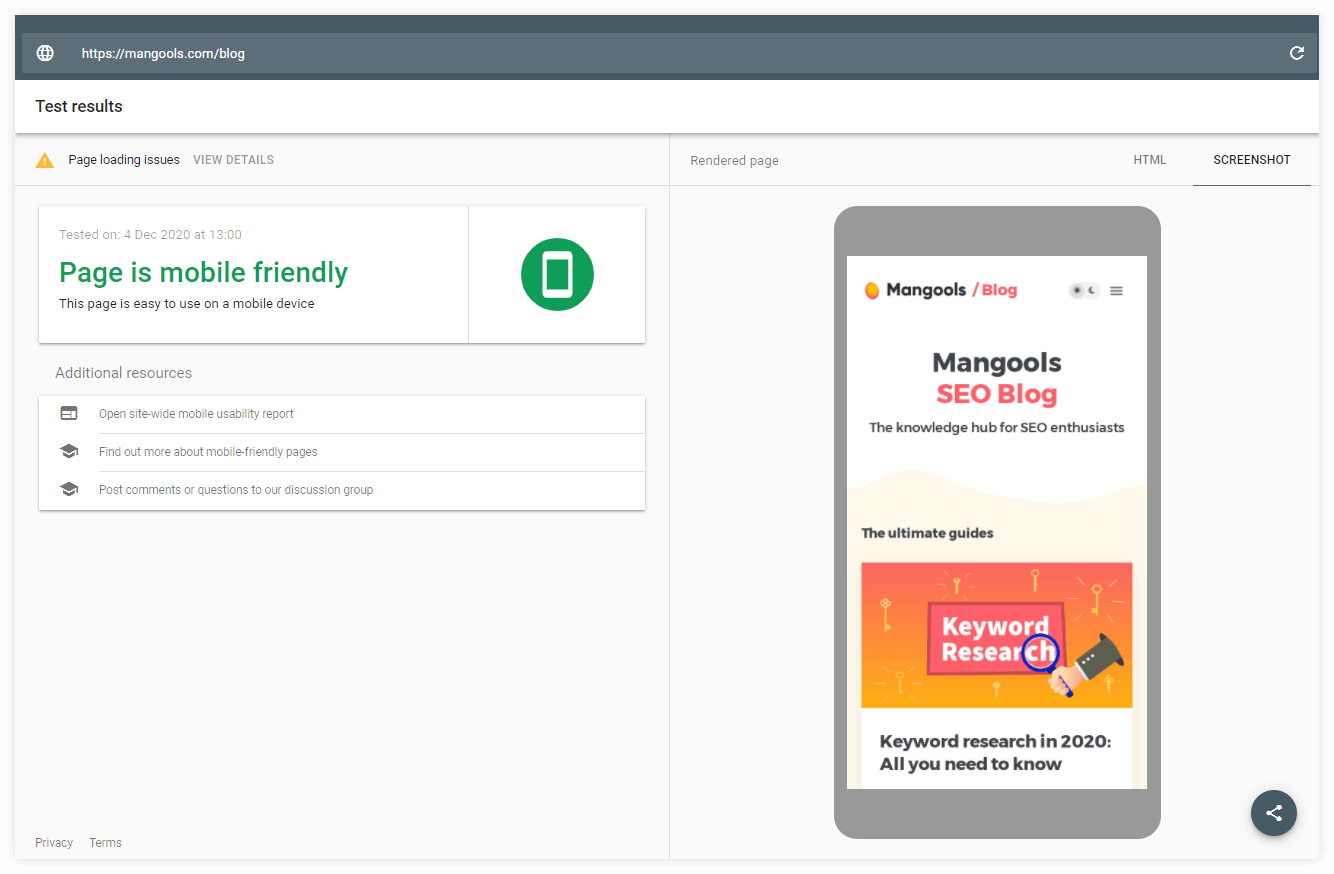

Mobile-friendliness

As of 2019, Google uses mobile-first indexing, which means most websites are crawled and indexed in their mobile version instead of the desktop version.

Having a mobile-friendly website is an essential SEO task. In practice, it means:

- A responsive layout

- A menu that is easy to navigate on mobile devices

- Compressed images

- No aggressive pop-ups

- A readable font

If you’re not sure whether your website is mobile-friendly, you can test it with this tool from Google or go to Search Console and see if there are any issues in the Mobile Usability section.

Luckily, most developers keep mobile-friendliness in mind these days, so if you pick a quality WordPress theme, you should be fine.

But there’s one particular mobile SEO factor that you should pay close attention to: page speed.

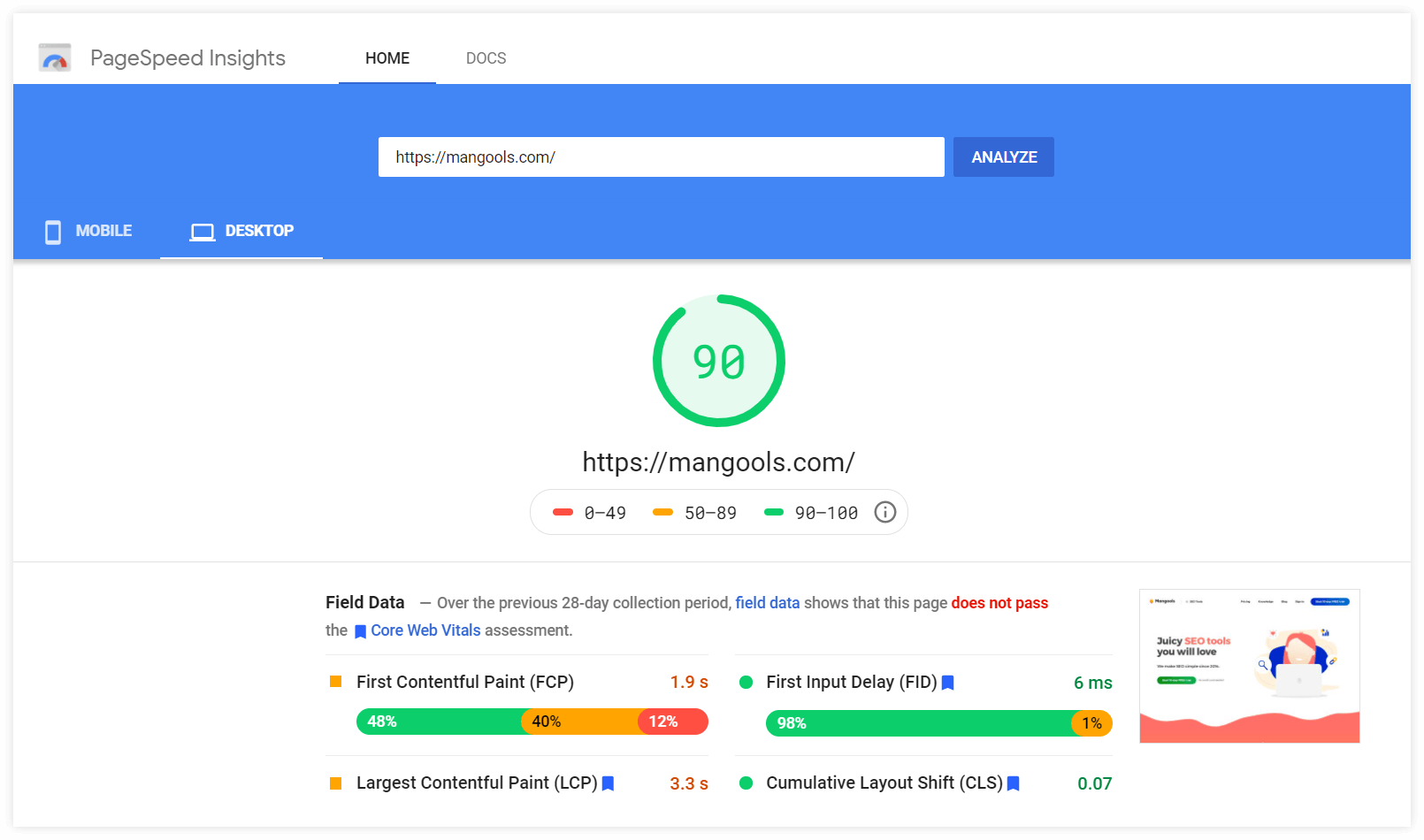

Page speed

Page speed is one of the most important aspects of technical SEO and an essential UX factor. Nobody is willing to wait more than a couple of seconds for a page to load.

What’s more, page speed is a confirmed ranking factor.

There are many useful tools that will help you measure your page speed and find the most common page speed issues.

Now, let’s take a closer look at the best practices to keep your page speed at a satisfactory level:

1. Use quality web hosting

Your web hosting is the first thing that influences your page speed.

If your hosting has a poor server response time, there’s little any further optimization can do.

You don’t have to worry about milliseconds, but don’t expect awesome performance from providers that offer hosting for $0.10/month.

Note: Most beginners and small website owners will do just fine with quality shared hosting. It is affordable and can be upgraded in the future if needed.

Last but not least, make sure the physical location of the server is as close to your target audience as possible (e.g. if you target the US market, don’t have a server located in Germany).

2. Implement caching

Caching is a process in which parts of your page are remembered (either by your server or the visitor’s browser) in order to make the next loading much faster.

There are two main types of caching:

- Browser caching – the caching is done on the user’s side; if you use WordPress, you can use one of many plugins like WP Rocket or W3 Total Cache (always use only one!)

- Server-side caching – runs at a lower level and is more effective; usually provided by managed web hosting services
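Browser caching is controlled by the Cache-Control HTTP response header that your server (or caching plugin) sends with each file. Below is a minimal sketch of how such a rule could map file types to cache lifetimes. The lifetimes are example values, not recommendations. The long lifetimes also assume that a file’s name changes whenever its content changes, so browsers never keep a stale copy.

```python
from pathlib import PurePosixPath

# Example cache lifetimes in seconds (assumptions; tune them for your own site).
CACHE_LIFETIMES = {
    ".css": 31_536_000,   # one year
    ".js": 31_536_000,
    ".woff2": 31_536_000,
    ".jpg": 2_592_000,    # 30 days
    ".jpeg": 2_592_000,
    ".png": 2_592_000,
    ".webp": 2_592_000,
}


def cache_control_header(path: str) -> str:
    """Return a Cache-Control header value for a URL path (without query string)."""
    suffix = PurePosixPath(path).suffix.lower()
    max_age = CACHE_LIFETIMES.get(suffix)
    if max_age is None:
        # HTML and unknown types: the browser may store them but must revalidate before reuse.
        return "no-cache"
    return f"public, max-age={max_age}"
```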

Read more on website caching in this great post by Winning WP.

3. Consider AMP

The Accelerated Mobile Pages technology allows faster content distribution on mobile devices. In practice, it means the content is served in a simpler, stripped-down version of your page on smartphones.

It can be very useful for content-heavy websites (like news magazines or larger blogs). If you run a WordPress website, there’s an official AMP plugin to help you with implementation.

4. Limit third-party scripts

Every third-party script you use on your website adds to the time a page needs to load. These include:

- WordPress plugins

- Analytics and remarketing scripts

- Commenting services (e.g. Disqus)

- Chat widgets

It doesn’t mean you shouldn’t use any of these. Just follow these simple rules:

- Only use the services you really need. This is especially important with WordPress plugins. Don’t use a special plugin for every single small feature on your website. Too many plugins can slow down your website.

- If possible, delay the activation of third-party scripts so that they are loaded only after a couple of seconds or when the visitor scrolls down the page. This can be applied to commenting services as well as chat widgets.

You’ll find more useful information on this topic in a great guide on analyzing third-party performance by Kinsta.

5. Optimize your images

Big image files are one of the most common factors that cause slow page loading.

Here are some image optimization practices you should follow to make sure your images are not too big:

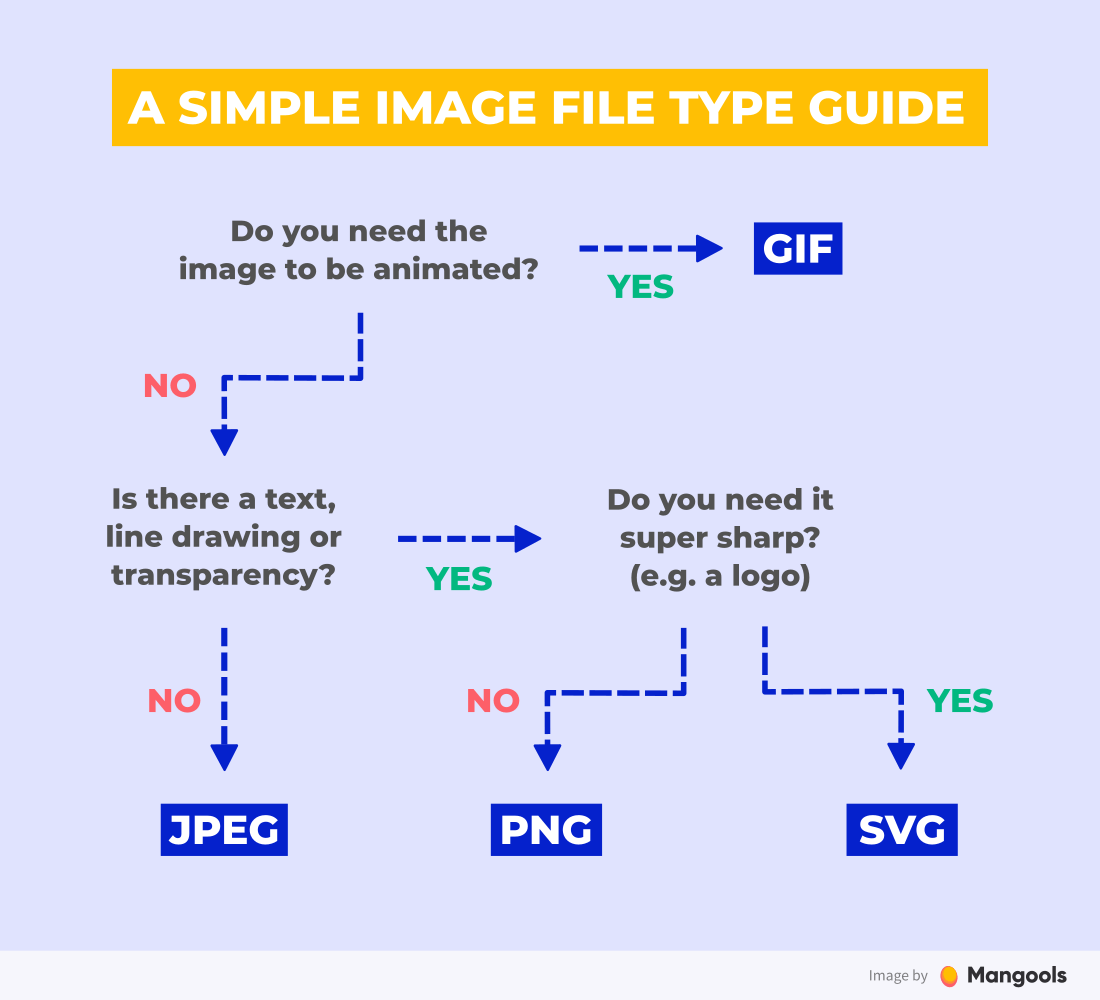

a) Use the right file type

Using the right image file format can help you get a better image quality and reduce the file size.

- JPEG – photos

- PNG – line drawings, screenshots, images that contain text

- GIF – animated images

- SVG – logos, icons, simple illustrations

Next-gen formats

The ideal solution is definitely to use the so-called next-gen formats (WebP, JPEG 2000 and JPEG XR) as they were designed specifically to save resources.

However, they don’t have 100% browser support yet (nor are they supported in WordPress), so you can either use them selectively or wait until they’re supported across all platforms.

Take a look at this simple guide to selecting the right image file type:

b) Resize your images

Many people upload images that are simply too big. If your blog content area is 800px wide, it is overkill to use 2500px-wide images.

Before uploading an image to the server, use an image editor to resize it to fit your website’s content width. It can be a little wider, but you rarely need the full size (especially for photos).
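The arithmetic behind resizing comes down to keeping the aspect ratio: you scale the height by the same factor as the width. Here is a small sketch that assumes an 800px content area, as in the example above. The function name is hypothetical.

```python
def fit_width(width: int, height: int, max_width: int = 800) -> tuple[int, int]:
    """Scale an image down to `max_width`, keeping its aspect ratio; never upscale."""
    if width <= max_width:
        return width, height
    scale = max_width / width
    return max_width, round(height * scale)


print(fit_width(2500, 1667))  # (800, 533)
```

If you use the Pillow library, `Image.thumbnail()` performs the same aspect-ratio-preserving downscale in place.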

c) Compress your images

Image compression is a process that removes some unnecessary image data while preserving image quality.

You can either do it manually and try to find the best ratio between quality and file size, or automate the whole process with a plugin (e.g. Imagify, ShortPixel, TinyPNG).

d) Consider lazy loading

Lazy loading is a simple technique in which the content visible above the fold is loaded first, and the rest (typically images further down the page) is loaded only when the visitor scrolls near it. It is very useful for image-heavy pages.

Read more about image lazy loading in this great guide by ImageKit.

Image alt texts

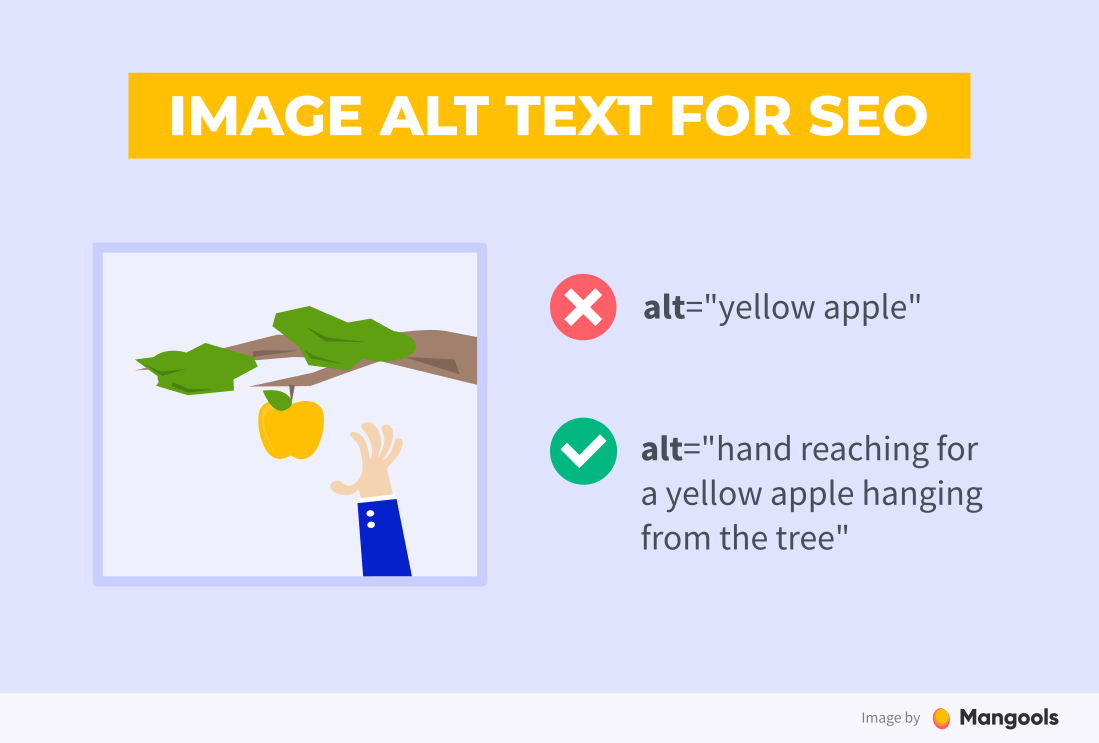

Image alt text (also called the alt attribute or, colloquially, the alt tag) is a piece of text in the HTML code that describes the image and is displayed if the image can’t be loaded.

It is very important for 2 reasons:

- From the UX point of view – screen readers read the alt text aloud to visually impaired visitors

- From the SEO point of view – alt text provides better context for crawlers since they can’t “see” your image

Note: You don’t always need to use alt text, especially if the image does not convey any meaning. Check this alt decision tree for more information.

To write a good alt text, you should:

- be descriptive – describe the image in the best way possible

- keep it short – 5 to 10 words should be just fine

- avoid keyword stuffing – alt text is not a place to stuff your keywords unnaturally
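To audit existing pages, you can list images with missing or overly long alt text. Below is a minimal sketch using Python’s built-in `html.parser`. The 10-word limit mirrors the guideline above. An empty alt="" is deliberately not flagged, since that is the usual way to mark decorative images that don’t need a description.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Collects <img> tags whose alt text is missing or longer than `max_words`."""

    def __init__(self, max_words: int = 10):
        super().__init__()
        self.max_words = max_words
        self.issues: list[tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        # Also called for self-closing tags like <img ... />.
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src") or "(no src)"
        alt = attributes.get("alt")
        if alt is None:
            self.issues.append((src, "missing alt attribute"))
        elif len(alt.split()) > self.max_words:
            self.issues.append((src, f"alt text longer than {self.max_words} words"))


audit = AltTextAudit()
audit.feed(
    '<img src="a.jpg">'
    '<img src="b.jpg" alt="">'
    '<img src="c.jpg" alt="yellow apple on a wooden table">'
    '<img src="d.jpg" alt="one two three four five six seven eight nine ten eleven"/>'
)
print(audit.issues)
```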

Besides the alt texts, you should also use:

- descriptive image file names (yellow-apple.jpeg is always better than DCIM1523.jpeg)

- image title and description

- captions (optional)
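Descriptive file names can be generated from a short description of the image. Below is a minimal sketch; the function name is hypothetical. It lowercases the text, strips accents and joins the words with hyphens.

```python
import re
import unicodedata


def image_filename(description: str, extension: str = "jpeg") -> str:
    """Turn a short image description into a descriptive, hyphenated file name."""
    # Decompose accented characters and drop the accents (e.g. "é" -> "e").
    ascii_text = unicodedata.normalize("NFKD", description).encode("ascii", "ignore").decode("ascii")
    # Replace every run of non-alphanumeric characters with a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")
    return f"{slug}.{extension}"


print(image_filename("Yellow apple"))  # yellow-apple.jpeg
```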

Title tags and meta descriptions

Title tag and meta description are HTML elements that represent the title and description of the page. They are displayed in the search results or when the page is shared on social media.

They are crucial from the SEO point of view. A well-written title tag and meta description are your only chance to catch the user’s attention in the SERP.

Here are some tips on how to write a good title tag and meta description:

1. Include the focus keyword

As we mentioned in the previous chapter, the title tag and meta description are good places to put your focus keyword.

The best practice is to place the focus keyword near the beginning of the title tag. It is not mandatory, though, and you should not force it.
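If you are reviewing many titles at once, you can measure how close to the beginning the focus keyword appears. Below is a rough sketch. Treating “within the first four words” (index 3) as near the beginning is our own assumption, not a Google rule.

```python
import re
from typing import Optional


def _words(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def keyword_position(title: str, keyword: str) -> Optional[int]:
    """Return the word index where the focus keyword starts in the title, or None."""
    words, kw = _words(title), _words(keyword)
    if not kw:
        return None
    for i in range(len(words) - len(kw) + 1):
        if words[i:i + len(kw)] == kw:
            return i
    return None


def keyword_near_start(title: str, keyword: str, max_index: int = 3) -> bool:
    """True if the keyword starts within the first `max_index + 1` words."""
    position = keyword_position(title, keyword)
    return position is not None and position <= max_index
```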

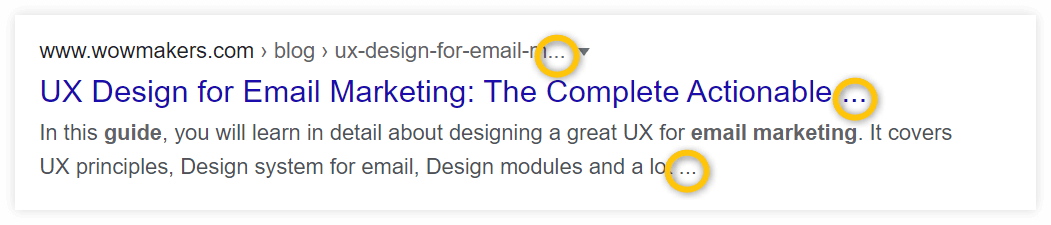

2. Be careful about the length

The length limit is measured in pixels, not characters: roughly 600px for a title tag and 960px for a meta description.

If they’re too long, Google truncates them, which looks unpolished and can lower your click-through rate.

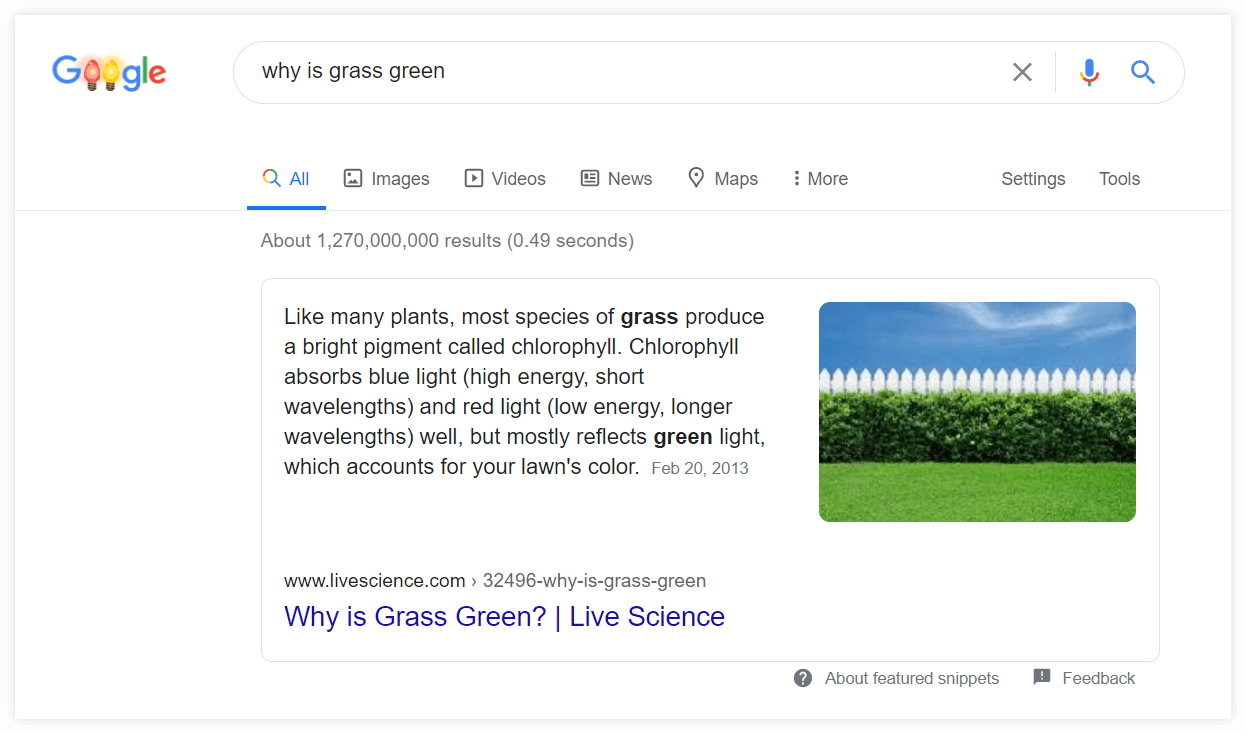

To make sure your title and description have the right length, you can use our free tool called SERP Simulator.

Besides the length check, it allows you to check the actual SERP results for any keyword in any location to compare them with your snippet and get inspiration from what works for your competitors.

Tip: If you would like to learn more about changing the location within search engines and explore ways to check and analyze Google SERPs in various locations, make sure to check out this article: How to change location on Google.

This brings us to the next point…

3. Stand out from the crowd

Here are some elements you can use to make your title tag unique:

- question

- number

- year

- an all-caps word

- brackets

- your brand name

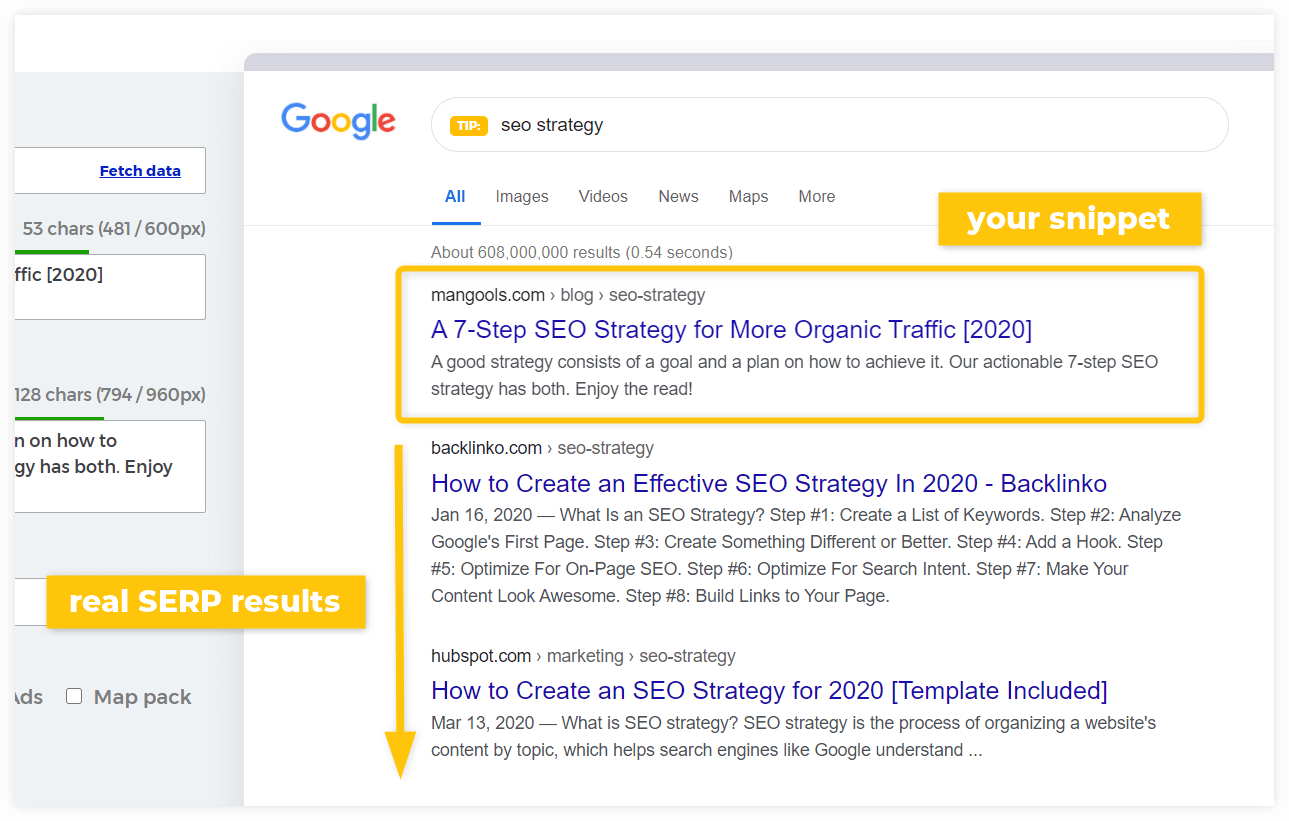

Featured snippets

A featured snippet (sometimes called “position zero”) is a selected search result that appears above the standard 10 results in Google search. Its goal is to answer the user’s question directly in the SERP.

Here’s a typical example:

There are 3 main types of featured snippets:

- Paragraph – usually a short answer to a how, who, why, when, or what question

- List – mostly step-by-step instructions or recipes

- Table – often shown for comparison charts, data tables, etc.

The biggest advantage of having a featured snippet is that you can “outrank” your competitors even if your page has a lower position.

Many pages that appear in featured snippets don’t rank 1st. They would normally appear on the 2nd, 3rd or even lower position.

So, how do you get a featured snippet?

1. Look for keywords with featured snippets

A great place to start is a keyword research tool where you can look specifically for “question” keywords.

If you click on a keyword in KWFinder, the presence of a featured snippet is marked with a small icon in the SERP table:

Another great source of keywords with featured snippets is the so-called “People also ask” box that often appears below the featured snippet.

2. Answer the question first

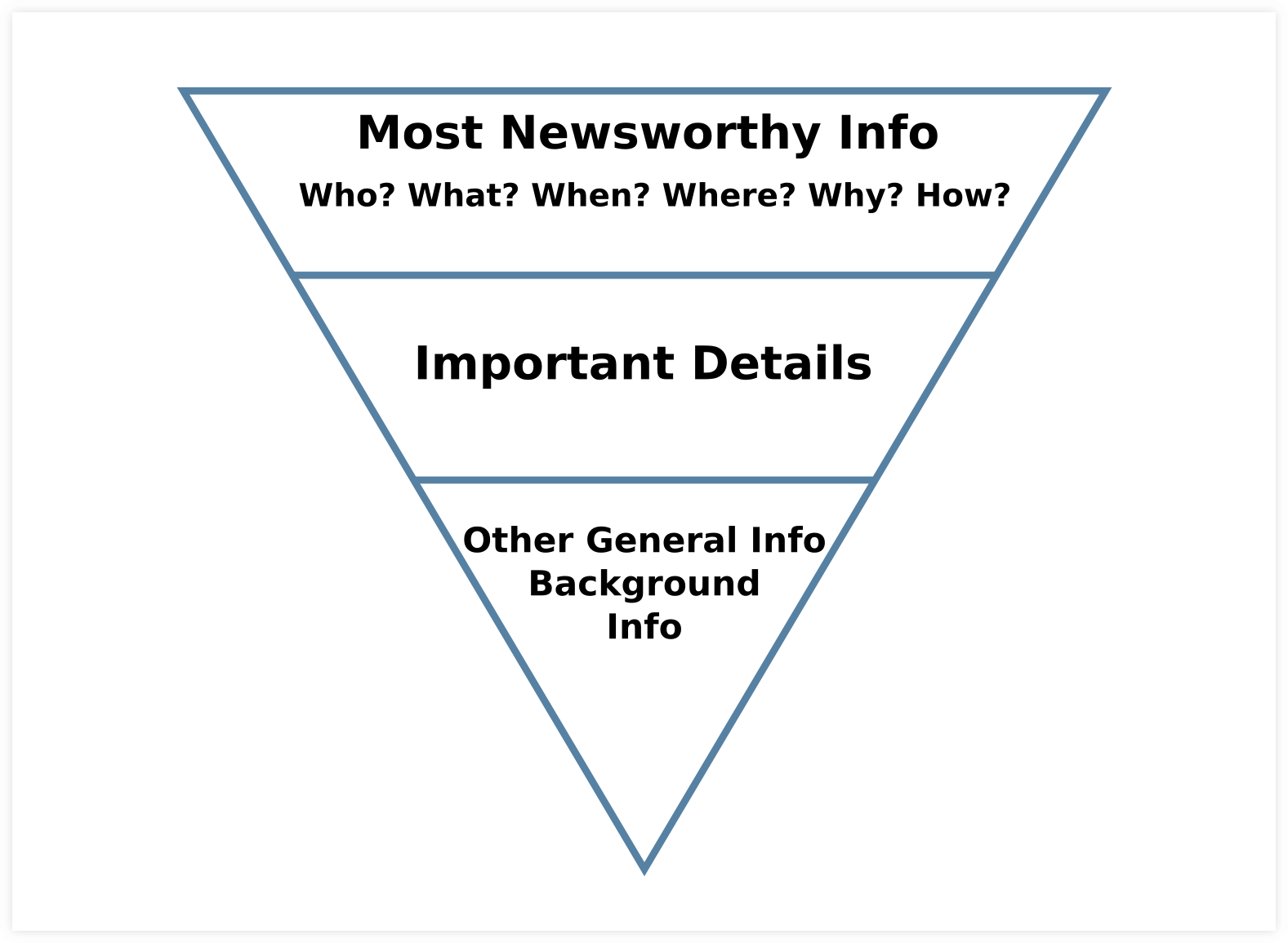

The key to appearing in the featured snippet is to answer the question as early as possible on the page. This style of writing is called “the inverted pyramid writing style”.

It means you provide the definition first and then continue with the supporting details.

3. Follow the optimal word count

You can’t mark exactly which text should appear in a featured snippet. Google selects that part of your text automatically.

However, you can optimize the length of the text you expect to be picked so that it fits the usual featured snippet length.

Most featured snippets have a length of 40-50 words.
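A quick way to check your answer paragraph against this range is to count its words. Below is a minimal sketch that uses the 40-50 word range above as a rule of thumb, not a hard limit.

```python
def snippet_length_check(answer: str, min_words: int = 40, max_words: int = 50) -> str:
    """Classify an answer paragraph against the typical featured snippet length."""
    word_count = len(answer.split())
    if word_count < min_words:
        return f"too short ({word_count} words)"
    if word_count > max_words:
        return f"too long ({word_count} words)"
    return f"ok ({word_count} words)"
```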

This brings us to the last point…

4. See what works for your competitors

Last but not least, use the fact that there’s an existing featured snippet and get inspired by what works for your competition.

Look at things like:

- type of the snippet (paragraph, list, table)

- length of the text

- placement of the text on the page

- presence of images

If you want to dive deeper into on-page optimization, check out our practical on-page SEO guide for beginners. It covers everything from technical stuff, through content and CTR optimization to monitoring and analysis of your progress.

Chapter 6

Backlinks & link building

In the 6th chapter of our SEO guide for beginners, we will discuss backlinks – one of the most important aspects of search engine optimization.


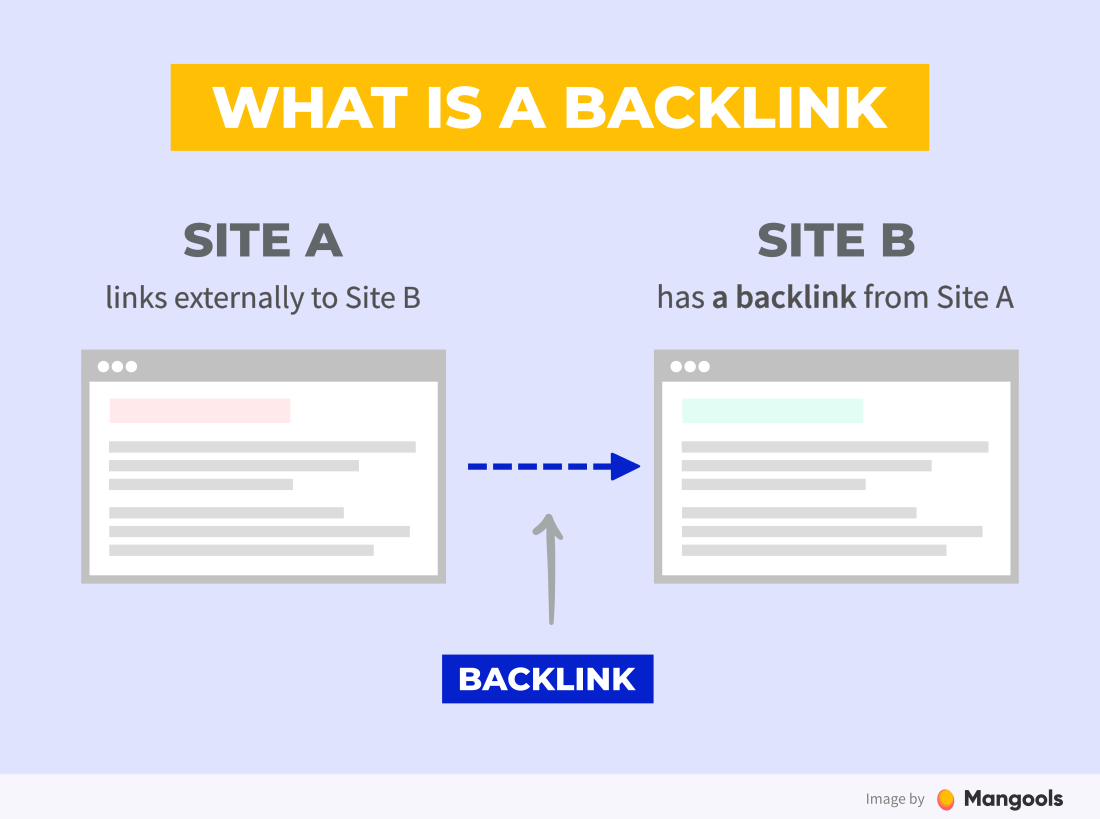

What is a backlink?

A backlink is a link from one page to another. If page A links to page B, we say that page B has a backlink from page A.

Backlinks are one of the most important ranking signals. There’s a strong correlation between the quantity and quality of backlinks and rankings.

Why are backlinks so important?

Backlinks have been a very influential factor in search engine algorithms since the very beginning.

They work like academic citations. Search engine developers realized that if many quality resources link to a certain page, the page is likely valuable and trustworthy.

Link equity

Link equity (also called “link juice”) is a term used to describe the authority a page transfers to another page through a link.

Google PageRank

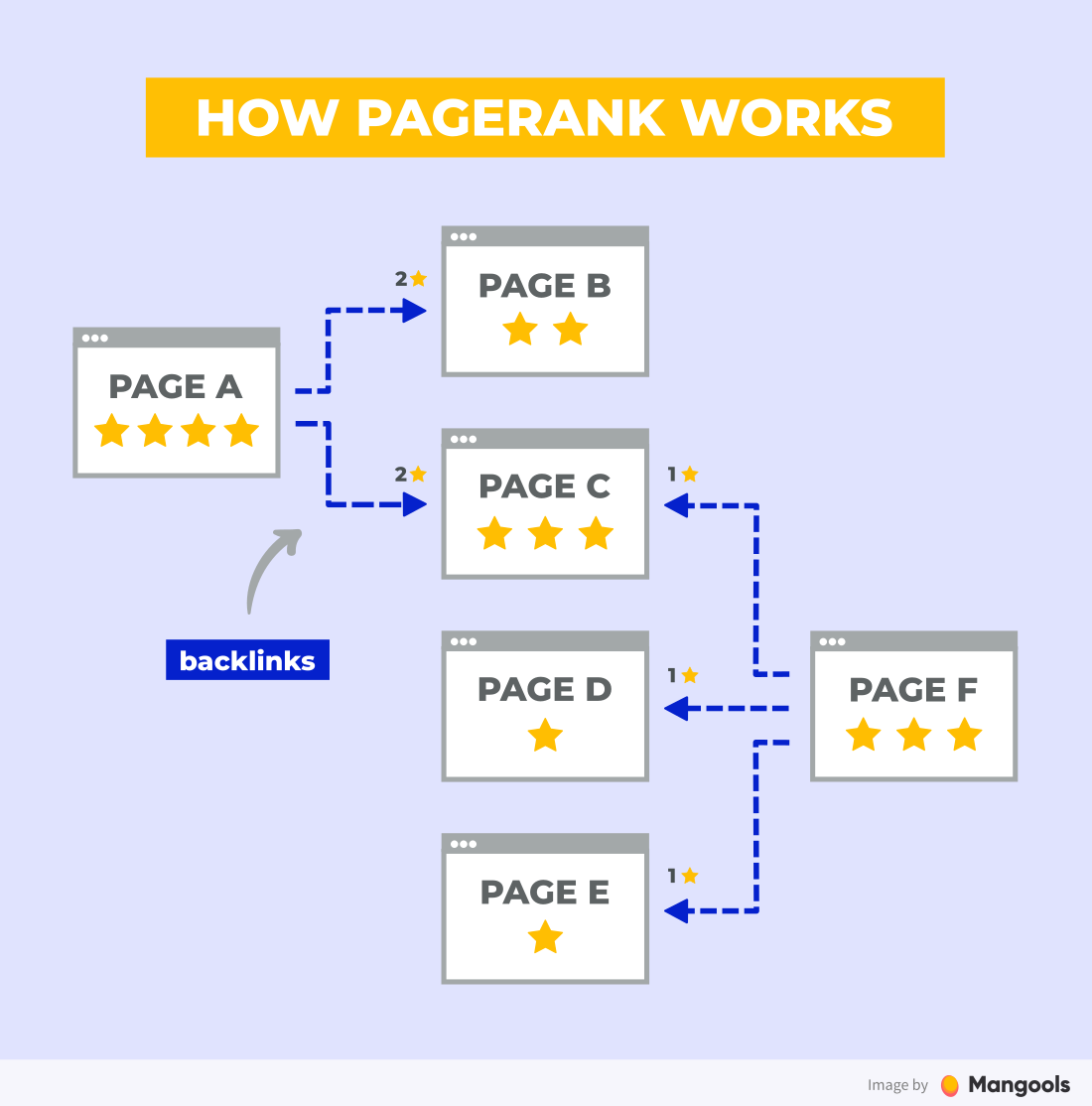

At the very beginning, Google created an algorithm called PageRank to incorporate the quality and quantity of backlinks into its ranking system and determine the relative importance of web pages in search results.

The three factors that influence the PageRank of a page are:

- Number of backlinks – the more backlinks the page has, the better

- Number of links on the linking page – the value (called link equity) is distributed among all the pages that are linked from the linking page

- PageRank of the linking page – a backlink from a page with higher PageRank passes more link equity
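These three factors come together in the classic PageRank formula. The sketch below is a textbook power-iteration version with the commonly cited damping factor of 0.85. It is not Google’s current ranking algorithm. The three-page graph is hypothetical. Pages without outgoing links have their rank spread evenly across all pages.

```python
def pagerank(links: dict[str, list[str]], damping: float = 0.85, iterations: int = 100) -> dict[str, float]:
    """Simplified PageRank by power iteration; the scores sum to 1."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page receives the same small "random jump" share.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # More backlinks = more shares received; more links on the
                # linking page = smaller share per link; higher rank = bigger share.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page (no outgoing links): spread its rank over all pages.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


scores = pagerank({"A": ["B", "C"], "B": ["C"], "C": ["A"]})
print(sorted(scores, key=scores.get, reverse=True))  # ['C', 'A', 'B']
```

In this example graph, page C ends up with the highest score because it has backlinks from both A and B.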

PageRank metric

Do not confuse the PageRank algorithm with an old metric of the same name that Google used to display the rank of pages on a scale from 0 to 10.

They are not the same and while the PageRank metric was discontinued, the PageRank algorithm is still a part of Google’s ranking.

Read more in our detailed post about Google PageRank.

Types of links

Links can be classified into various categories. Here are the most basic ones you should know:

Internal vs. external links

This one’s quite obvious.

An internal link is a link from one page to another within the same website, while an external link is a link from an external website.

Nofollow links

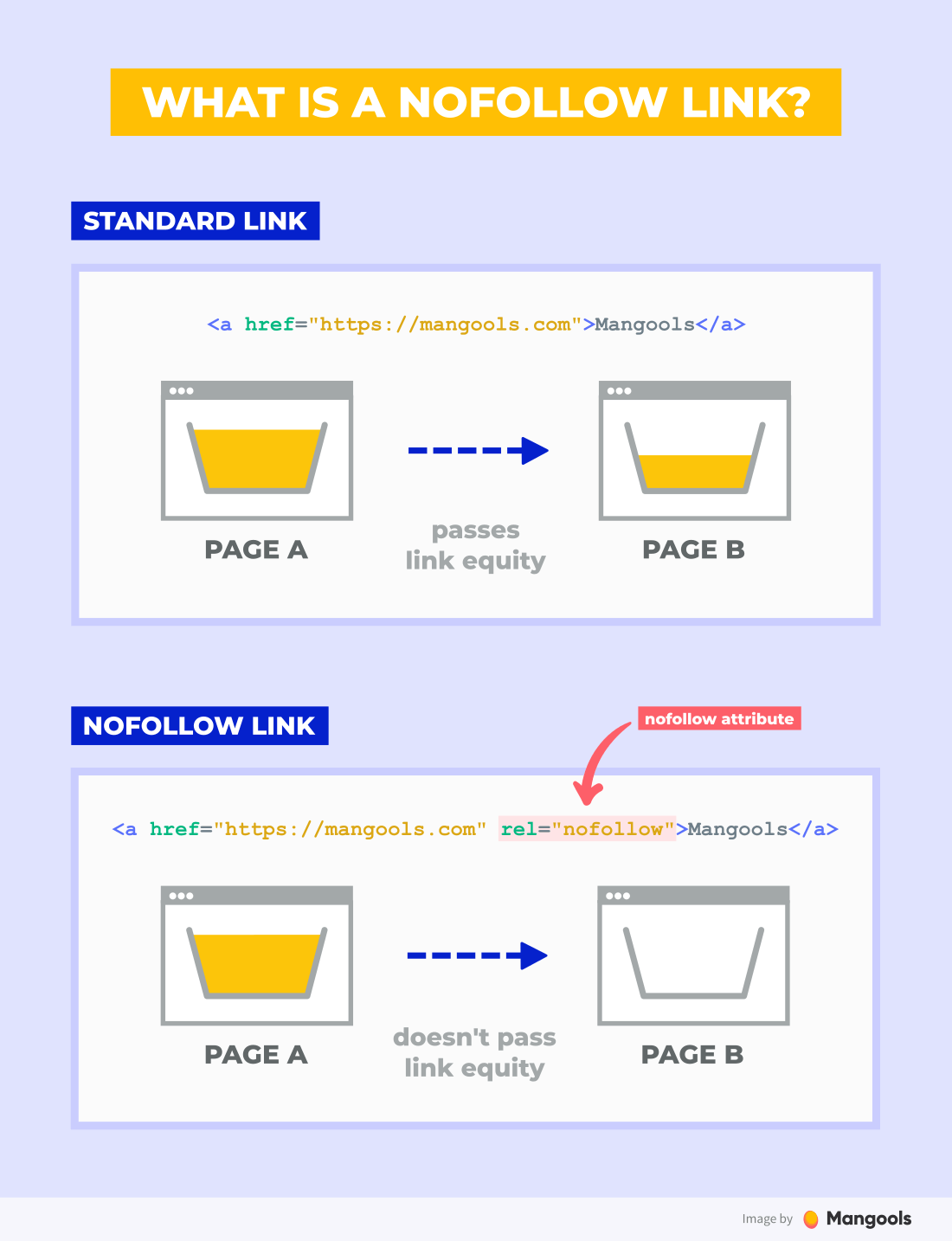

A nofollow link is a link that contains a rel="nofollow" attribute in its HTML code.

It was introduced in 2005 by Google and it tells search engines not to pass link equity to the linked page. The instances when the nofollow attribute can be used include:

- Links in comments – it helps fight comment spam, as it makes links in comments less valuable

- Affiliate and sponsored links – with the nofollow attribute, you won’t violate Google’s rules about buying backlinks

- Links to websites you don’t want to endorse – sometimes you need to link to pages you don’t want to endorse

Note: Technically speaking, there’s no such thing as “dofollow” backlinks as there is no “dofollow” parameter. The term is used colloquially to differentiate links that pass link equity as opposed to nofollow links.
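Checking which links on a page are nofollow comes down to reading the rel attribute, which can hold several space-separated values. Below is a minimal sketch with Python’s built-in `html.parser`. The example URLs are hypothetical.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Sorts the links on a page into standard and nofollow links."""

    def __init__(self):
        super().__init__()
        self.followed: list[str] = []
        self.nofollow: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if not href:
            return
        # rel may contain several values, e.g. rel="nofollow noopener".
        rel_values = (attributes.get("rel") or "").lower().split()
        if "nofollow" in rel_values:
            self.nofollow.append(href)
        else:
            self.followed.append(href)


collector = LinkCollector()
collector.feed(
    '<a href="https://example.com/a">A</a>'
    '<a href="https://example.com/b" rel="nofollow noopener">B</a>'
    '<a href="https://example.com/c" rel="NOFOLLOW">C</a>'
)
print(collector.followed, collector.nofollow)
```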

Although nofollow links don’t pass authority, they can bring other benefits:

- They may serve as a hint for Google – in 2019, Google announced that it would treat nofollow links as hints to better understand and analyze links

- They can bring you traffic – a nofollow link may not bring you any “SEO points” but it can still bring you relevant traffic

- They diversify your link profile – nofollow links are a natural part of every link profile and it would be odd not to have any; see the next point…

Link profile

Link profile is another important SEO term you should know. It is used to describe all the links that point to your website.

The quality of your link profile strongly correlates with your rankings.

What does a good link profile look like?

- Diverse – a healthy link profile is a mix of various types of links (both standard and nofollow) and natural anchor texts

- Quality backlinks – a good link profile consists of quality backlinks from relevant websites

On the other hand, too many low-quality links from spammy websites will be ignored at best and hurt your website at worst.

Anchor text

The anchor text is the visible, clickable part of a hyperlink. It helps crawlers understand what the linked page is about.

We differentiate various types of anchor texts:

- Brand name – e.g. “Mangools”

- Exact match – e.g. “SEO guide”

- Partial match – e.g. “practical SEO tutorial”

- Generic – e.g. “read more”

- Naked URL – e.g. “https://mangools.com/blog/learn-seo/”
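To get an overview of your anchor text mix, you can sort anchors into these buckets. The sketch below is a deliberately simple heuristic. The brand and keyword are inputs you supply, and the list of generic phrases is our own assumption. Here, “partial match” just means the anchor shares at least one word with the keyword; real tools use more nuanced rules.

```python
import re
from collections import Counter

GENERIC_PHRASES = ("click here", "read more", "here", "this post", "learn more")  # assumed list


def classify_anchor(anchor: str, brand: str, keyword: str) -> str:
    """Assign an anchor text to one of the types listed above (or 'other')."""
    text = anchor.strip().lower()
    if re.match(r"^(https?://|www\.)", text):  # checked first: URLs often contain the brand
        return "naked URL"
    if brand.lower() in text:
        return "brand"
    if text == keyword.lower():
        return "exact match"
    if text in GENERIC_PHRASES:
        return "generic"
    if set(keyword.lower().split()) & set(re.findall(r"[a-z0-9]+", text)):
        return "partial match"
    return "other"


anchors = ["Mangools", "SEO guide", "practical SEO tutorial", "read more", "https://mangools.com/blog/learn-seo/"]
profile = Counter(classify_anchor(a, "Mangools", "SEO guide") for a in anchors)
print(profile)
```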

If more pages link to you with relevant terms used in the anchor texts, it may help you rank for these terms in the search engines.

This doesn’t mean you should try to get keyword-stuffed anchor texts at any cost.

Quite the contrary, you should be very careful and aim at a natural mix of various anchor text types.

Any obvious attempt to manipulate the anchor text of your links may be detected and penalized by Google (more on Google penalties here).

Attributes of a valuable backlink

Not all backlinks are created equal.

Besides the obvious differences between internal and external links and standard vs. nofollow links, two backlinks may have different values (and pass different amounts of link equity) based on many other factors.

Here’s what a valuable backlink looks like:

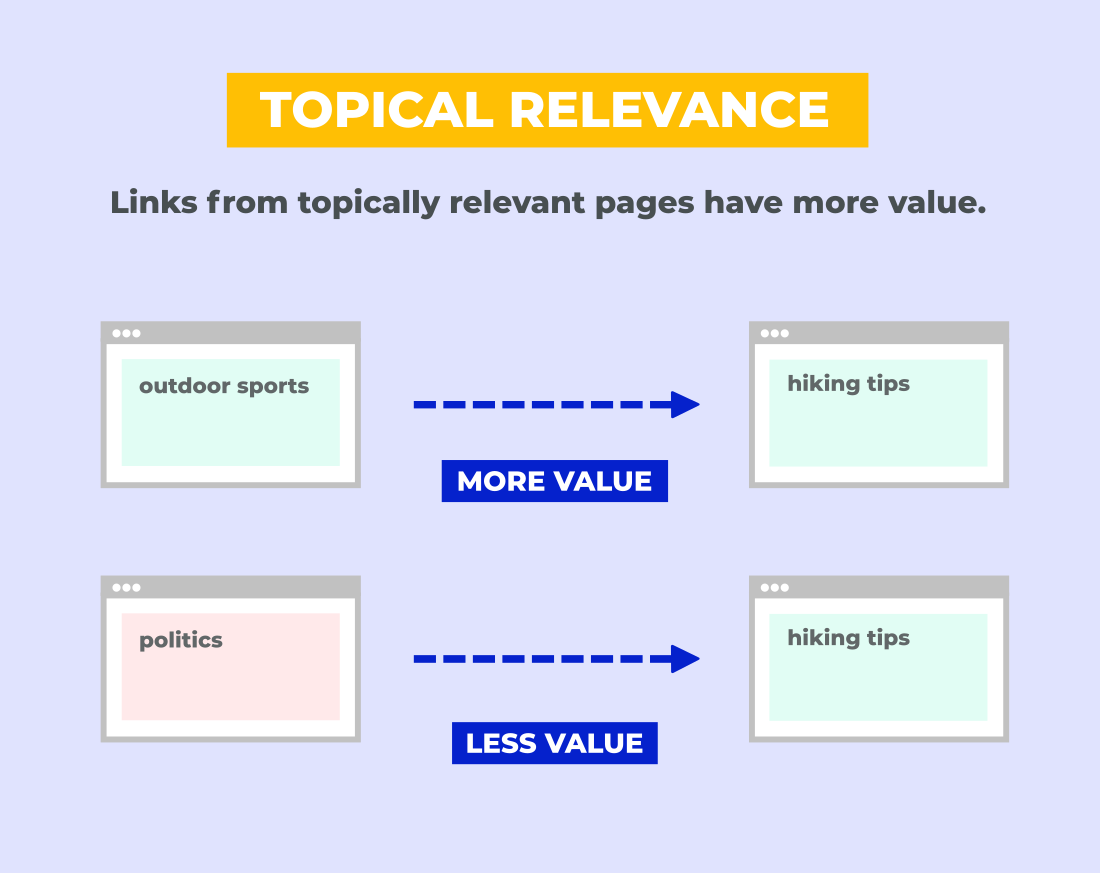

1. Relevant

A valuable backlink is topically relevant. It means the linking page should be about the same or similar topic as the linked page.

For example:

If you have a post about hiking tips and you have two backlinks, one from a post about outdoor sports and the other from a post about politics, the former backlink is much more valuable.

2. From an authoritative website

As we’ve explained previously with PageRank, pages with quality links pointing to them also pass more link equity to your page.

The more authoritative the linking page is, the more value the backlink has for you.

There is no official metric by Google that would represent the authority of a page but there are many metrics by commercial tools that can help you with the estimation.

The most popular are the Domain Authority and Page Authority by Moz.

3. Unique

The uniqueness of a backlink can be discussed on various levels:

a) Website level

A backlink from a website that hasn’t linked to you before is usually more valuable than one from a site that has already linked to you.

It is better to have 10 backlinks from 10 different websites than 50 backlinks from the same site.

Note: This doesn’t mean that having more backlinks from the same site is a bad thing (if it happens naturally). The links just may have a lower value.
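Most backlink tools report this as the number of referring domains. If you have a raw list of backlink URLs, you can count them yourself, as in the minimal sketch below. Note that it treats blog.example.com and example.com as different hosts. This is a simplification: grouping by registrable domain properly requires the Public Suffix List.

```python
from collections import Counter
from urllib.parse import urlparse


def referring_domains(backlink_urls: list[str]) -> Counter:
    """Count backlinks per linking host (a leading 'www.' is ignored)."""
    hosts = []
    for url in backlink_urls:
        host = urlparse(url).hostname or ""  # hostname is already lowercased
        if host.startswith("www."):
            host = host[4:]
        if host:
            hosts.append(host)
    return Counter(hosts)


domains = referring_domains([
    "https://www.example.com/a",
    "https://example.com/b",
    "https://blog.other.org/post",
    "http://third.net/",
])
print(len(domains), domains.most_common(1))  # 3 [('example.com', 2)]
```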

b) Page level

If you have two links from the same page, the one that appears first may have more value than the second one.

(Google used to count only the first anchor text back in 2009. We don’t know how it treats them nowadays, but we may assume this hasn’t changed.)

c) Number of other links

Last but not least, in the PageRank model, a page’s link equity is distributed equally among the pages it links to.

So there’s a big difference between a backlink from a page that links to 3 resources and a backlink from a page that links to 30 resources.
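In the simplified PageRank formula, the equity passed through one link equals the damping factor times the linking page’s PageRank, divided by the page’s number of outgoing links. Below is a worked example. It assumes the usual 0.85 damping factor and an arbitrary PageRank of 1.0 for the linking page.

```python
def equity_per_link(page_rank: float, outgoing_links: int, damping: float = 0.85) -> float:
    """Equity one link passes in the simplified model: damping * PageRank / number of links."""
    return damping * page_rank / outgoing_links


few = equity_per_link(1.0, 3)    # about 0.283
many = equity_per_link(1.0, 30)  # about 0.0283
print(few / many)  # ten times more per link from the page with 3 links
```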

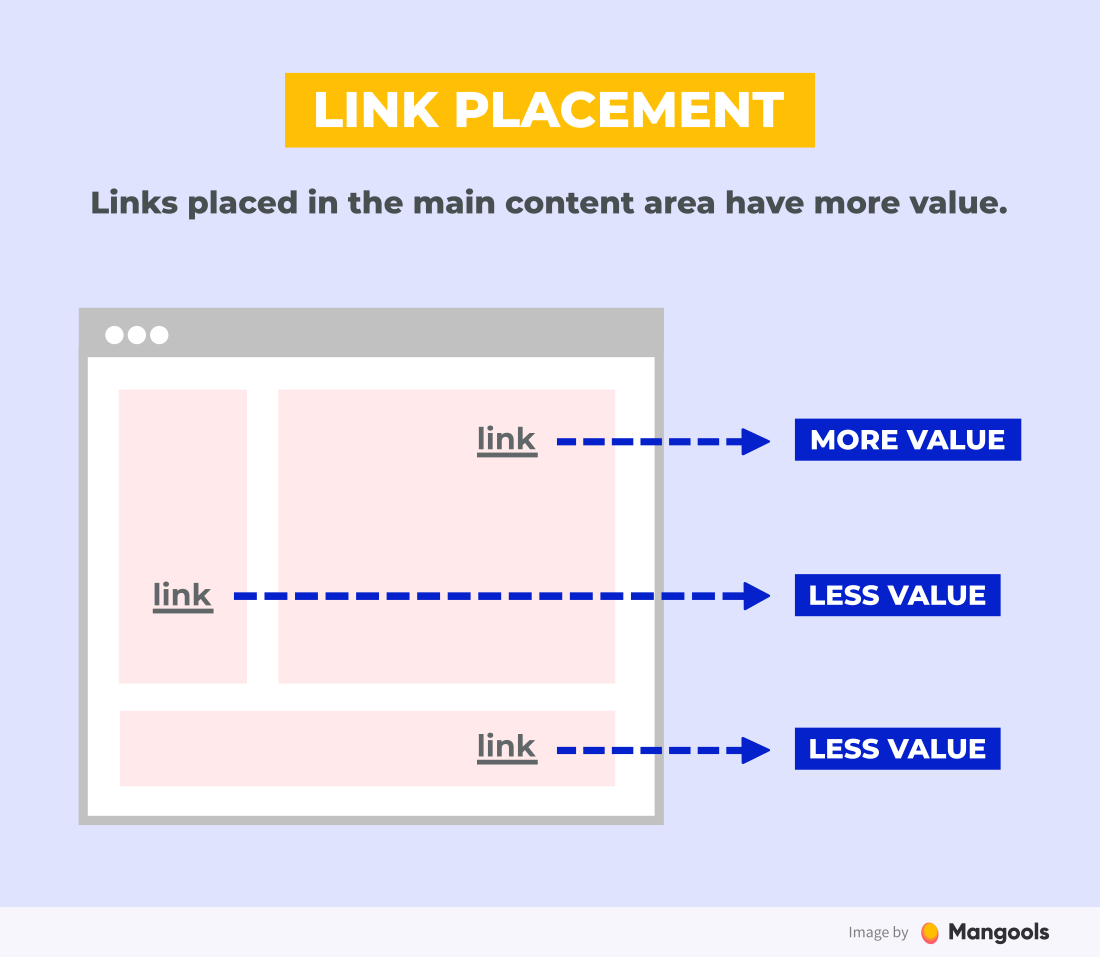

4. Placed near the top in the body

A quality link is the one that can also bring you traffic.

Not only for the obvious reasons (new visitors) but also from the SEO point of view.

Google uses the so-called Reasonable Surfer Model that predicts how likely a user is to click on a link: “The amount of PageRank a link might pass along is based upon the probability that someone might click on a link.”

Matt Cutts, the former head of webspam at Google, said specifically that you should “…put valuable links toward the top of blog posts.”

The more prominently a backlink is placed, the more weight it is given by Google.

In other words, a backlink from the top of an article is better than a backlink from the bottom. And the backlink from the bottom of the article is better than the one in the footer or sidebar.
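To illustrate how this differs from the equal split described earlier, here’s a toy sketch in which a page’s link equity is divided in proportion to each link’s estimated click probability. Google doesn’t publish these probabilities. The weights below are purely hypothetical values chosen to reflect the placement order described above.

```python
def split_by_click_probability(page_equity: float, click_weights: dict[str, float]) -> dict[str, float]:
    """Divide a page's link equity among its links in proportion to click likelihood."""
    total = sum(click_weights.values())
    return {link: page_equity * weight / total for link, weight in click_weights.items()}


# Hypothetical click probabilities for three links on the same page.
weights = {
    "link near the top of the article": 0.5,
    "link at the bottom of the article": 0.3,
    "footer link": 0.2,
}
shares = split_by_click_probability(1.0, weights)
print(shares)  # an equal split would give each link one third
```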

5. Has relevant anchor text

Anchor text plays a significant role in link building.

Namely, a backlink with an anchor text that is relevant to the linked page is more valuable than the one with an irrelevant or generic anchor.

This applies to the text surrounding the link as well, since links may carry context around them.

Should you do link building?

In the ideal world, all you would need to earn quality backlinks would be to create great content.

The reality is a bit more complicated:

- It is very hard (if not impossible) to get quality backlinks without any further effort from your side. Especially for new websites.

- It is very hard (if not impossible) to rank without any backlinks. Especially for new websites.

That’s why link building is a big part of SEO. But it’s also a somewhat controversial topic.

Google is not a big fan of attempts to manipulate the PageRank of your site. Here’s what they state in their Quality Guidelines:

Any links intended to manipulate PageRank or a site’s ranking in Google search results may be considered part of a link scheme and a violation of Google’s Webmaster Guidelines.

Does it mean you can’t try to influence the number and quality of your backlinks in any way?

We don’t think so. Not all forms of link building are spammy or manipulative.

There are plenty of techniques that can help you get backlinks while providing value to the users. They’re just not as instant as writing spam comments or buying a bunch of links from Fiverr.

Should you buy links?

Buying backlinks is a whole different story. Most SEO experts will tell you that it is not worth it.

Here are two main disadvantages of link buying:

- It is risky – buying backlinks is a big no-no for Google, and if they find out, you may get a manual penalty, which is something your website may not recover from

- It is expensive – a quality backlink is costly (starting at around $100/backlink, usually much more); of course, one backlink won’t make you rank so you’ll have to spend a lot to get the results

Yet the link selling industry is alive and well, and buying backlinks is a common practice.

The final decision is up to you. Just remember that it is a very risky strategy that may not pay off in the long run.

Keep these essential points in mind when you step up your link building game:

1. Always evaluate link quality from a long-term perspective.

Build only those links that will have the highest chances of working on a long-term basis. There are two reasons behind this:

First, it’s much more beneficial for your site to acquire long-term links on quality sites because they can keep growing in value over time.

Second, links should help you prove to Google that you’re a trustworthy brand, and links from mediocre sites won’t let you attain that position.

When it comes to evaluating link quality, I always look at a broad scope of metrics like a decent domain rating, level of organic traffic, and positive growth in the number of referring domains, etc. However, the most important criterion is whether or not a site represents a real brand.

2. Keep your link profile as diverse as possible.

We’ve seen cases where marketers have been so focused on building only certain types of links that they have neglected the rest of their options.

I would say that’s one of the shortest routes to a penalty, or at least an invitation for Google to become suspicious of unnatural link building. Being too narrow and obvious about artificial links can lead to a significant drop in rankings and a resulting loss of traffic.

A solid link building strategy should always include a variety of ways to get links, keeping your backlink profile as diverse as possible while still remaining relevant.

Link building strategies

There are plenty of link building techniques, hacks and tips (you can check our big list of 60+ link building techniques for inspiration).

In this SEO guide, we’ll cover the 3 most common strategies that work very well.

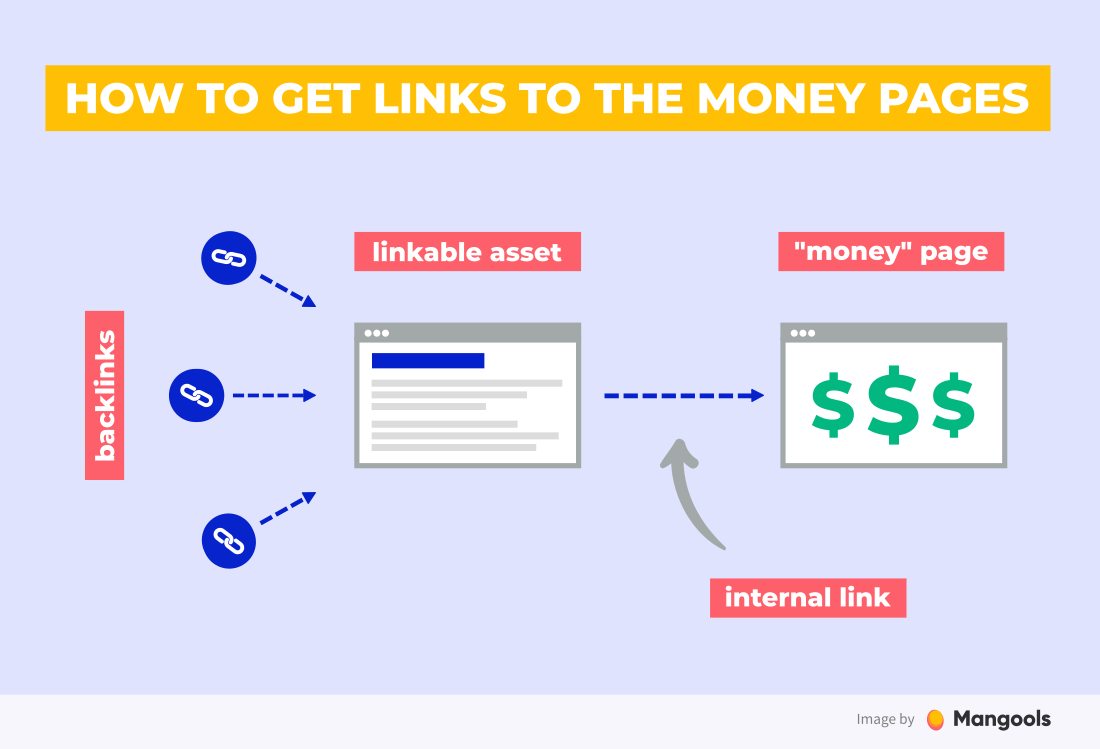

Linkable assets

The most natural link building technique is to create a unique and valuable piece of content – a so-called linkable asset – that will attract backlinks.

A linkable asset can be any piece of content, but certain types of content work particularly well. These include:

- ultimate guides

- big lists

- research with unique data

- resource directories

- free tools

The next step is to find websites that might want to link to you and contact them (more on that in a while).

Tip: Sometimes you don’t even need to ask for a backlink directly. You can contact influencers and experts in your field and ask them for honest feedback.

If your content is really great and unique (and it should be for this strategy to work), they may link to it or share it on social media on their own.

As a bonus, you’ll start some valuable relationships that may be useful in the future.

Once you get backlinks to your linkable asset, you can pass the “link juice” on to your other pages (e.g. “money” pages) through internal links.
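The “link juice” metaphor comes from PageRank. The sketch below runs the classic, simplified PageRank power iteration (damping factor 0.85, as in the original PageRank paper) over a hypothetical four-page graph. It is a teaching model, not how Google ranks pages today, and it treats the external linking site as just another node, which is a simplification. It compares the score of a hypothetical money page (/pricing) with and without an internal link from the linkable asset (/guide).

```python
def pagerank(links, damping=0.85, iterations=50):
    """Classic simplified PageRank computed by power iteration.

    `links` maps each page to the list of pages it links to.
    Pages with no outgoing links spread their score evenly over all pages.
    """
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page starts each round with the "random jump" share.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's score equally among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page: distribute its score over every page.
                for p in pages:
                    new_rank[p] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical site: an external blog links to your linkable asset (/guide).
with_link = {
    "external-blog": ["/guide"],
    "/guide": ["/blog", "/pricing"],  # the asset links to the money page
    "/blog": ["/guide"],
    "/pricing": ["/guide"],
}
# Same site, but /guide no longer links to /pricing.
without_link = {**with_link, "/guide": ["/blog"]}

for label, graph in (("with internal link", with_link), ("without internal link", without_link)):
    print(label, round(pagerank(graph)["/pricing"], 3))
```

In this toy graph, /pricing receives only the baseline “random jump” score when nothing links to it, and a noticeably larger share once /guide links to it.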

Guest posting

Guest posting (or guest blogging) is definitely the most popular link building technique. It is quite simple (although not easy) and scalable.

How guest blogging works (in a nutshell):

The author from Site A writes a guest post for Site B. The post contains a link back to Site A. Site B gets a free piece of content and Site A gets a free backlink. Win-win.

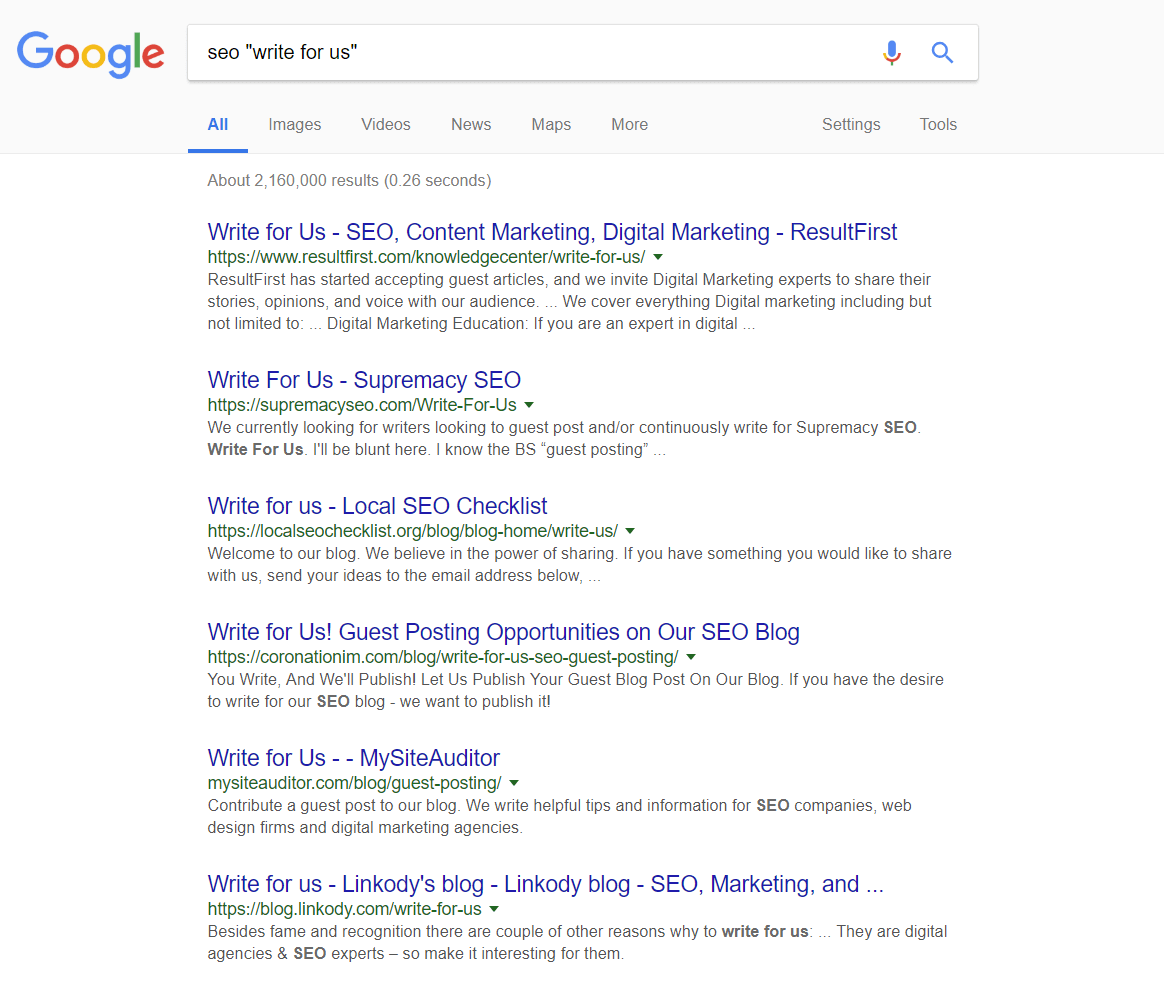

So, how do you find blogs in your niche that accept guest posts?

Use specific search operators to filter out the ones that are relevant to you. For example: “your niche” + “write for us”.
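If you work through many niche keywords, generating these searches can be scripted. A minimal Python sketch that only builds ready-to-open Google search URLs (it fetches nothing): the “write for us” phrase and the `"niche" + "phrase"` format come from the text above, while the other footprint phrases and the function names are illustrative assumptions.

```python
from urllib.parse import urlencode

# Footprint phrases: "write for us" is from the guide; the others are
# hypothetical variations you may want to adapt.
FOOTPRINTS = ("write for us", "guest post guidelines", "become a contributor")


def guest_post_queries(niche, footprints=FOOTPRINTS):
    """Pair the quoted niche keyword with each quoted footprint phrase."""
    return [f'"{niche}" + "{footprint}"' for footprint in footprints]


def google_search_url(query):
    """Build a Google search URL; urlencode escapes quotes and '+' for us."""
    return "https://www.google.com/search?" + urlencode({"q": query})


for query in guest_post_queries("vegan recipes"):
    print(google_search_url(query))
```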

Once you find them, read their guest post guidelines and contact the owner with your guest post pitch.

Competitor’s backlinks

Scanning through the link profiles of your competitors is a great way to find websites that may be willing to link to you. (After all, they already linked to a similar page, right?)

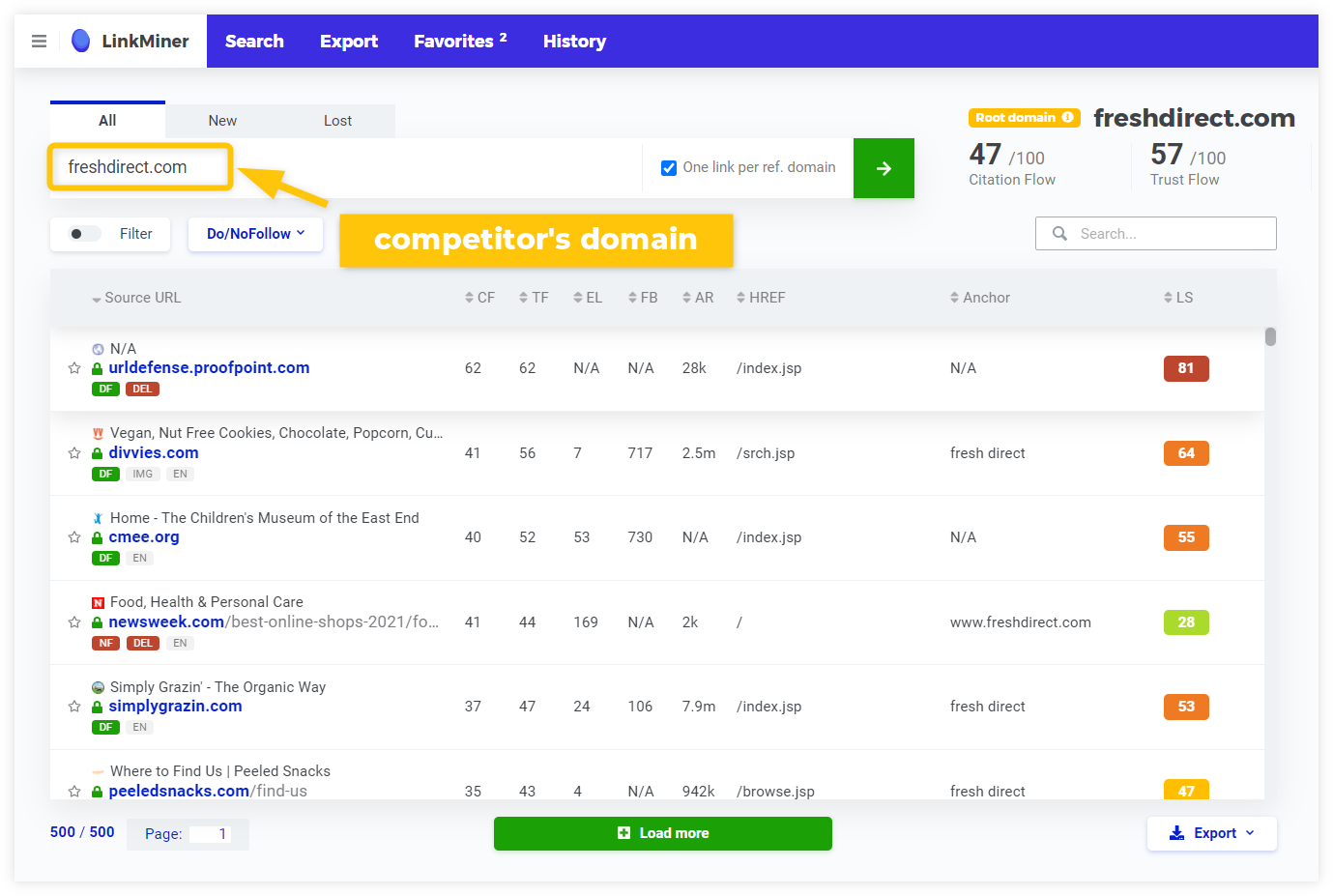

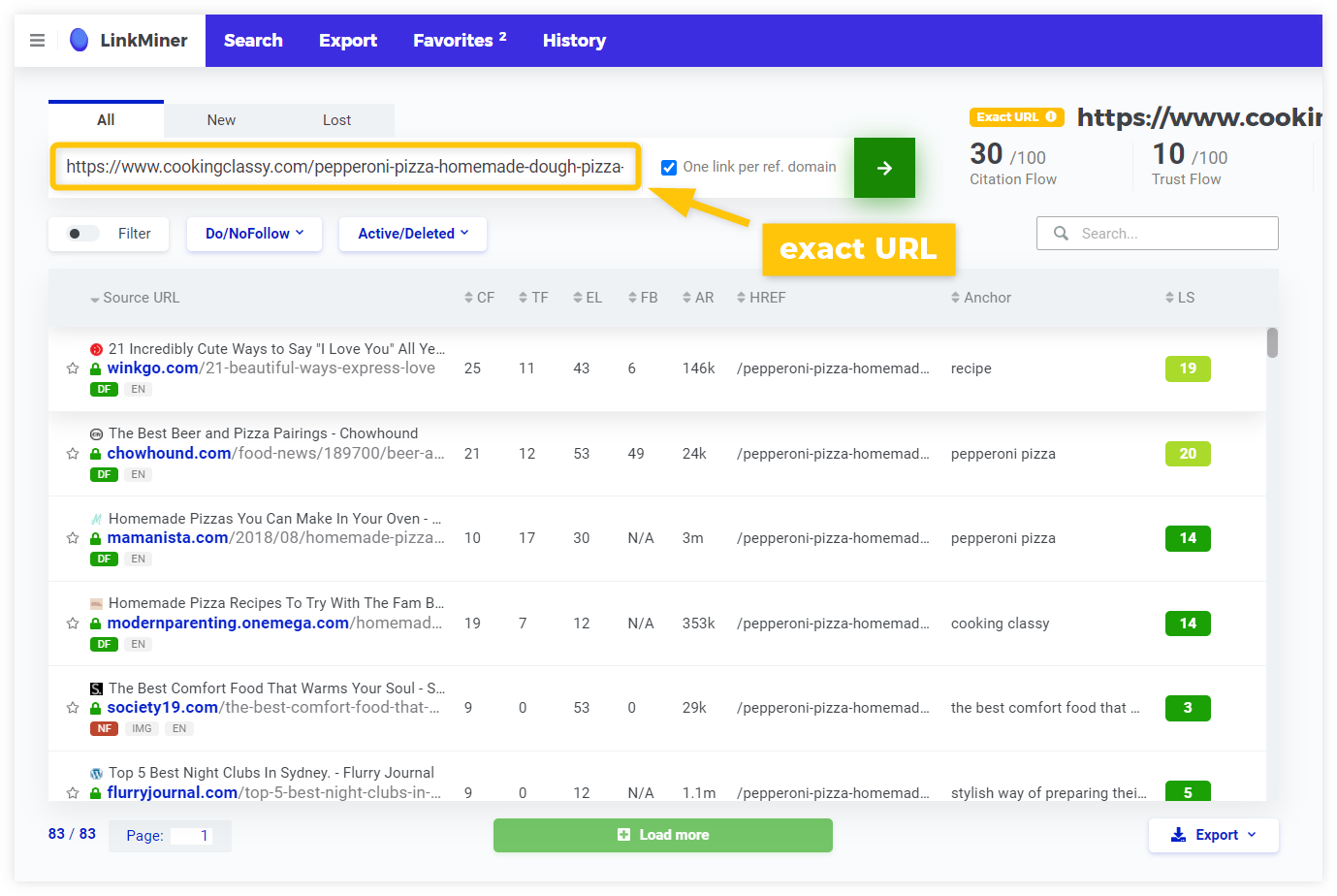

All you need is a backlink analysis tool (like LinkMiner) that will help you find the backlinks of your competitors.

You can either start by entering the domain:

…or focus on a specific URL (e.g. your competitor’s post on the same topic that you want to rank for):

The tool will show you all the backlinks along with some additional information about them (authority, anchor text, attributes, etc.).

When analyzing your competitor’s backlinks, you should consider:

- Link relevance – Would a backlink from this page be relevant to your content?

- Link strength – What is the authority of the linking page?

- Difficulty – Will you be able to get the same backlink?

The next step is the so-called email outreach – contacting the website owners and asking them either to replace your competitor’s backlink with yours (the idea behind the Skyscraper Technique) or to add your link as an additional resource.

The Skyscraper Technique

The Skyscraper Technique (named by Brian Dean from Backlinko) works like this:

- Find the best article on a certain topic and write something even better (see the 10x content technique).

- Then contact all the websites linking to your competitor and ask them to link to you instead.

Other common link building techniques

- Broken link building – find websites with inactive links and suggest your content as a replacement

- Brand mentions – find unlinked mentions of your brand (you can use Google Alerts) and ask the site owners to turn the mention into a link

- Testimonials – write a testimonial for a product or service and get your site linked next to your name

- Roundups, expert insights – get featured in niche roundups or articles that require expert insights (see HARO technique)

- Social backlinks (nofollow) – share your content on social media, promote it on Facebook/Twitter/Pinterest, join discussions, comment on relevant posts

- Forums and Q&A sites (nofollow) – a well-placed link with added value on Quora or Reddit can drive a lot of traffic over time; avoid mindless spam at all costs
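The first step of broken link building, checking a page for dead outbound links, is easy to script. A rough sketch using only Python’s standard library, assuming you already have the HTML of a page you might pitch. The function names are hypothetical, and the check uses HEAD requests, which some servers reject (e.g. with 405) even when the page works, so verify every hit by hand before reaching out.

```python
from http.client import HTTPException
from html.parser import HTMLParser
from typing import Callable, List, Optional
from urllib.error import HTTPError
from urllib.request import Request, urlopen


class LinkCollector(HTMLParser):
    """Collects absolute http(s) URLs from <a href="..."> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href and href.startswith(("http://", "https://")):
                self.links.append(href)


def http_status(url: str, timeout: float = 10.0) -> Optional[int]:
    """Status code of a HEAD request, or None if the URL cannot be reached."""
    try:
        with urlopen(Request(url, method="HEAD"), timeout=timeout) as response:
            return response.status
    except HTTPError as error:  # 4xx/5xx responses raise HTTPError
        return error.code
    except (OSError, ValueError, HTTPException):  # DNS failure, timeout, bad URL
        return None


def find_broken_links(
    html: str, status_of: Callable[[str], Optional[int]] = http_status
) -> List[str]:
    """Outbound links that return an error status (>= 400) or do not respond."""
    collector = LinkCollector()
    collector.feed(html)
    broken = []
    for url in collector.links:
        status = status_of(url)
        if status is None or status >= 400:
            broken.append(url)
    return broken
```

Passing a different `status_of` function (for example a dictionary’s `get` method) lets you try the logic without making any network requests.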

Chapter 7

Analytics & metrics

In the last chapter of our complete SEO guide, we’ll take a look at the essential data analytics tools and metrics every website owner should know and use.

Chapter navigation

Website analytics is a crucial part of search engine optimization.

The saying “What gets measured gets improved” is 100% true in SEO. Using the right tools to track and analyze your website’s performance will help you answer important SEO questions, such as:

- What keywords you rank for in Google

- What the click-through rate of your pages in search results is

- Which countries your visitors come from

- Which channels bring you the most traffic

- How your visitors engage with your pages

- Which pages are your most visited

To help you understand the basics, we’ll cover 3 important analytics tools every website owner should have: Google Search Console, Google Analytics and a rank tracking tool.

Google Search Console

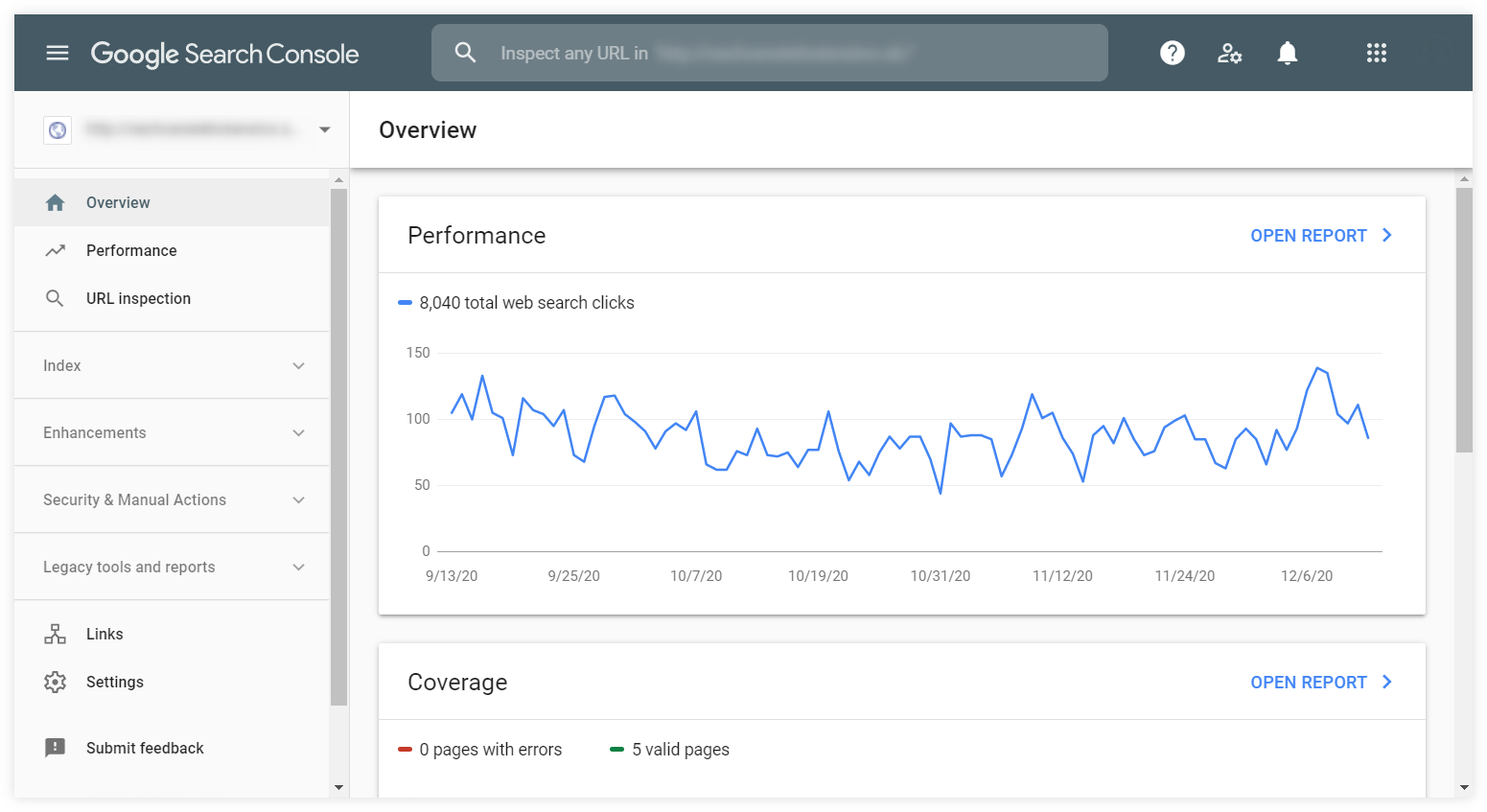

Search Console is a free online tool (or a series of tools) by Google that helps webmasters see how their site is performing in Google Search and optimize the visibility of their websites.

Google Search Console is an essential tool that is hard to replace. Every website owner should use it.

Note: To set up Google Search Console, you first need to verify ownership of the website. You’ll find a detailed step-by-step walkthrough on how to do it in our simple guide to Google Search Console.

Search Console consists of several reports, ranging from a basic overview of your website’s performance to reports of critical issues that you should address:

- Performance – gives you insights into how your site performs in Google Search results

- URL inspection – gives you information about Google’s indexed version of any of your pages

- Coverage – shows what pages are indexed on Google and reports any issues with indexation

- Sitemaps – enables you to add a new sitemap and see your previous submissions and any problems with them

- Removals – serves as a tool to temporarily block any page from search results

- Enhancements – provides information about your enhancements (such as AMP, sitelinks, etc.) and user experience and usability issues

- Manual actions – shows whether you have any manual penalty from Google

- Security issues – reports any detected security issues on your site

- Links – provides a basic overview of your links (both external and internal)

The report you’ll spend the most time with, and the one we’ll take a closer look at in this chapter, is the Performance report.

Performance report

The Performance report will give you a detailed overview of your site’s performance in Google Search.

It consists of 3 main areas you can configure to see the data you need:

- Top filter – allows you to select the search type, date range and filter the dimensions

- Metrics chart – shows the chart with the 4 main metrics (clicks, impressions, average CTR and average position); you can select any combination of the metrics by clicking on them

- Dimension tabs with data table – allows you to select the preferred dimension and see the data in a simple table

To get a quick overview of how the Performance report works, you can watch this short educational video by Google Search Central:

Besides the basic (but very useful) data like top queries or top pages, the Performance report is a goldmine of various deeper insights into your site’s search performance.

Let’s take a look at a few specific use cases:

Troubleshoot performance drops

When analyzing any change in your performance (e.g. a sudden drop in clicks), try to find the root cause by checking the individual dimensions (queries, pages, countries, devices) to see what exactly caused the change.

Sometimes the overall performance can be influenced dramatically by a change in a specific country, a ranking drop of a single big keyword or a performance issue on a specific type of device.

Tip: Create a comparison of two subsequent time frames (Top filter – Date – Compare) to see the biggest changes when put in contrast with the previous time period.
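If you export the query data for both periods, the same comparison can be reproduced outside Search Console. A minimal Python sketch, assuming each period’s clicks are already loaded into a plain dictionary keyed by query; the function name and sample numbers are hypothetical, and the same approach works for pages, countries or devices.

```python
from typing import Dict, List, Tuple


def biggest_changes(
    current: Dict[str, int], previous: Dict[str, int], top: int = 5
) -> List[Tuple[str, int]]:
    """Per-query change in clicks between two periods, biggest drops first."""
    queries = set(current) | set(previous)
    # A query missing from one period counts as 0 clicks in that period.
    deltas = [(q, current.get(q, 0) - previous.get(q, 0)) for q in queries]
    deltas.sort(key=lambda item: (item[1], item[0]))
    return deltas[:top]


# Hypothetical exports of two consecutive periods.
previous_clicks = {"seo guide": 1200, "keyword research": 300, "backlinks": 150}
current_clicks = {"seo guide": 400, "keyword research": 310, "backlinks": 140}

for query, delta in biggest_changes(current_clicks, previous_clicks):
    print(f"{query}: {delta:+d} clicks")
```

Here a single query accounts for almost the entire drop, which is exactly the kind of pattern the tip above helps you spot.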

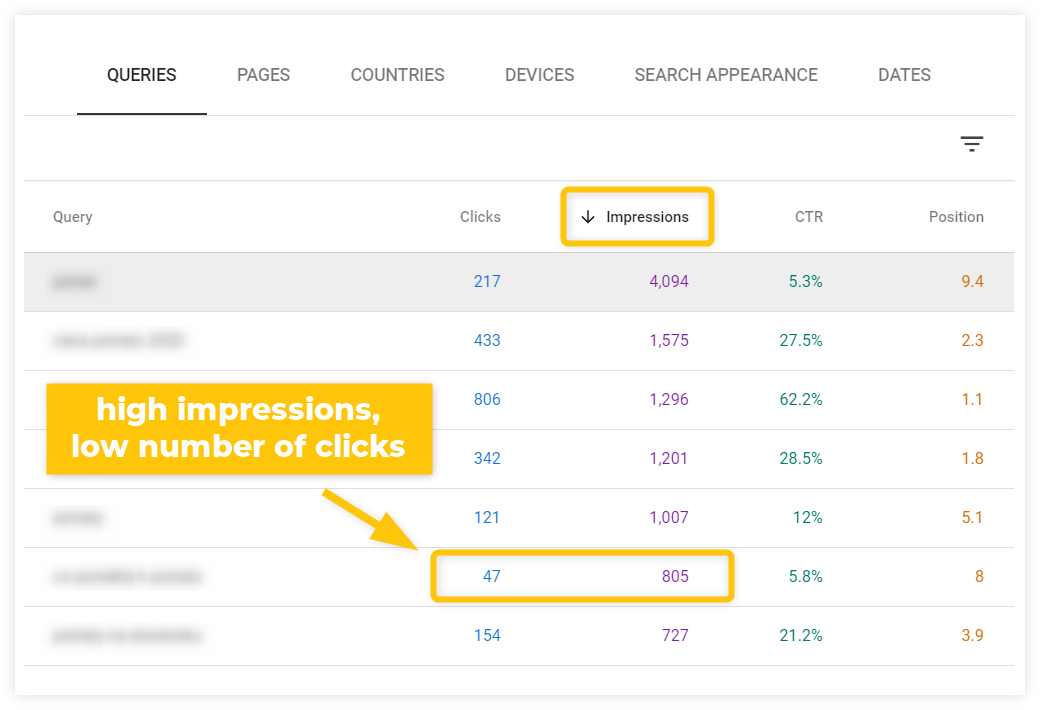

Find pages that need CTR optimization

Look for queries with a high number of impressions but a low click-through rate (either using the Average CTR metric or by comparing Impressions and Clicks).

There’s a high chance you can improve the CTR by writing a better title tag and meta description for the page that ranks for the query.
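CTR is simply clicks divided by impressions, so spotting candidates in exported query data takes a few lines. A minimal sketch; the thresholds (at least 1,000 impressions, CTR below 2%) and the sample rows are hypothetical and should be adjusted to your site’s typical numbers.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    query: str
    clicks: int
    impressions: int

    @property
    def ctr(self) -> float:
        """Click-through rate = clicks / impressions (0 if no impressions)."""
        return self.clicks / self.impressions if self.impressions else 0.0


def low_ctr_queries(rows, min_impressions=1000, max_ctr=0.02):
    """Queries seen often in search results but rarely clicked, most impressions first."""
    candidates = [r for r in rows if r.impressions >= min_impressions and r.ctr < max_ctr]
    return sorted(candidates, key=lambda r: r.impressions, reverse=True)


rows = [
    QueryRow("seo basics", clicks=30, impressions=5000),
    QueryRow("what is seo", clicks=400, impressions=4000),
    QueryRow("seo tips", clicks=5, impressions=200),
    QueryRow("seo checklist", clicks=15, impressions=1500),
]

for row in low_ctr_queries(rows):
    print(f"{row.query}: {row.ctr:.1%} CTR on {row.impressions} impressions")
```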

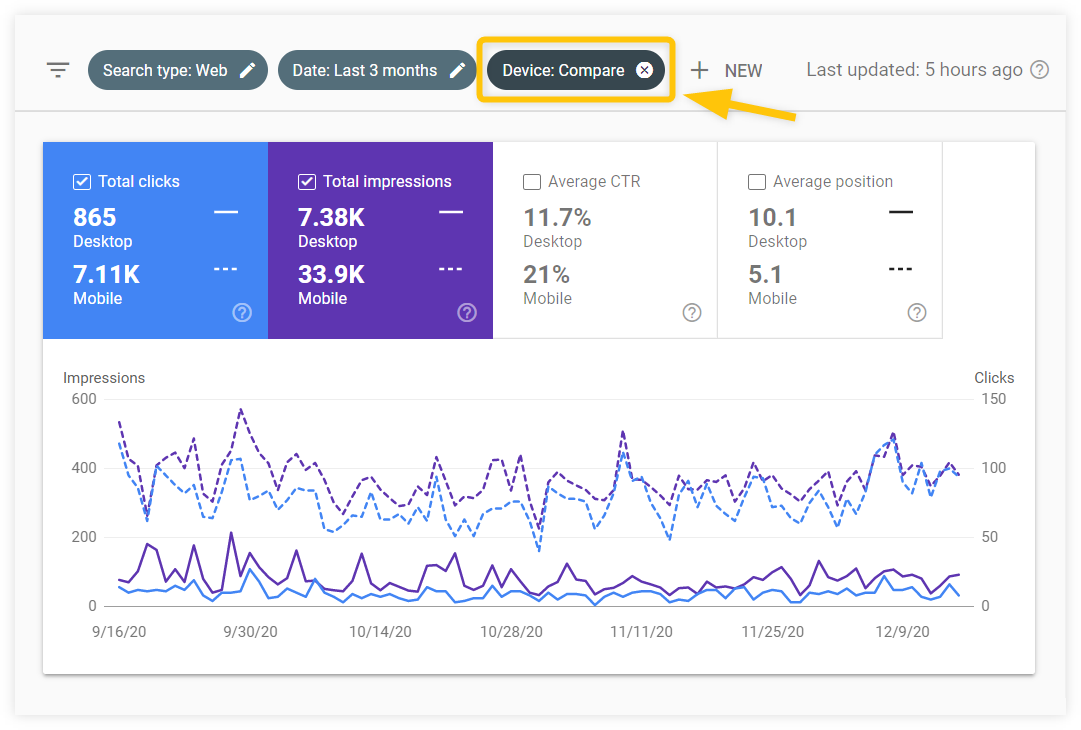

Compare your performance on desktop and mobile devices

In the top filter, select the device dimension and choose Compare instead of Filter.

You’ll be able to see the comparison of your performance on desktop and mobile devices and take appropriate steps when it comes to further optimization of your site.

Find low-hanging keywords you can rank for easily

In the dimensions table, use the Position filter to only show queries with an average position greater than 20 (i.e., keywords you rank for on the 3rd SERP or beyond).

Once you find such a keyword, switch to the Pages tab to see the page that ranks for it. These are pages that may need just a little optimization to rank better.

See whether you can improve the page that targets the keyword, or create a new piece of content that would focus on the keyword.
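The same filter can be applied to exported query and page data. In this sketch, `serp_page` assumes 10 organic results per SERP, which is a simplification since real results pages vary in layout. The names and sample rows are hypothetical, and sorting by impressions is just one possible way to prioritize.

```python
import math
from typing import NamedTuple


class Row(NamedTuple):
    query: str
    page: str
    position: float  # average position
    impressions: int


def serp_page(position: float, results_per_page: int = 10) -> int:
    """SERP number for an average position, assuming 10 organic results per page."""
    return math.ceil(position / results_per_page)


def low_hanging(rows, min_position=20.0):
    """Queries ranking below the threshold position, highest demand first."""
    hits = [r for r in rows if r.position > min_position]
    return sorted(hits, key=lambda r: r.impressions, reverse=True)


rows = [
    Row("seo audit", "/audit", 22.5, 900),
    Row("seo basics", "/guide", 4.1, 5000),
    Row("local seo", "/local", 31.0, 1200),
]

for r in low_hanging(rows):
    print(f"{r.query} -> {r.page} (SERP {serp_page(r.position)})")
```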

Compare branded and non-branded searches

You can use the top filter (Query – “Queries containing”) to only show queries that include your brand name.

You’ll see how much of your search traffic comes from branded keywords and how these keywords perform in Google Search.
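To put a number on the branded share, sum the clicks of queries that contain your brand name and divide by total clicks. A minimal sketch with a hypothetical brand (“acme”) and made-up rows; in practice you would also add common misspellings of your brand to `brand_terms`.

```python
from typing import Iterable, List, Tuple


def branded_share(rows: Iterable[Tuple[str, int]], brand_terms: List[str]) -> float:
    """Share of clicks from queries containing any brand term (case-insensitive)."""
    rows = list(rows)
    terms = [t.lower() for t in brand_terms]
    total = sum(clicks for _, clicks in rows)
    branded = sum(
        clicks for query, clicks in rows if any(t in query.lower() for t in terms)
    )
    return branded / total if total else 0.0


rows = [("acme seo tool", 600), ("Acme pricing", 150), ("seo basics", 250)]
print(f"Branded share of clicks: {branded_share(rows, ['acme']):.0%}")
```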

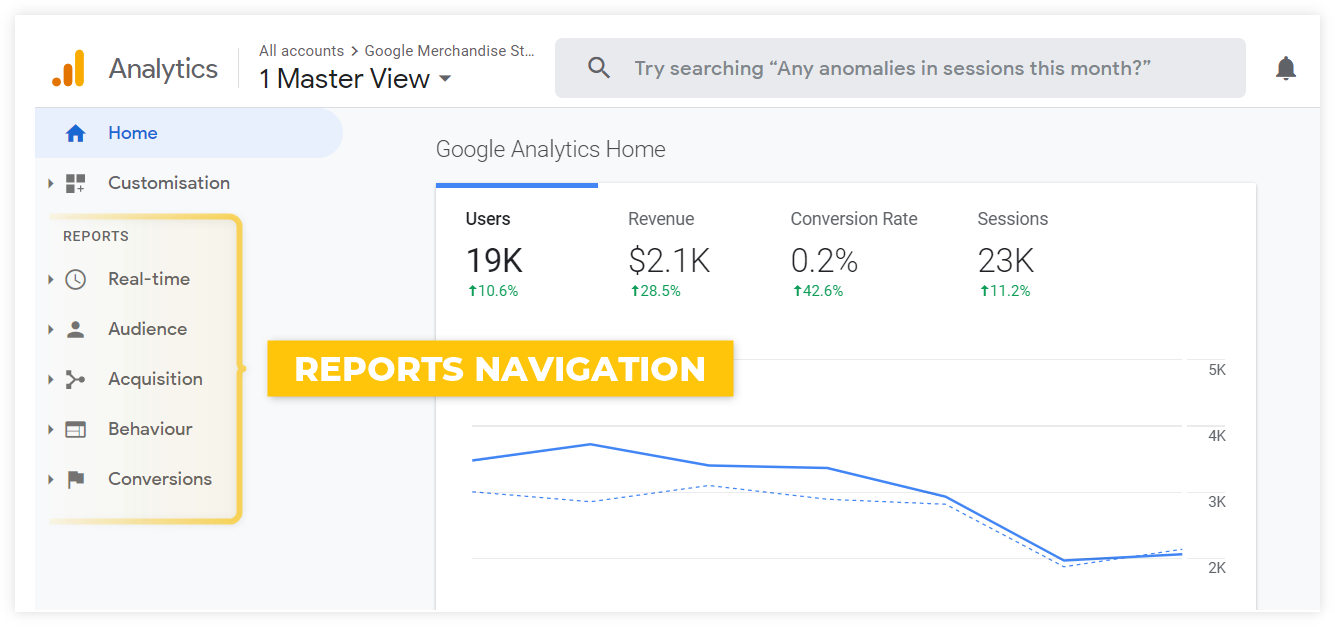

Google Analytics

Google Analytics is a free website analytics tool that tracks and reports website traffic and user behavior. It is a powerful tool that offers a ton of useful data.

The problem is that many beginners feel lost and overwhelmed when opening their GA account.

It’s completely normal. There are too many reports, too many metrics, too many different graphs and complicated navigation.

Note: Setting up Google Analytics is not complicated. You just have to insert a special piece of code on your website. Read this detailed guide on how to do it.

So, how do you learn to use Google Analytics?

One step at a time.

What kind of data can you find in GA?

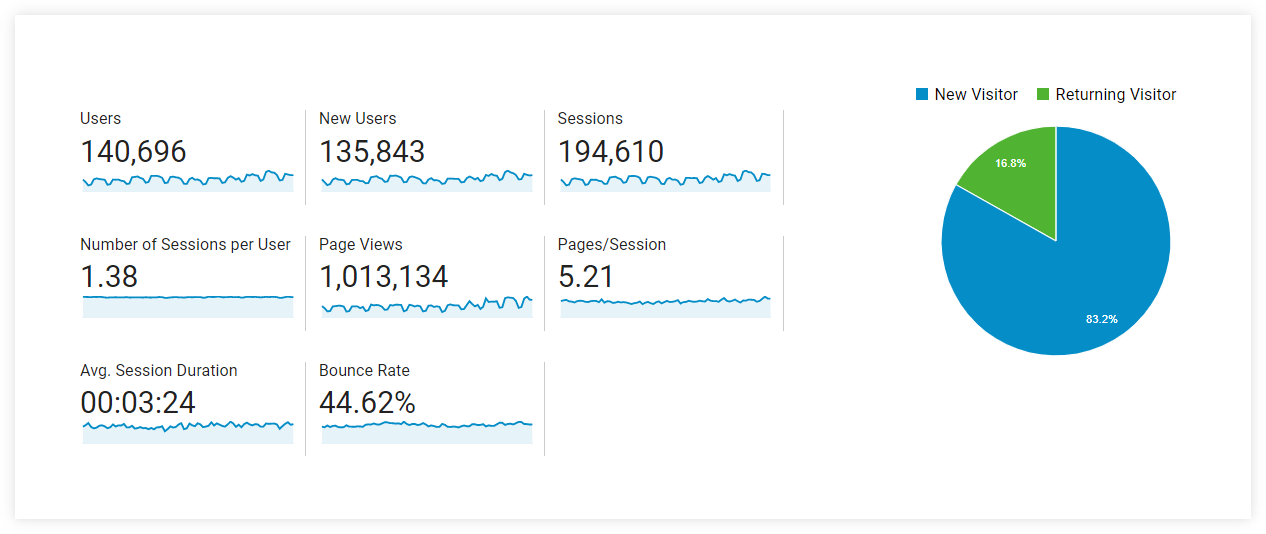

Google Analytics consists of various reports.

The home dashboard will show you a quick overview of the basic performance metrics. To see more, you can navigate to the detailed reports.

The reports are divided into 5 main categories based on what kind of data they provide. You can find them in the left menu.

- Real-time – the users’ activity as it happens in real time

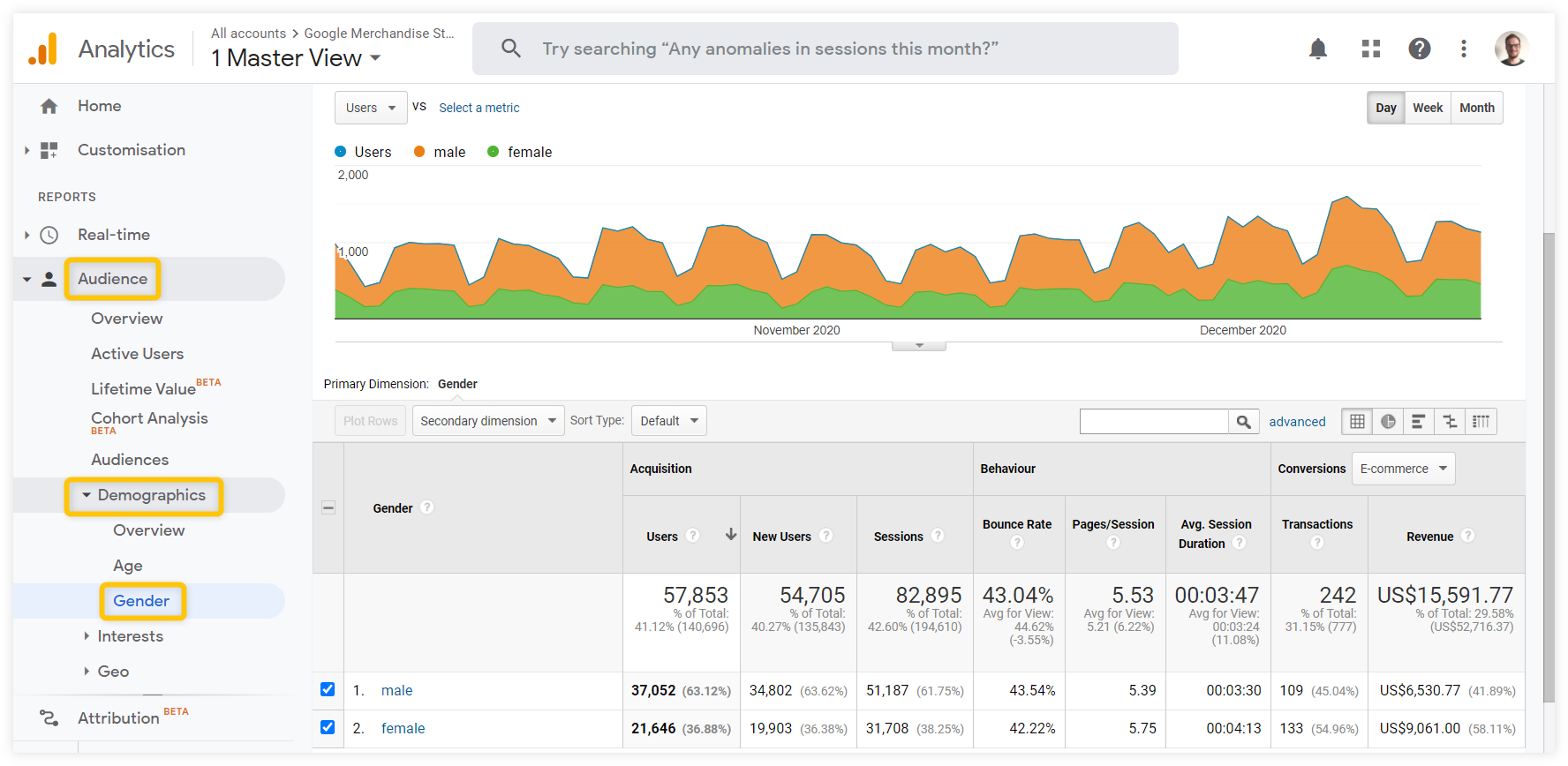

- Audience – everything you need to know about your visitors (demographics, interests, technology used, etc.)

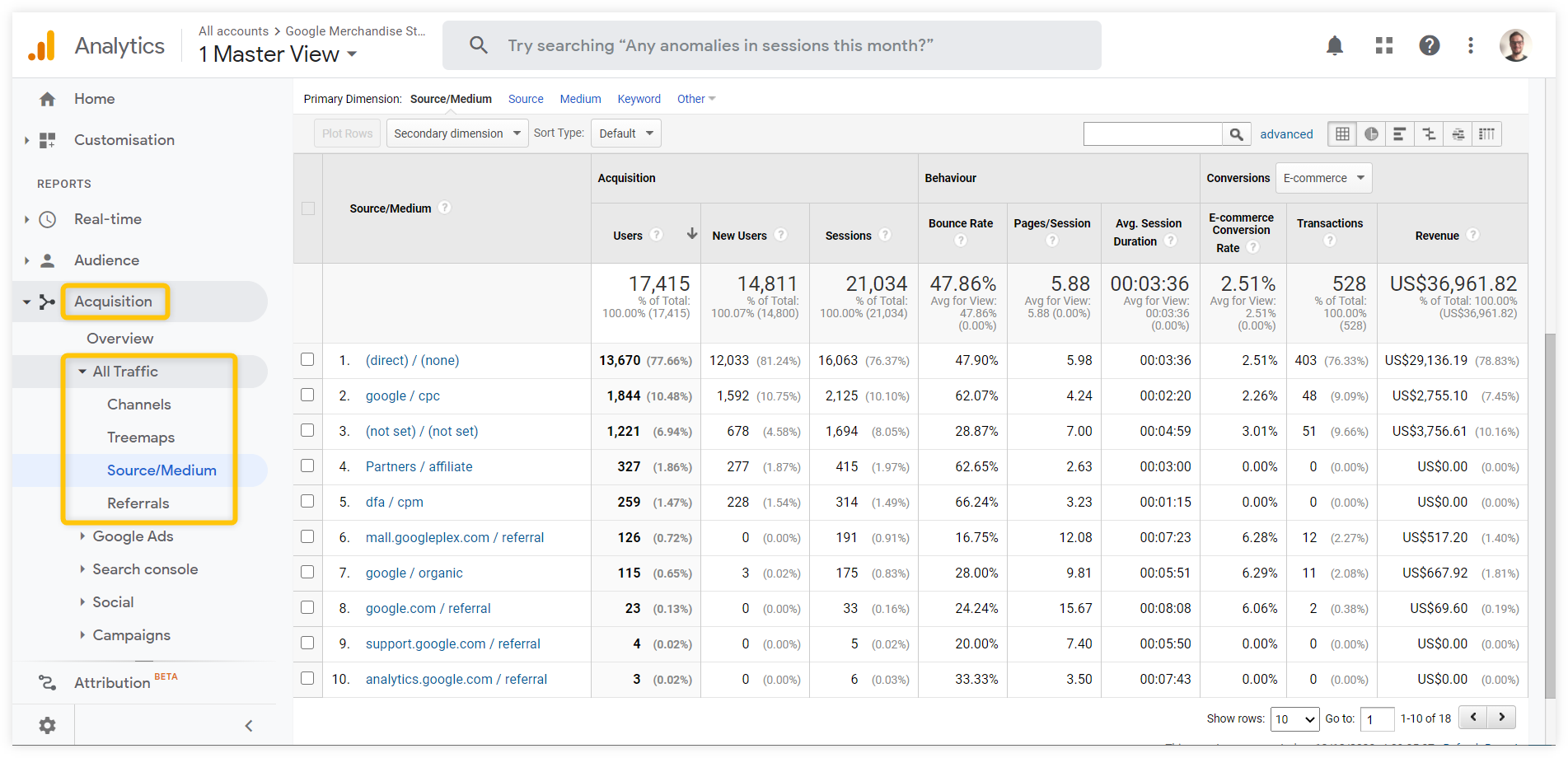

- Acquisition – where your traffic comes from (traffic channels, top referring pages, etc.)

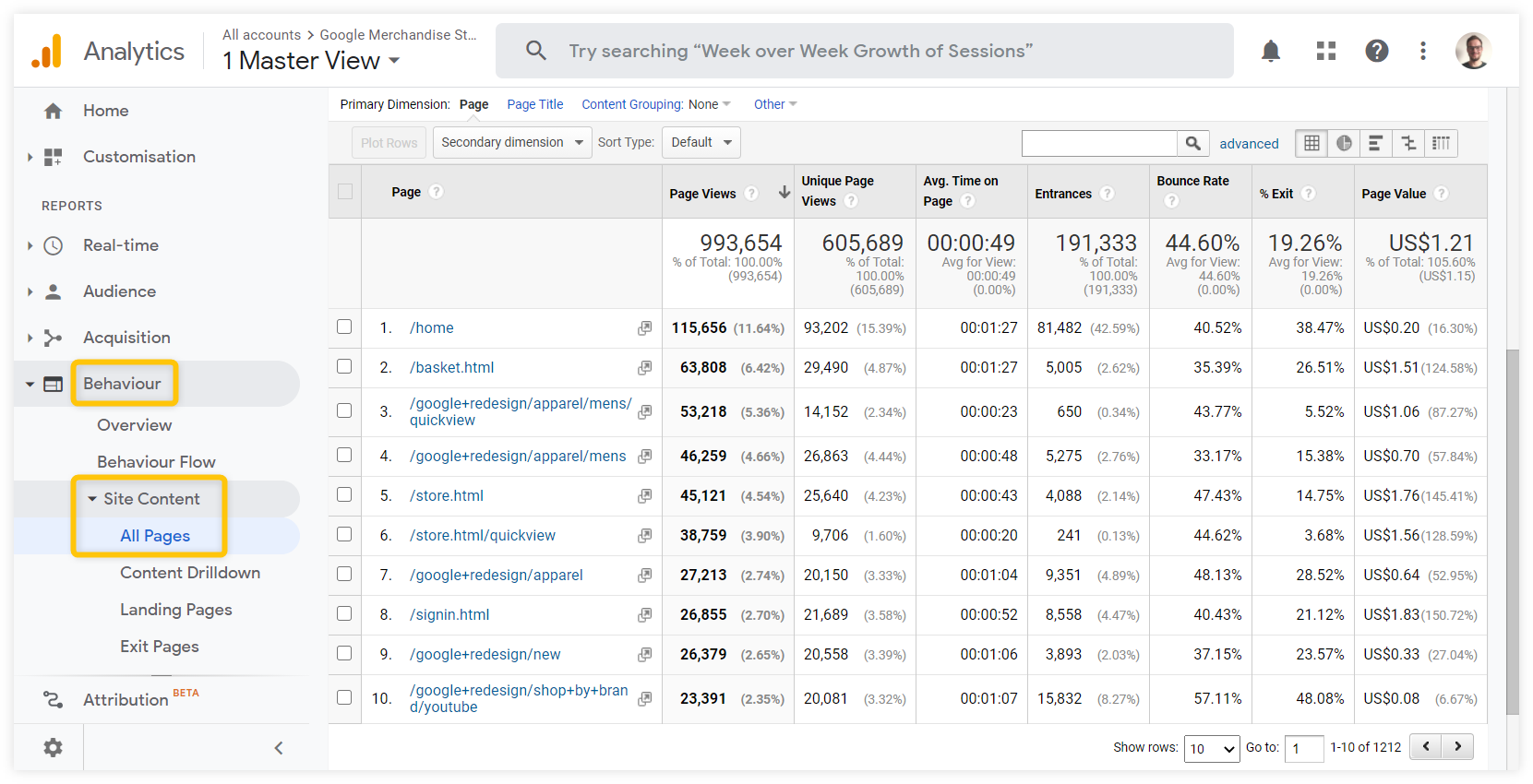

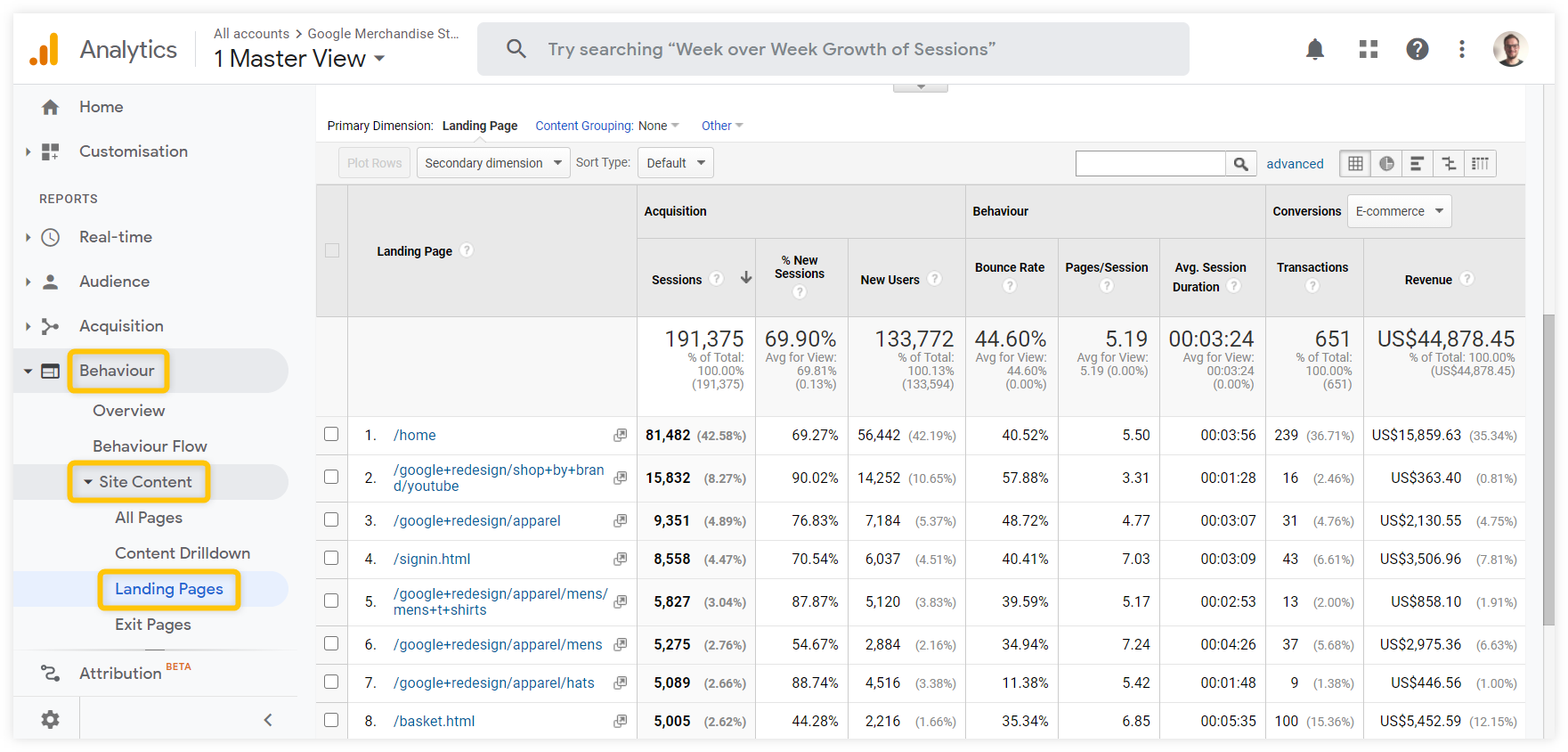

- Behavior – what the visitors do on your website (what pages they visit and how they engage with them)

- Conversions – details on how your visitors convert (in accordance with your goals; e.g. purchase, subscription, affiliate link click, etc.)

Data segmentation

In each report, you can further segment the data to see detailed results based on your specific needs.

Segmentation and filtering are crucial in selecting the right data for your analysis.

Date range

Selecting the right time frame is the first thing you should do when working with any analytics tool.

You can find the date range selector at the top right of every report. It enables you to look at the data within various time frames or compare two time periods.

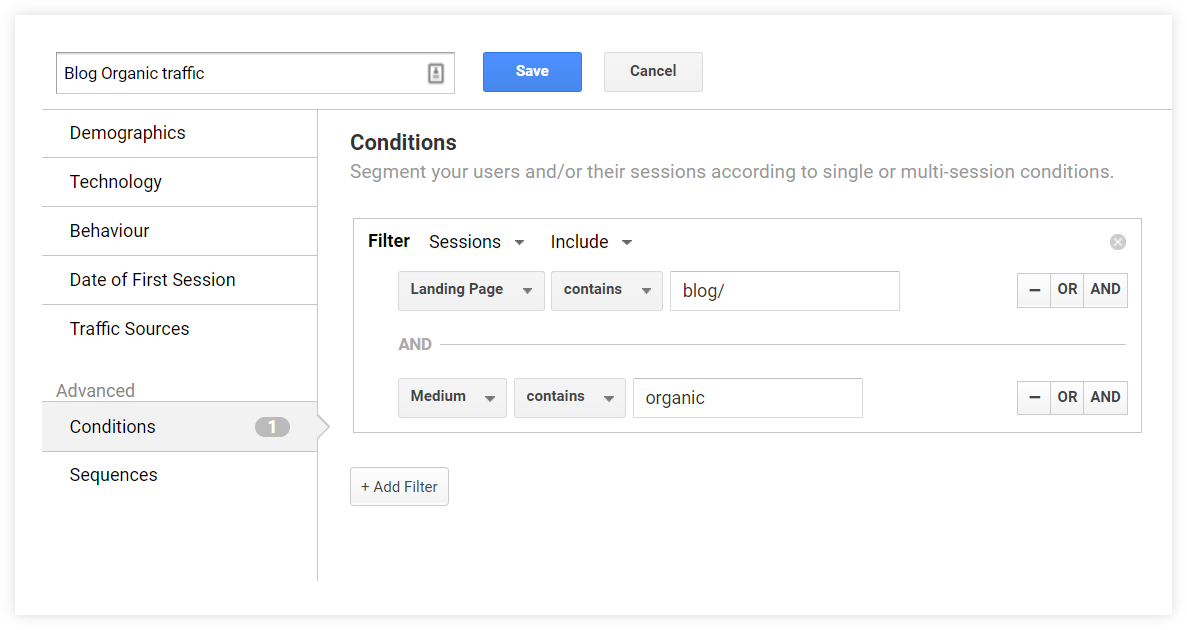

Segments

A segment is any subset of your data in Google Analytics. You can select one of the default segments (e.g. Organic Traffic, Mobile Traffic) or create and save a new one to speed up your workflow.

For example: You can create a specific segment that will show only your organic blog traffic so that you can analyze the organic reach of your articles separately from other pages.

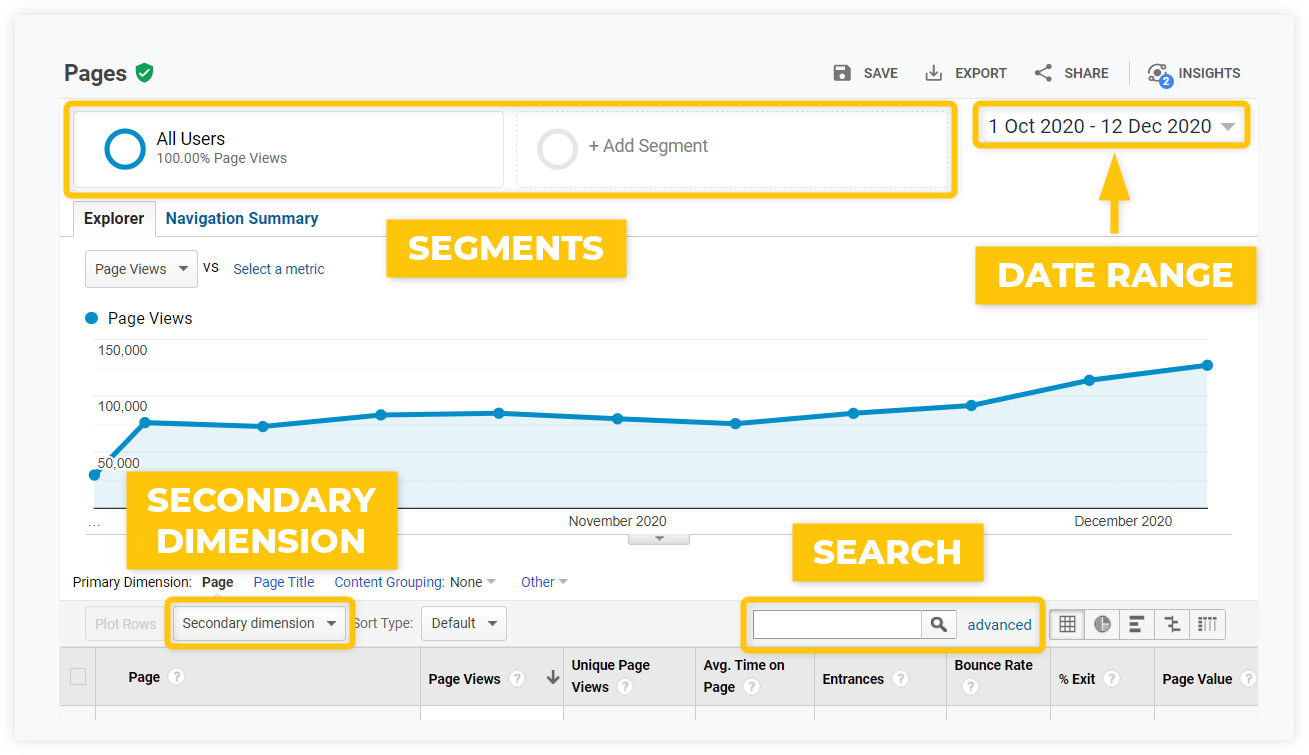

Here’s what the setting would look like:

Secondary dimension

The secondary dimension is an additional dimension you can add to the primary dimension of the given report.

For example: In the All Pages report showing the top pages, you can add the User Type secondary dimension to see the proportion of new vs. returning visitors for each page.
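Conceptually, a secondary dimension breaks each row of the report down by a second attribute. The sketch below reproduces a new vs. returning breakdown per page from raw (page, user type) pairs, counting one pair per session. The sample data is hypothetical and the user type labels are illustrative; Google Analytics computes these reports for you.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple


def user_type_share(sessions: Iterable[Tuple[str, str]]) -> Dict[str, Dict[str, float]]:
    """For each page (primary dimension), the share of each user type (secondary)."""
    counts: Dict[str, Counter] = defaultdict(Counter)
    for page, user_type in sessions:
        counts[page][user_type] += 1
    return {
        page: {ut: n / sum(c.values()) for ut, n in c.items()}
        for page, c in counts.items()
    }


sessions = (
    [("/blog", "New Visitor")] * 3
    + [("/blog", "Returning Visitor")]
    + [("/pricing", "Returning Visitor")] * 2
)

for page, shares in user_type_share(sessions).items():
    print(page, {ut: f"{share:.0%}" for ut, share in shares.items()})
```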

Search

There’s a simple search option above every data table to narrow down the results.

The most useful reports

To describe all the features and data reports Google Analytics has to offer, we would need a separate ultimate guide.

But the truth is, the vast majority of beginners will do just fine sticking to a couple of basic reports.